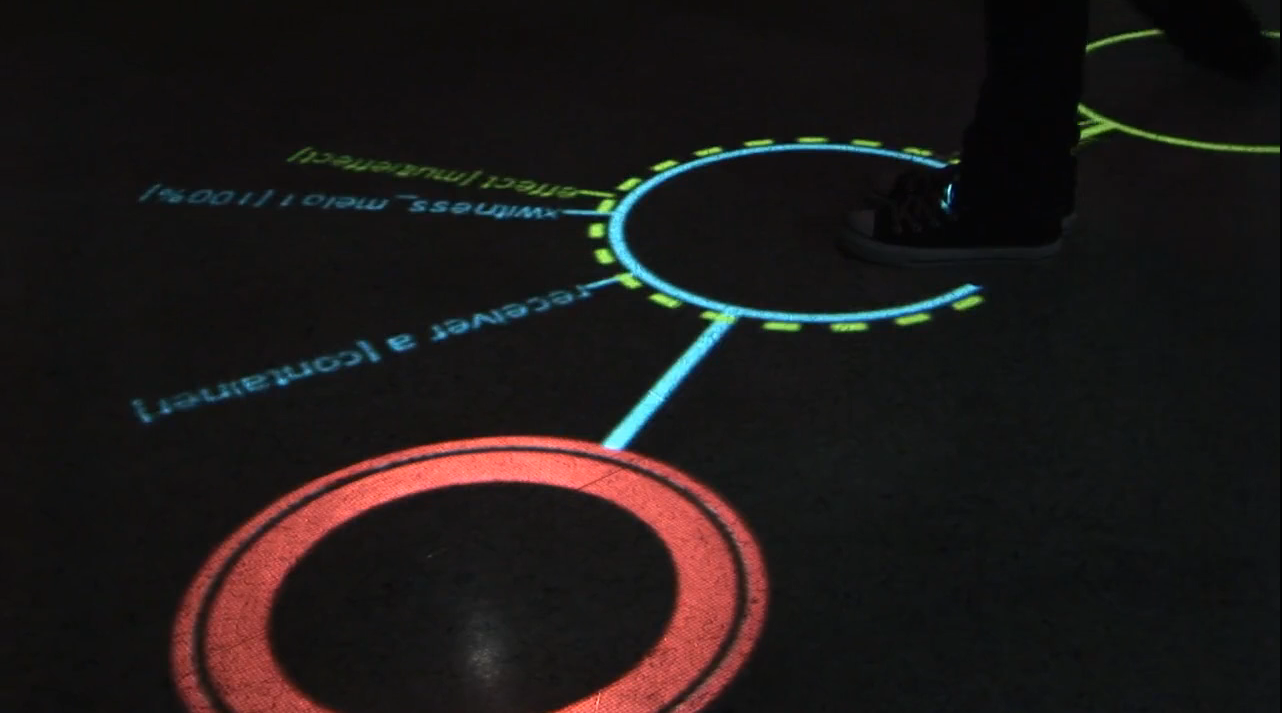

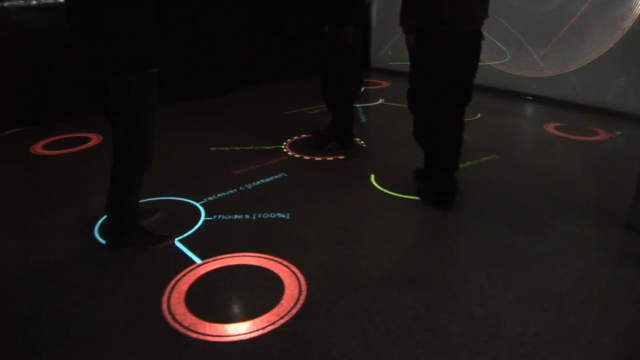

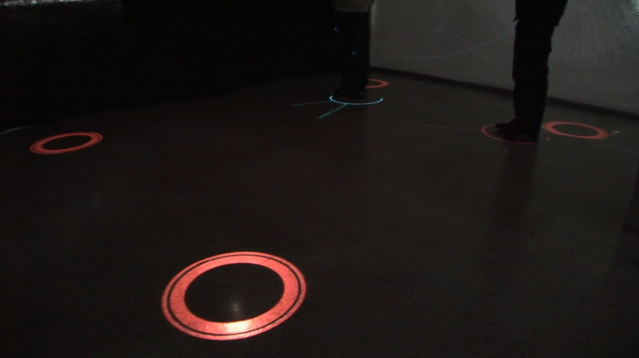

Mjuvi is a 2010 student project at h-da in which motion-tracked people generate music and visuals. Each user chooses a role: beat (red ring), melody (blue ring), effect (green ring) or instrument switch (orange ring). Together, the users control a music program via MIDI signals.
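To give a feel for how the four roles could translate into MIDI, here is a minimal C++ sketch. It is not the project's actual mapping: the `Role` enum, the `messageFor` function, the channel numbers, the drum note and the controller number are all assumptions chosen for illustration. Only the status bytes (0x90 note-on, 0xB0 control change, 0xC0 program change) come from the MIDI standard.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// The four roles from the text, named after their function (not their ring colour).
enum class Role { Beat, Melody, Effect, InstrumentSwitch };

// Assumed values, not taken from the project:
constexpr std::uint8_t kDrumChannel  = 9;   // channel 10 in 1-based counting (General MIDI percussion)
constexpr std::uint8_t kMainChannel  = 0;   // channel 1 for melody, effect and instrument
constexpr std::uint8_t kKickNote     = 36;  // General MIDI "Bass Drum 1"
constexpr std::uint8_t kModWheel     = 1;   // controller 1, modulation wheel
constexpr std::uint8_t kMelodyVel    = 100; // fixed velocity for melody notes

// Build the raw MIDI bytes for one role.
// `value` is a 7-bit number (0..127) whose meaning depends on the role:
// velocity for Beat, note number for Melody, controller value for Effect,
// program number for InstrumentSwitch.
inline std::vector<std::uint8_t> messageFor(Role role, std::uint8_t value) {
    assert(value < 128 && "MIDI data bytes are 7-bit");
    switch (role) {
        case Role::Beat:              // note-on on the drum channel
            return {static_cast<std::uint8_t>(0x90 | kDrumChannel), kKickNote, value};
        case Role::Melody:            // note-on with the given pitch
            return {static_cast<std::uint8_t>(0x90 | kMainChannel), value, kMelodyVel};
        case Role::Effect:            // control change on the assumed controller
            return {static_cast<std::uint8_t>(0xB0 | kMainChannel), kModWheel, value};
        case Role::InstrumentSwitch:  // program change is a two-byte message
            return {static_cast<std::uint8_t>(0xC0 | kMainChannel), value};
    }
    return {};
}
```

Under these assumptions, `messageFor(Role::Melody, 60)` yields the three bytes of a middle-C note-on on channel 1, which a music program listening on a MIDI port could play directly.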

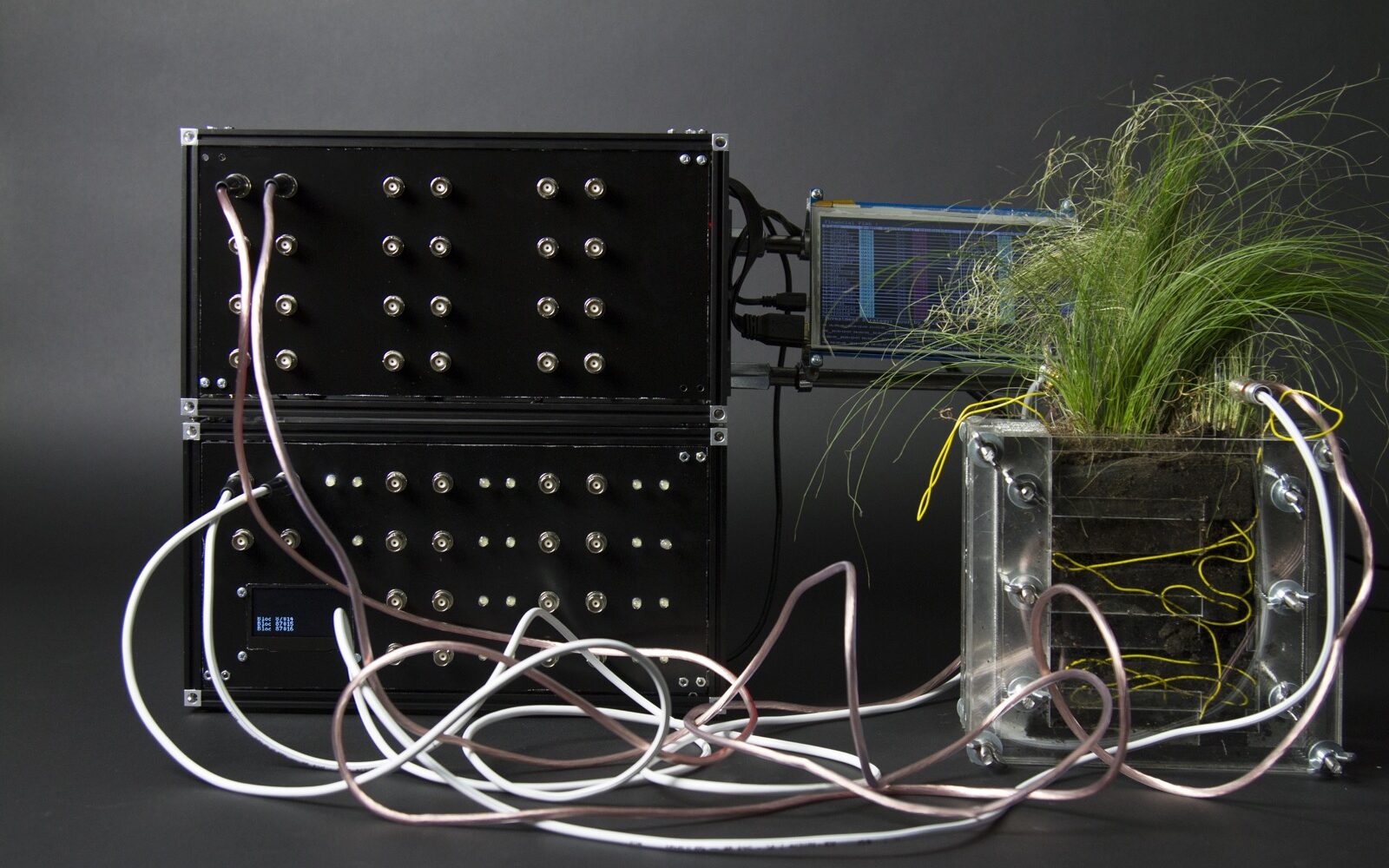

The tracking system is scalable: besides camera tracking it also accepts a Reactable as input, and it supports up to 20 cameras. Each camera is connected to a tracking server, which sends its tracking data to the main application over TCP. Tracking can use either ARToolKit or reacTIVision.
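The wire format between the tracking servers and the main application is not described, so the following is only a sketch of what one message might look like. It assumes a hypothetical newline-terminated text line of the form `cameraId markerId x y`; the `TrackedMarker` struct and the `encodeMarker` / `decodeMarker` helpers are invented for illustration. The only figure taken from the text is the limit of 20 cameras. The socket code itself is left out; the string would simply be written to and read from the TCP connection.

```cpp
#include <cassert>
#include <sstream>
#include <string>

// Upper bound on cameras, as stated in the text.
constexpr int kMaxCameras = 20;

// One tracked marker as seen by one camera (hypothetical layout).
struct TrackedMarker {
    int   cameraId;  // 0 .. kMaxCameras-1
    int   markerId;  // fiducial id reported by the tracker
    float x;         // normalised position, assumed 0..1
    float y;
};

// Serialise a marker into one text line ending in '\n'.
inline std::string encodeMarker(const TrackedMarker& m) {
    assert(m.cameraId >= 0 && m.cameraId < kMaxCameras);
    std::ostringstream out;
    out << m.cameraId << ' ' << m.markerId << ' ' << m.x << ' ' << m.y << '\n';
    return out.str();
}

// Parse one line back into a marker.
// Returns false (and leaves `result` untouched) if the line is malformed
// or names a camera outside the supported range.
inline bool decodeMarker(const std::string& line, TrackedMarker& result) {
    std::istringstream in(line);
    TrackedMarker m;
    if (!(in >> m.cameraId >> m.markerId >> m.x >> m.y)) {
        return false;
    }
    if (m.cameraId < 0 || m.cameraId >= kMaxCameras) {
        return false;
    }
    result = m;
    return true;
}
```

A line-based text format is easy to debug with a plain TCP client; a binary layout would be more compact, and which of the two the project used is not stated.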

For visual output, the main application acts as a server for VVVV, Processing or Java clients, which can then display objects, connections and played MIDI notes. The prototype uses four cameras as input and two Processing clients as output (ground projection and visuals projection). The main application and the tracking system were developed in C++.
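The server role described above amounts to fanning out the same events to every connected client. The sketch below shows that idea in-process only, with callbacks standing in for network clients; `VisualHub`, `VisualEvent` and `EventKind` are hypothetical names, and the string payload is a placeholder rather than the project's real protocol.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <string>
#include <utility>
#include <vector>

// The three kinds of things the text says clients can display.
enum class EventKind { Object, Connection, MidiNote };

// One event sent to the visual clients (payload format is an assumption).
struct VisualEvent {
    EventKind   kind;
    std::string payload;
};

// Minimal fan-out hub: every registered client receives every event.
class VisualHub {
public:
    using Client = std::function<void(const VisualEvent&)>;

    // Register a client; in a networked version this would wrap a socket.
    void addClient(Client client) {
        assert(static_cast<bool>(client) && "client callback must be callable");
        clients_.push_back(std::move(client));
    }

    // Deliver one event to all clients in registration order.
    void publish(const VisualEvent& event) const {
        for (const auto& client : clients_) {
            client(event);
        }
    }

    std::size_t clientCount() const { return clients_.size(); }

private:
    std::vector<Client> clients_;
};
```

In the prototype's setup, two such clients would be registered, one per projection, and each could decide for itself which event kinds to draw.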

(via Vimeo)