A Philosophy of Computer Art is a text that may interest some readers of creativeapplications.net, as it covers the intersection of computing and art, discusses some of the classics of interactive art, and does a lot of thinking about what art that uses computers actually is. In it Dominic Lopes does several things very well: he divides what he calls “digital art” from “computer art”, and he correlates the second term, which I’ll capitalize as Computer Art to mark that it’s his, with interactivity. He also articulates precise arguments for computer art as a new and valid form of art and defends his new term against some of the more tiresome attacks on it. As a quick example, consider Paul Virilio’s concerns about the debilitating effect of “virtual reality” on thought, which are more than a little reminiscent of Socratic concerns about the debilitating effect of writing on thought and point to an interesting conclusion: what we call thought is a technologically enhanced phenomenon. Note Friedrich Kittler: most human capacities are enhanced in some way or another with no great damage to the notion of “humanity” or “human”. It’s little more than a failure of imagination to thunder about how those augmentations debilitate the natural state of humans. Lopes also makes several extremely astute observations about the nature of interactivity and repeatability, comparing Rodin’s Thinker, Schubert’s “The Erlking”, packs of refrigerator magnet letters, and true interactivity in artwork, and concluding that interactive work has distinct characteristics. What he comes to, or what I read him as coming to, is this: a structured and rule-based experience is interactive. “A good theory of interaction in art speaks of prescribed user actions. The surface of a painting is altered if it’s knifed, but paintings don’t prescribe that they be vandalized.” Reduced even further: grammar plus entities plus aesthetics equals interactivity.
He also makes, to pick just a few, excellent arguments for the interpretive necessity of a view in automated displays and for the nature of technology as a medium, along with astute observations about the potential value of a computer art criticism.

But Lopes is also a philosopher, and philosophers seek to, among other things, define categories. Painting, sculpture, dance: these categorized mediums have all served us well over the years and so, the thinking goes, why not extend them and add another: Computer Art? I’m not so sure that the idea of Computer Art as, with an admittedly blunt reduction, “stuff on a computer that allows you to participate in it presenting itself” is particularly useful. My feeling is that this isn’t what interactive art or art made in collaboration with computers presently is, nor is it a meaningful extent of what it should be. The device is not the method, nor is it the extent of what makes this type of artwork rich and meaningful, and computers aren’t really the medium: algorithm and computation are the medium. In Form+Code, Casey Reas and Chandler McWilliams are right to point to Sol LeWitt as an earlier exponent of explicitly algorithmic art and to tie that into the current computational and algorithmic art-makers. The word “computer” originally meant one who did computation, that is, a person sitting with a slide rule, pen, and paper; it was only later applied to machines. The idea of computation is that it offloads a pre-existing human capacity and accentuates pre-existing things in the world. The person who calls their friend on their cellphone describes their action as “calling my friend”, not “using my cellphone”. The person using Ken Goldberg’s TeleGarden (a work mentioned frequently in APAC) is marveling at how they can collaboratively participate in creating a garden, not at how they can control a machine via a network. The point is not the device; the point is interactive computation, the extension of human aptitude and capacity, and the type of relationship with the world that it enables. Lopes’s insistence on the primacy of mediums and forms is doubly odd because in his finale he emphasizes that “computer art takes advantage of computational processing to achieve user interaction”.
Close, but not quite there.

I’m nitpicking, and admittedly so, because he’s looking at works that are unmistakably “Computer Art” by his definition of it. Computer Art is meant to be a measure of degrees, a spectrum. One looks at Scott Snibbe’s work and sees a computer system and an interactivity. Golden Calf, a work Lopes references multiple times, sits very firmly in the Computer Art circle of the Venn diagram of machine-human art-making experience. These are the easy examples, those that lend themselves most easily to the account of interaction in artworks that he describes. But I’m nitpicking for a reason: the definition is painfully limiting. It says that computer art consists of things that run on a computer, with which I interact while observing a display through which I consequently understand how my actions are interpreted.

This seems naïve about the ways that computation actually functions in our lives and an oversimplification of how people think that computation can function in their lives. It also seems reductive of what forms art can take and how the conversation that is art-making can evolve. For instance: Wafaa Bilal’s Domestic Tension, a piece far more indebted to performance art than to sculptural installation. There’s quite a bit more at play there than my seeing the manipulation of pixels, and there’s more to my understanding of how this piece functions and signifies than understanding that I’m speaking with and through a computer.

Another example: Men in Grey. Is this interactive art? Not in many senses: I never interacted with it, nor would I say that interacting with it is necessary to understand and experience it. It has far more in common with Situationist/Lettrist works than with installation art, and yet it is computer based, one does interact with it by well-known protocols and through well-established rules, and it has a display. It uses computation and networks, and yet it’s not about manipulating a computer or a network to create display elements, nor is that at the forefront of it. The same is true of EyeWriter, Natural Fuse, and a slew of other works and projects that I find most meaningful and engaging.

In the philosophy of aesthetics, philosophically strong categories are sometimes preferred over meaningful categories because strong categories are easier to defend. Painting as a category of artwork is not deeply meaningful in many ways (consider the question “do you like paintings?”), yet determining how much something is and is not a painting is quite easy and categorically meaningful. “Minimal” as a style (one’s furniture or aesthetic) or strategy (“minimalism” with attendant connotation) is a much more meaningful designation because it has historical precedents and significations, and because it extends beyond a particular category to cover a manner of production and reception. That is, it describes communicative strategies, something Lopes indicates is one of the goals of the interactivity in Computer Art. However, “minimal” is a terrifically difficult thing to pin down into categories, and yet it is descriptive, historical, and fundamentally meaningful as a description of an aesthetic practice. To play a small linguistic game, describing speech as “he spoke with words” is a bit odd; to describe it as “he spoke with silence” makes more sense because one does not normally make speech with silence. Likewise, Computer Art seems primarily to describe a situation of abnormality, “this is art that involves you interacting with a computer”, that I believe few people actually find particularly abnormal and that will be less meaningful, if not nearly meaningless, in the near future.

Lopes’s text is an excellent opening of what I hope will be an interesting discussion that attempts to unravel the relationship between new forms of narrative, expression, and communication and the previous ones. He weaves together an excellent web of references, from Umberto Eco to Clement Greenberg to Lev Manovich, and references a wisely chosen group of artworks to bolster his argument. A Philosophy of Computer Art sets an example in its handling of complex arguments against the sort of odd disqualifications that are occasionally leveled against Computer Art. Its insistence on categorical logic and mediums as definitive categories is a small aberration in what is otherwise an excellent text and the opening of a new type of discourse about what creative computing might possibly mean.

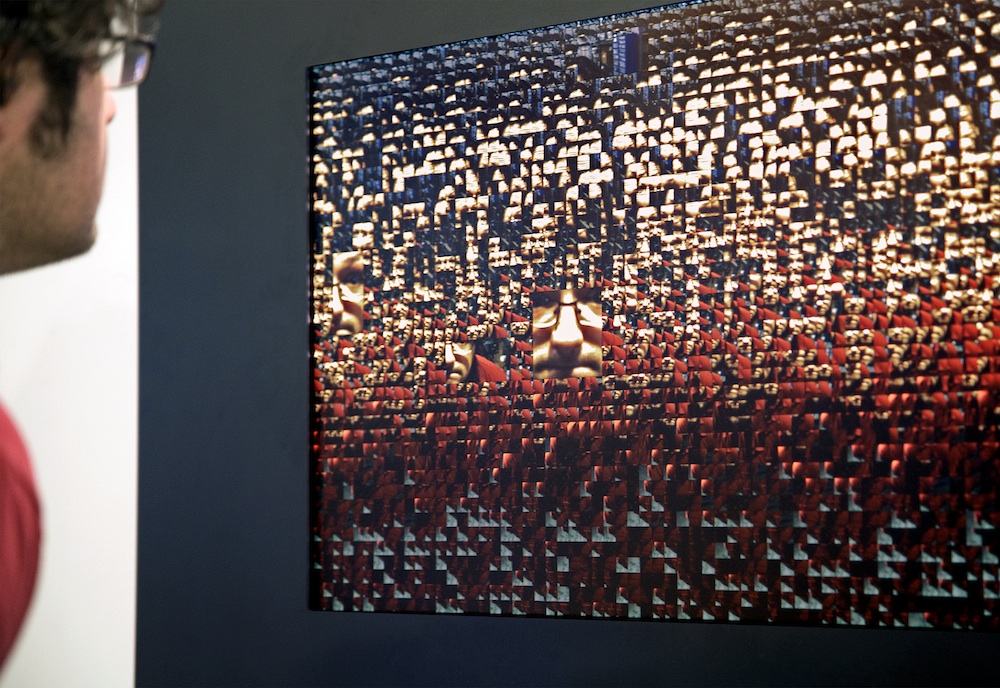

Rafael Lozano-Hemmer “Blow Up”, 2007

Daniel Rozin “Wooden Mirror”, 1999

Scott Snibbe “Boundary Functions”, 1998

Camille Utterback & Romy Achituv “Text Rain”, 1999

—

Joshua Noble is a writer, designer, and programmer based in Portland, Oregon and New York City. He’s the author of, most recently, Programming Interactivity and the forthcoming book Research for Living.

—