Raspberry Pi is a very exciting, low-cost computer aimed at the educational market. With a starting price around $25, a very small form factor and exceptional multimedia capabilities, it is very attractive for creative computing projects. openFrameworks already runs on a multitude of platforms and the Raspberry Pi is one of the latest of these.

In March 2013 we held a workshop at the Resonate 2013 festival where participants looked at how to set up a comfortable work environment, covered the particulars of running openFrameworks on the Raspberry Pi and went through some examples that play to the Pi’s strengths.

The purpose of this guide is to help you gain familiarity with the Raspberry Pi and a bit of Linux, and to give you a head start in programming for one of the most exciting computing platforms out there.

Some programming experience and familiarity with openFrameworks are required. Although efforts have been made to make this guide easy to follow, please note that it was originally designed to be taught in a workshop.

We won’t be delving too deeply into any one topic as some of these topics (Linux, shaders) are huge, but rather we aim to provide a starting point and a few example apps that can be built upon.

Raspberry Pi Hardware

The Pi uses a System on a Chip (SoC) originally intended for set-top boxes. It has a fairly powerful Graphics Processor (GPU) that supports modern graphics technologies like OpenGL ES 2.0 (shaders!) and OpenMax (hardware accelerated audio/video decoding). Compared to the GPU, its processor (CPU) is less powerful, as it wouldn’t be expected to do much work in a set-top box scenario.

openFrameworks

As described on its homepage, openFrameworks is an “open source C++ toolkit for creative coding.” With version 0.8.0, openFrameworks has added support for the Raspberry Pi. A key benefit of using openFrameworks on the Raspberry Pi is that it provides a cross-platform C++ interface to graphics, audio, video and networking, as well as access to many popular libraries such as OpenCV and OSC.

Getting set up

To get started with this guide you will need to go through the steps outlined in the Getting Started guide on the openFrameworks website. This will walk you through downloading openFrameworks as well as setting up the Raspberry Pi with the optimal configuration for working with openFrameworks.

There are many ways to develop for your Pi but a good cross-platform workflow is:

- Using Samba File Sharing to allow browsing the Pi’s SD Card over a network (setup guide)

- Editing files over the network using a text editor (Sublime Text 2 is a good cross-platform editor)

- Using a terminal window via SSH to send commands to compile, run and exit apps.

As openFrameworks is cross-platform, there is nothing stopping you from including project files for Windows/OSX/Desktop Linux, working on your project on your main machine and syncing up the source files to the Pi to compile and test there from time to time.

Sample Projects

The code and projects for this tutorial can be found at https://github.com/openFrameworks-arm/RaspberryPiGuideCAN

The following commands will download and extract the projects into the correct location. We are going to assume that you followed the OF guide to the letter (particularly that the openFrameworks folder is located at /home/pi/openFrameworks); otherwise just substitute your own directory paths.

$ cd /home/pi/openFrameworks/apps/

$ wget https://github.com/openFrameworks-arm/RaspberryPiGuideCAN/archive/master.zip

$ unzip master.zip

$ mv RaspberryPiGuideCAN-master CANApps

You should now have a directory named CANApps that contains the tutorial’s projects.

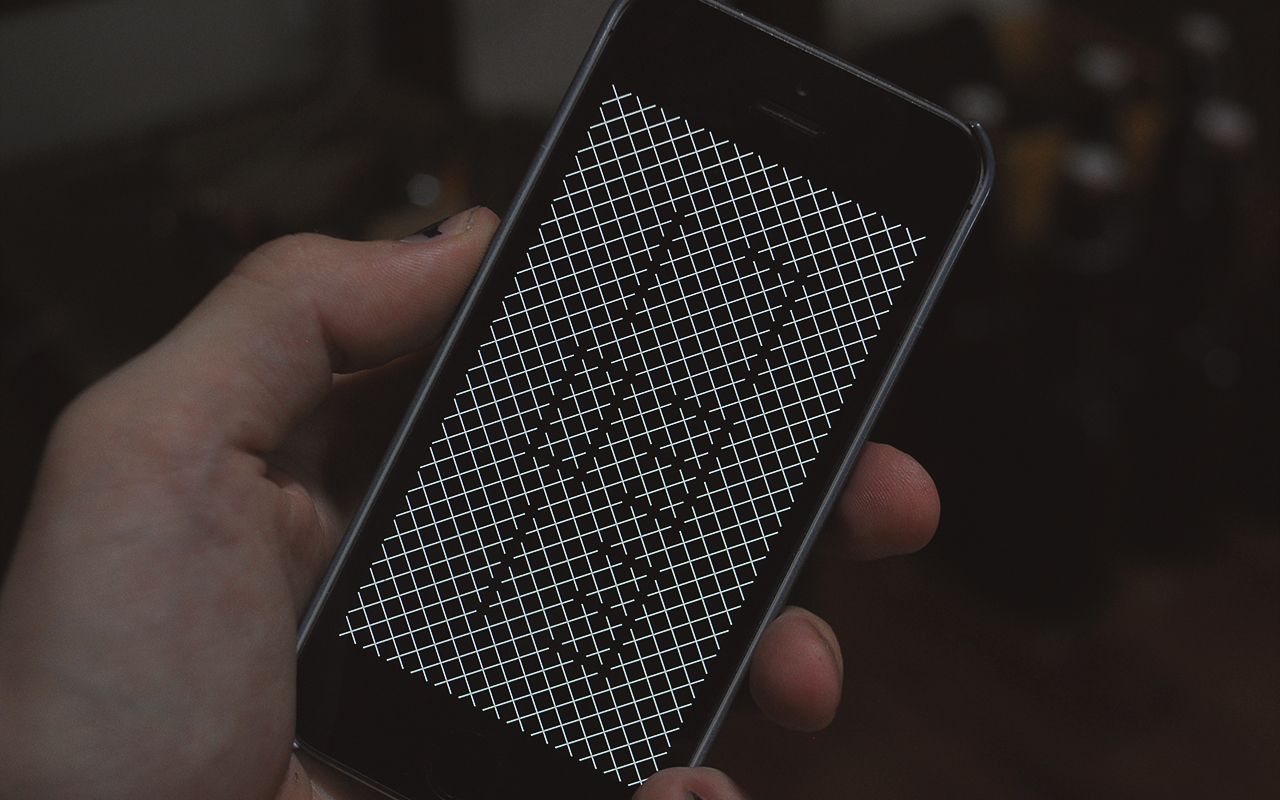

Hello World

Now that you have openFrameworks compiled on your Pi let’s get a Hello World program up and running. As we’re using openFrameworks our Hello World looks just as sexy as you would expect.

This Hello World app does not use shaders, but works by using a simple mesh and a feedback buffer (drawing the old frame over the next) to draw the distortion. We’re not going to go into the details of the technique in this tutorial as it’s not Pi specific, but we want to highlight that it’s exactly the kind of thing the Pi is good at. We’re keeping most of the work on the fast graphics card, using the CPU only to generate a distorted, screen-sized grid every frame, at a resolution of 30×30 in this case.
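To give a feel for how little the CPU has to do here, below is a minimal sketch of generating such a grid in plain C++, without openFrameworks. The names Point2 and makeDistortedGrid and the sine/cosine distortion are our own assumptions for illustration; the actual HelloWorld app may well distort its grid differently.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for ofVec2f so the sketch compiles without openFrameworks.
struct Point2 {
    float x;
    float y;
};

// Build a cols x rows grid of points covering a width x height screen, then
// nudge every point with a time-varying sine/cosine offset (assumed distortion).
std::vector<Point2> makeDistortedGrid(int cols, int rows, float width, float height,
                                      float time, float amplitude) {
    assert(cols > 1 && rows > 1);
    std::vector<Point2> grid;
    grid.reserve(static_cast<std::size_t>(cols) * rows);
    for (int y = 0; y < rows; ++y) {
        for (int x = 0; x < cols; ++x) {
            // Undistorted position: spread the points evenly across the screen.
            float px = width * x / (cols - 1);
            float py = height * y / (rows - 1);
            // Distortion: small offsets that vary with position and time.
            px += std::sin(time + y * 0.3f) * amplitude;
            py += std::cos(time + x * 0.3f) * amplitude;
            grid.push_back({px, py});
        }
    }
    return grid;
}
```

At 30×30 that is only 900 positions to recompute per frame, for example with makeDistortedGrid(30, 30, 1280.0f, 720.0f, time, 10.0f), which is a light load even for the Pi’s CPU.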

This isn’t to say that the CPU on the Pi is useless, however. It’ll happily do a couple of passes on the pixels of a 320×240 video, for instance, as we will see later. To compile and run this app yourself, run these commands:

$ cd /home/pi/openFrameworks/apps/CANApps/HelloWorld

$ make

$ make run

You should now be seeing your own version of the app on your screen, silky smooth! If so, we know everything is working as it should.

Note: To exit the app, hit CTRL+C.

Shaders

Shaders on the Pi are very useful for graphics/image processing as they allow you to take advantage of the faster GPU. The new openFrameworks Shader tutorial is a great resource for getting started with shaders on the Desktop versions of OF.

The Raspberry Pi is a bit different as it requires OpenGL ES 2.0 compatible shaders, but a nice feature of OpenGL ES 2.0 is that it is the same API that WebGL is based on. This means that many shaders you find on sites like ShaderToy can be used with openFrameworks on the Raspberry Pi.

The Raspberry Pi uses OpenGL ES, the mobile version of OpenGL. You can either use an OpenGL ES 1.1 context if you don’t want shaders, or you can use OpenGL ES 2.0. Why would you ever want to use an OpenGL ES 1.1 context? If you use an OpenGL ES 2.0 context, you are abandoning the fixed function pipeline altogether. Outside of openFrameworks, you would need to write a shader for everything. Want a red triangle on screen? You need to write a shader that writes red pixels (or takes a colour as an input) and draw a triangle with it. Want a textured triangle? You would need to write a shader that reads from the texture and draws the appropriate colour from the texture to screen.

Thanks to the openFrameworks crew you won’t really notice any of this, as openFrameworks handles this complexity with internal shaders for the drawing operations. If you stick to the built-in openFrameworks drawing functions (e.g. ofCircle, ofRect, ofRectRounded, ofEllipse) things will be handled behind the scenes. For your own graphics/post-processing shaders, openFrameworks provides a very nice ofShader class that makes everything much easier and cleaner.

ShaderSimple

Let’s have a look at some shaders. Run these commands to compile and run our example:

$ cd /home/pi/openFrameworks/apps/CANApps/ShaderSimple

$ make

$ make run

Hit CTRL+C to exit the app and let’s break this down a bit. To start with, if you want to use shaders on the Pi you need to tell OF to enable the programmable renderer, which supports OpenGL ES 2.0. With your text editor, open up ShaderSimple/src/main.cpp. Here we have a line before ofSetupOpenGL that tells our program to use the programmable renderer, but only if we are on an OpenGL ES platform like the Pi.

#ifdef TARGET_OPENGLES

ofSetCurrentRenderer(ofGLProgrammableRenderer::TYPE);

#endif

ofSetupOpenGL(1280, 720, OF_WINDOW);

Shaders are quite literally programs running on your GPU, so for them to be useful we need to pass in variables for them to operate on. There are different kinds of data we can pass in, but let’s start with a simple floating point number, in this case the elapsed seconds.

shader.begin();

shader.setUniform1f("time", ofGetElapsedTimef() ); // pass in the elapsed time as a variable

ofRect(0, 0, ofGetWidth(), ofGetHeight() ); // we need to draw something for the shader to run on those pixels

shader.end();

Now let’s have a look at the shader files. As we won’t be doing anything to the vertices we can ignore the vertex shader for now; it will simply transform the vertices by the ModelViewProjection matrix.

Let’s have a look at the simplest possible fragment shader I could think of. It will just pulse the screen between black and white.

precision highp float; // this will make the default precision of any float variable high

uniform float time;

void main()

{

// Pulse screen

float col = (cos(time*2.0)+1.0)*0.5;

gl_FragColor = vec4( col, col, col, 1.0 );

}

Whatever we set gl_FragColor to will be the colour of the pixel on screen. Here we are simply using cos() to turn our time value (sped up to twice as fast) into a number going between 0 and 1, making a grey colour out of that and setting gl_FragColor to it.
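If you want to convince yourself of the 0 to 1 range without running anything on the GPU, the same expression can be evaluated on the CPU. This is only a sketch in plain C++; pulse and toGreyLevel are hypothetical helper names of ours and are not part of the example project.

```cpp
#include <cassert>
#include <cmath>

// CPU-side copy of the expression used in the fragment shader above.
float pulse(float time) {
    return (std::cos(time * 2.0f) + 1.0f) * 0.5f;
}

// Convert a 0..1 brightness into an 8-bit grey level (0 = black, 255 = white).
int toGreyLevel(float col) {
    assert(col >= 0.0f && col <= 1.0f);
    return static_cast<int>(std::lround(col * 255.0f));
}
```

Since cos() always stays between -1 and 1, adding 1 and halving keeps the result between 0 and 1. Multiplying the time by 2.0 means a full black-to-white-to-black cycle takes π seconds (roughly 3.14s).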

There are some other simple shaders commented out in the .frag file to have a look at.

Video on the Raspberry Pi

Typically when using video with openFrameworks you use the ofVideoPlayer class. ofVideoPlayer runs just fine on the Pi and is great for playing smaller videos. It also provides access to the pixels, which is often necessary for operations such as those done with OpenCv. Let’s take a look at an example that does just this.

OpenCv Example

This example is very similar to the opencvExample that ships with OF. We’ve just changed the drawing a little bit so it doesn’t fall outside of your screen’s edges (the Pi likes to eat some pixels along the borders on TVs) and we have also replaced the source video with one compressed using H.264. H.264 and mp4 will work with ofVideoPlayer on the Pi out of the box.

To run this example use these commands:

$ cd /home/pi/openFrameworks/apps/CANApps/opencvExample/bin

$ ./opencvExample.bin

Note: If you are not using a cross-compiler, projects that use OpenCv (and later OpenMax) take a while to compile. For all of our projects we have provided pre-compiled binaries but the source is also available so you can compile for yourself if you like.

You should now see the Pi doing a few operations per frame on all the pixels in a 320×240 video, hovering around 60fps without any overclocking. It’s not going to replace bigger machines for serious computer vision work, but for the price you could do a lot worse. ofVideoPlayer is providing us with pixel data from the video and passing it to OpenCv. OpenCv compares a background matte image with the current video frame and runs a threshold operation to create a simplified difference image. This difference image is then passed through a contour finder to give you a shape that outlines the hand and arm.

Note: Compared to the Desktop, rendering type (text) can slow down your apps on the Pi. In our app we have a key shortcut (‘t’) to toggle label drawing so you can get a bit more performance.
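The difference-and-threshold step described above can be sketched in a few lines of plain C++. Note that this is not the OpenCv code the example uses; it is a hand-rolled illustration working on raw greyscale buffers, and differenceMask is a hypothetical name of ours.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstdlib>
#include <vector>

// Compare a greyscale frame against a stored background and return a binary
// mask: 255 where the pixel changed by more than `threshold`, 0 elsewhere.
std::vector<std::uint8_t> differenceMask(const std::vector<std::uint8_t>& background,
                                         const std::vector<std::uint8_t>& frame,
                                         int threshold) {
    assert(background.size() == frame.size());
    std::vector<std::uint8_t> mask(frame.size());
    for (std::size_t i = 0; i < frame.size(); ++i) {
        // Absolute difference between the current pixel and the background.
        int diff = std::abs(static_cast<int>(frame[i]) - static_cast<int>(background[i]));
        mask[i] = diff > threshold ? 255 : 0;
    }
    return mask;
}
```

For a 320×240 video this loop touches 76,800 pixels per frame, which is the kind of workload the Pi’s CPU copes with comfortably. A contour finder would then trace the outline of the white regions in the mask.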

ruttEtraExample

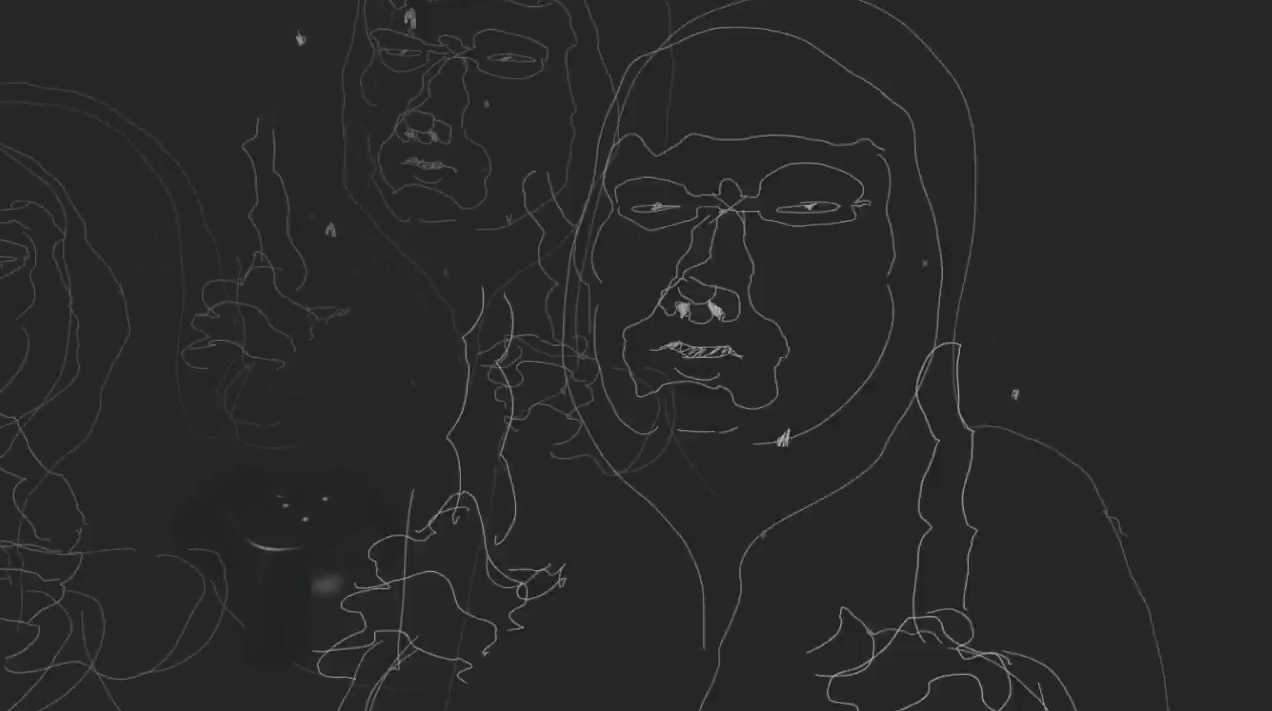

Manipulating video pixels is a cornerstone technique of so-called creative programming. Let’s use our ofVideoPlayer and openFrameworks’ ofMesh to expand this into 3D.

To keep things running as smoothly as possible we’re going to use a video that is 160×120. With a video of 320×240 we would be looking at around 18-20fps while the video is playing (it’s nice and smooth once it stops), while a smaller video gives us a nice 30 fps if using the entire image and 60fps if we skip half the rows.
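To see why the video size and the row skipping matter so much, here is a minimal sketch of the kind of per-frame work involved: turning every pixel’s brightness into the depth of a 3D point. It is plain C++ rather than the project’s ofMesh code, and Vertex3, buildScanlineVertices and the depthScale parameter are assumptions of ours for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical stand-in for ofVec3f so the sketch compiles without openFrameworks.
struct Vertex3 {
    float x;
    float y;
    float z;
};

// Turn a greyscale image into 3D points: x/y come from the pixel position and
// z from the pixel brightness. rowStep = 2 uses every second row only.
std::vector<Vertex3> buildScanlineVertices(const std::vector<std::uint8_t>& grey,
                                           int width, int height,
                                           int rowStep, float depthScale) {
    assert(width > 0 && height > 0 && rowStep > 0);
    assert(grey.size() == static_cast<std::size_t>(width) * height);
    std::vector<Vertex3> vertices;
    for (int y = 0; y < height; y += rowStep) {
        for (int x = 0; x < width; ++x) {
            float brightness = grey[static_cast<std::size_t>(y) * width + x] / 255.0f;
            vertices.push_back({static_cast<float>(x), static_cast<float>(y),
                                brightness * depthScale});
        }
    }
    return vertices;
}
```

A 160×120 frame yields 19,200 points, or 9,600 when skipping every other row, whereas a 320×240 frame would yield 76,800. That factor of four to eight in CPU work is in line with the frame rate differences quoted above.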

To run this example use these commands:

$ cd /home/pi/openFrameworks/apps/CANApps/ruttEtraExample/bin

$ ./ruttEtraExample.bin

You should see something like this:

Hardware accelerated video

For shader operations we may want to play something a bit more high definition. Shaders only require texture access (as opposed to pixels), so we can use an addon for openFrameworks (ofxOMXPlayer) to provide this very quickly at high resolutions.

OpenMax

The Raspberry Pi’s GPU provides support for OpenMax (OMX), a technology that enables hardware decoding for video up to 1080p. OMX has the capability to bypass the processor and feed video directly to the screen or a texture, freeing up the CPU for other operations. You may have already been introduced to OMX as the Raspbian distribution includes a program called omxplayer. omxplayer was originally developed as an implementation of OpenMax accelerated video for the open source media player XBMC on the Raspberry Pi. In our projects we will be using the addon ofxOMXPlayer, which provides some of omxplayer’s functionality to openFrameworks.

Formats for Hardware accelerated video

With hardware acceleration you are limited to codecs the hardware supports.

Raspberry Pi firmware/ofxOMXPlayer updates may enable more formats in the future but currently this combo provides the most stable results:

- H.264

- Audio AAC 48k 16 bit

The free MPEG Streamclip is a great, cross-platform way to convert your source video for playback.

ofxOMXPlayer

ofxOMXPlayer is able to run in two different modes, direct-to-screen and textured.

Direct-to-Screen

In direct-to-screen mode videos are able to run smoother at higher resolutions (1080p is fine). The tradeoff is that you are limited to running video full screen and have no access to textures for shader operations, etc. The player essentially renders directly to the screen and bypasses your graphics canvas. However, you are still able to do other background operations (e.g. talk to a sensor, download content from the web, etc).

omxPlayerNonTextured

Let’s take a look at an example of direct-to-screen mode. Like the opencvExample, source code is included but to save some compilation time let’s run a pre-compiled binary:

$ cd /home/pi/openFrameworks/apps/CANApps/bin/omxPlayerNonTextured

$ ./omxPlayerNonTextured.bin

You should see a 720p, AAC encoded sample video playing full screen at full frame rate! If you have your Pi hooked up to something like an LCD TV you should also hear sound that is passed through HDMI. As mentioned, a lot of the Pi’s system resembles the technology in set-top boxes like the Roku and Apple TV, and this is a way to harness some of this in your openFrameworks based apps.

omxPlayerTextured

So beyond simple playback let’s do something that allows more creativity with the output.

Textured mode

With textured mode you are able to use an OMX-provided texture and continue to use openFrameworks drawing operations, apply shaders and do background tasks. We can’t get immediate access to the pixels, as that requires the slower CPU to step in and read the texture back into pixels. However, shaders and drawing operations don’t require pixels so we can still do lots of interesting things. 1080p textures are possible in this mode but typically you will get smoother playback up to 720p.

Like the previous example a compiled binary is provided. Let’s start by running it with these commands:

$ cd /home/pi/openFrameworks/apps/CANApps/bin/omxPlayerTextured

$ ./omxPlayerTextured.bin

This time we are able to draw the texture full screen as well as a resized version in the lower left corner. We are also able to use other OF drawing functionality to overlay information. With the exception of pixel access this is very similar to the functionality you would get from the standard ofVideoPlayer on a Desktop machine. Let’s now walk through some of the code and see some of our options.

Here is the code in testApp.cpp that creates and starts the player:

void testApp::setup() {

//The full path is at /home/pi/openFrameworks/apps/CANApps/videos/720p.mov

string videoPath = ofToDataPath("../../../videos/720p.mov", true);

ofxOMXPlayerSettings settings;

settings.videoPath = videoPath;

//useHDMIForAudio, default:true,

//set to false if you have headphones

//or computer speakers connected to the mini-jack

settings.useHDMIForAudio = true;

//enableTexture. default:true

//This is set to false for our omxPlayerNonTextured example

settings.enableTexture = true;

//enableLooping, default:true

//option for looping

settings.enableLooping = true;

//enableAudio, default:true

//Set to false to save some GPU resources

//if you don't require audio

settings.enableAudio = true;

//Send the player the config

omxPlayer.setup(settings);

}

ofxOMXPlayerSettings is an object created and passed to the player. The idea behind this design pattern is to allow a large number of parameters to be set at the player’s initialization while avoiding a long list of arguments.

Note: with all the ofxOMXPlayer examples you may drop one of your own videos into the project’s bin/data/videos folder. There is a bit of helper code in testApp that searches for a “videos” directory and picks the first file listed. This can be useful for testing out different sizes and compression settings without recompiling.
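The settings-object pattern itself is easy to sketch outside of the addon. The SketchPlayerSettings and SketchPlayer types below are hypothetical and written only for this illustration; they borrow the field names from the code above but are not the real ofxOMXPlayer classes.

```cpp
#include <cassert>
#include <string>

// Hypothetical settings struct: every option has a sensible default, so the
// caller only overrides what differs for a given project.
struct SketchPlayerSettings {
    std::string videoPath;
    bool useHDMIForAudio = true;
    bool enableTexture = true;
    bool enableLooping = true;
    bool enableAudio = true;
};

// Hypothetical player: one setup() argument instead of a long parameter list.
class SketchPlayer {
public:
    void setup(const SketchPlayerSettings& newSettings) {
        assert(!newSettings.videoPath.empty());  // a video path is the one required field
        settings = newSettings;
    }

    bool rendersToTexture() const { return settings.enableTexture; }
    bool playsAudio() const { return settings.enableAudio; }

private:
    SketchPlayerSettings settings;
};
```

A silent, direct-to-screen configuration would then only need to set videoPath, enableTexture = false and enableAudio = false before calling setup(). New options can be added to the struct later without breaking existing calls.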

Hardware accelerated Video with Shaders

With our omxPlayerTextured project we have seen this little computer provide textures from a 720p video, and with our ShaderSimple example we have used shaders in OF. Let’s combine the two.

Again let’s run a pre-compiled binary to see the end result by using these commands:

$ cd /home/pi/openFrameworks/apps/CANApps/bin/omxPlayerShaders

$ ./omxPlayerShaders.bin

You should see something like this:

With this project we will be using a Frame Buffer Object (FBO) with a shader. FBOs are like a canvas that we can quickly draw onto and manipulate over and over. In this case we will be filling it with our video texture, post-processing it with a shader and later drawing some info text on top of it. To use an FBO (ofFbo in openFrameworks) we need to set aside some memory for it with fbo.allocate(ofGetWidth(), ofGetHeight()). We then load our shader and, just as we wrap shader work in shader.begin() and shader.end(), we wrap drawing into the FBO in fbo.begin() and fbo.end(). We do this in update() as we are not drawing to the screen yet.

Note: On other platforms you may be able to successfully do the FBO/Shader operations in draw(). Currently on the RPi/OF 0.8.0 using an FBO/Shader combo in update() gives the most reliable output.

fbo.begin(); //we start filling our FBO

shader.begin(); //we enable our shader

//Here we pass our shader some changing values

//We pass our texture id

shader.setUniformTexture("tex0", omxPlayer.getTextureReference(), omxPlayer.getTextureID());

//We give it an incrementing value to use

shader.setUniform1f("time", ofGetElapsedTimef());

//And a resolution

shader.setUniform2f("resolution", ofGetWidth(), ofGetHeight());

//We then send our texture that kicks it off

omxPlayer.draw(0,0);

shader.end(); //we disable our shader

fbo.end(); //and stop filling the fbo

Most of the complex work is during the shader processing and is done inside the shaderExample.frag located in bin/data.

precision highp float; // this will make the default precision high

varying vec2 texcoord0; // we passed this in from our vert shader

uniform sampler2D tex0; // our texture reference

uniform vec2 resolution; // width and height that we are working with

uniform float time; // a changing value to work with

void main()

{

//We scale our time value

mediump float newTime = time * 2.0;

//And then use the time with sin and cosine to make new pixel positions

vec2 newTexCoord;

newTexCoord.s = texcoord0.s + (cos(newTime + (texcoord0.s*20.0)) * 0.01);

newTexCoord.t = texcoord0.t + (sin(newTime + (texcoord0.t*20.0)) * 0.01);

mediump vec2 texCoordRed = newTexCoord;

mediump vec2 texCoordGreen = newTexCoord;

mediump vec2 texCoordBlue = newTexCoord;

texCoordRed += vec2( cos((newTime * 2.76)), sin((newTime * 2.12)) )* 0.01;

texCoordGreen += vec2( cos((newTime * 2.23)), sin((newTime * 2.40)) )* 0.01;

texCoordBlue += vec2( cos((newTime * 2.98)), sin((newTime * 2.82)) )* 0.01;

//we apply the new positions to each color channel of our RGBA texture

mediump float colorR = texture2D( tex0, texCoordRed ).r;

mediump float colorG = texture2D( tex0, texCoordGreen).g;

mediump float colorB = texture2D( tex0, texCoordBlue).b;

mediump float colorA = texture2D( tex0, texCoordBlue).a;

mediump vec4 outColor = vec4( colorR, colorG, colorB, colorA);

//we then output our pixels

gl_FragColor = outColor;

}
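To get a feel for how far the shader actually moves each sample, the coordinate maths can be replayed on the CPU. The sketch below copies the expressions from the shader above into plain C++; TexCoord, wobble and channelShift are hypothetical names of ours and nothing here runs on the GPU.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical stand-in for a GLSL vec2 holding texture coordinates.
struct TexCoord {
    float s;
    float t;
};

// Same wobble as newTexCoord in the shader: a small sine/cosine offset that
// depends on both the time and the coordinate itself.
TexCoord wobble(TexCoord in, float time) {
    assert(in.s >= 0.0f && in.s <= 1.0f && in.t >= 0.0f && in.t <= 1.0f);
    float newTime = time * 2.0f;
    return {in.s + std::cos(newTime + in.s * 20.0f) * 0.01f,
            in.t + std::sin(newTime + in.t * 20.0f) * 0.01f};
}

// Same per-channel shift as texCoordRed/Green/Blue: each colour channel is
// sampled from a slightly different position.
TexCoord channelShift(TexCoord wobbled, float time, float freqS, float freqT) {
    float newTime = time * 2.0f;
    return {wobbled.s + std::cos(newTime * freqS) * 0.01f,
            wobbled.t + std::sin(newTime * freqT) * 0.01f};
}
```

Both offsets are scaled by 0.01, so no channel is ever sampled more than 0.02 (2% of the texture) away from its original coordinate on either axis. Because red, green and blue use different frequencies (2.76/2.12, 2.23/2.40 and 2.98/2.82), they drift apart slightly, which produces the colour fringing in the output.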

Later in our draw() function we can then draw the FBO at any size we want. We first draw it as the background layer and then a scaled version in the lower right corner:

void testApp::draw(){

fbo.draw(0, 0);

//draw a smaller version in the lower right

int scaledHeight = fbo.getHeight()/4;

int scaledWidth = fbo.getWidth()/4;

fbo.draw(ofGetWidth()-scaledWidth, ofGetHeight()-scaledHeight, scaledWidth, scaledHeight);

}
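The placement arithmetic used for the small copy can be checked in isolation. The following is a sketch in plain C++ with hypothetical names (Rect, lowerRightThumbnail) that mirrors the integer maths of the draw() code above, with the divisor of 4 turned into a parameter.

```cpp
#include <cassert>

// Hypothetical rectangle type: top-left corner plus size, in pixels.
struct Rect {
    int x;
    int y;
    int width;
    int height;
};

// Shrink the source by `divisor` and pin it to the lower right corner of the
// screen, using the same integer arithmetic as the draw() code above.
Rect lowerRightThumbnail(int screenWidth, int screenHeight,
                         int sourceWidth, int sourceHeight, int divisor) {
    assert(divisor > 0);
    int scaledWidth = sourceWidth / divisor;
    int scaledHeight = sourceHeight / divisor;
    return {screenWidth - scaledWidth, screenHeight - scaledHeight,
            scaledWidth, scaledHeight};
}
```

For a 1280×720 window and an FBO of the same size, lowerRightThumbnail(1280, 720, 1280, 720, 4) gives a 320×180 rectangle whose top-left corner sits at (960, 540).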

In conclusion

openFrameworks, Linux and the Raspberry Pi are all very deep subjects and hopefully this article provides a starting point for exploration and some insight into the unique capabilities that this combination has to offer. Also check out some of our projects and examples that were presented at the Resonate 2013 workshop; these include Multiscreen applications, Networked OpenCv, GPIO, and OpenNI integration. We have been collecting projects and examples from workshops and articles like this here: https://github.com/openFrameworks-arm

Keep an eye on the GitHub page for updates and feel free to open up issues if something isn’t working as expected or described.

A big thanks to the Open Source Community

The openFrameworks/Raspberry Pi project is composed of many, many Open Source contributors and would not have been possible without the extensive support of:

Christopher Baker @bakercp, Arturo Castro @artur0castro, Tim Gfrerer @tgfrerer, Lukasz Karluk @julapy, Damian Stewart @damian0815

And all of the openFrameworks contributors

https://github.com/openframeworks/openFrameworks/graphs/contributors

OpenMax addons would not have been possible without these Open Source contributions: omxplayer, xbmc

Authors: Andreas Müller and Jason Van Cleave