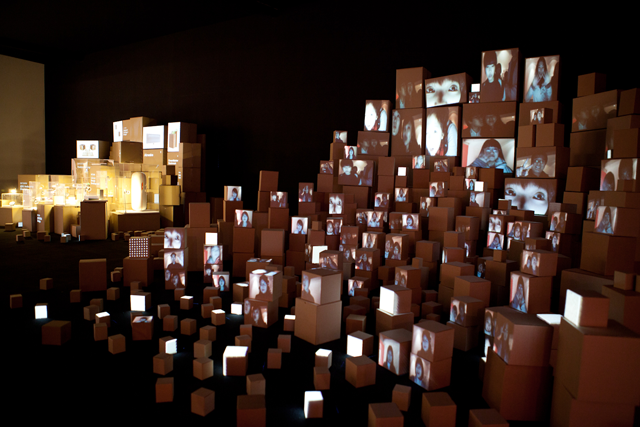

Building on their previous installation at Coffee Kitchen, Kimchi and Chips created ‘Link’, their latest interactive installation for Design Korea 2010, where people record their stories into a cityscape of cardboard boxes.

Link was created for the event as an interpretation of ‘Convergence’, the theme of the exhibition. The team presented a convergence of complex, fast-moving technologies with low-tech, everyday materials. The audience is also invited to take part and “can store their memories inside boxes”.

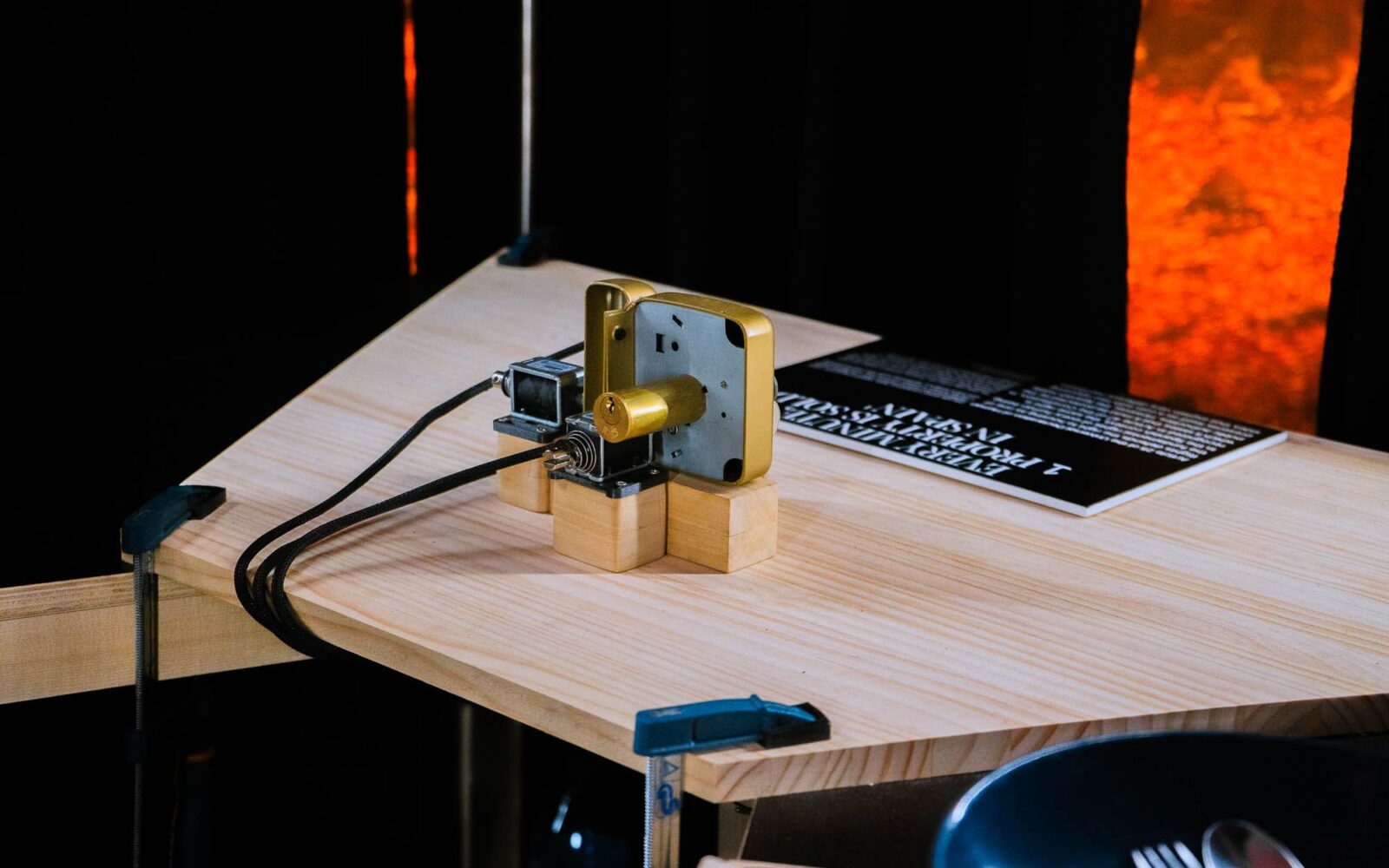

The installation includes a number of different components. To match the projections to the boxes, the team developed an iPad mapping application that lets users interactively match projected images to the boxes in the room. The app was built using openFrameworks and libmysql (see the video demo at the bottom of the post). The iPad interface that lets visitors add information was also built using openFrameworks, with 2-way communication over OSC. The main mapping playback was created using VVVV with custom plugins for threaded video playback and recording (up to 80 videos playing simultaneously while 2 videos are being recorded), with MySQL as the database. A total of around 3000 recordings were taken during the exhibition. The team also used Adobe Flash for designing animations.
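For readers unfamiliar with OSC, the sketch below shows roughly what a message travelling between an iPad interface and a playback server looks like on the wire. It is a minimal illustration in Python, not the team's openFrameworks code: the address `/box/12/label` and its arguments are hypothetical, and only the int32, float32 and string argument types from the OSC 1.0 format are handled.

```python
import struct


def osc_string(text: str) -> bytes:
    """Encode an OSC-string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    raw = text.encode("ascii") + b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def encode_osc(address: str, *args) -> bytes:
    """Build one OSC message from an address pattern and int/float/str arguments."""
    tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("bool arguments are not handled in this sketch")
        if isinstance(arg, int):
            tags += "i"
            payload += struct.pack(">i", arg)  # big-endian int32
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)  # big-endian float32
        elif isinstance(arg, str):
            tags += "s"
            payload += osc_string(arg)
        else:
            raise TypeError(f"unsupported argument type: {type(arg).__name__}")
    return osc_string(address) + osc_string(tags) + payload


def _read_string(data: bytes, offset: int):
    """Read an OSC-string starting at a 4-byte-aligned offset; return (text, next offset)."""
    end = data.index(b"\x00", offset)
    text = data[offset:end].decode("ascii")
    return text, (end + 4) & ~3  # skip the terminator and padding


def decode_osc(data: bytes):
    """Parse one OSC message back into (address, [arguments])."""
    address, offset = _read_string(data, 0)
    tags, offset = _read_string(data, offset)
    args = []
    for tag in tags[1:]:  # skip the leading comma
        if tag == "i":
            args.append(struct.unpack_from(">i", data, offset)[0])
            offset += 4
        elif tag == "f":
            args.append(struct.unpack_from(">f", data, offset)[0])
            offset += 4
        elif tag == "s":
            value, offset = _read_string(data, offset)
            args.append(value)
        else:
            raise ValueError(f"unsupported type tag: {tag}")
    return address, args


# Hypothetical message: attach a label to box 12.
packet = encode_osc("/box/12/label", "hello", 3, 0.5)
address, values = decode_osc(packet)
```

Because each side can both encode and decode, the same two functions cover both directions of a 2-way link; in practice the resulting bytes would typically be sent as a UDP datagram, for example with `socket.sendto`.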

Hardware included 3 servers, each Core i7 quad-core (8 threads), Nvidia GeForce GTX 460, 8GB RAM (for caching video playback), 2 x TripleHead2Go, 2 x PlayStation Eye and 6 x 3000lm projectors.

See the video, including the making-of, at the bottom of the post.

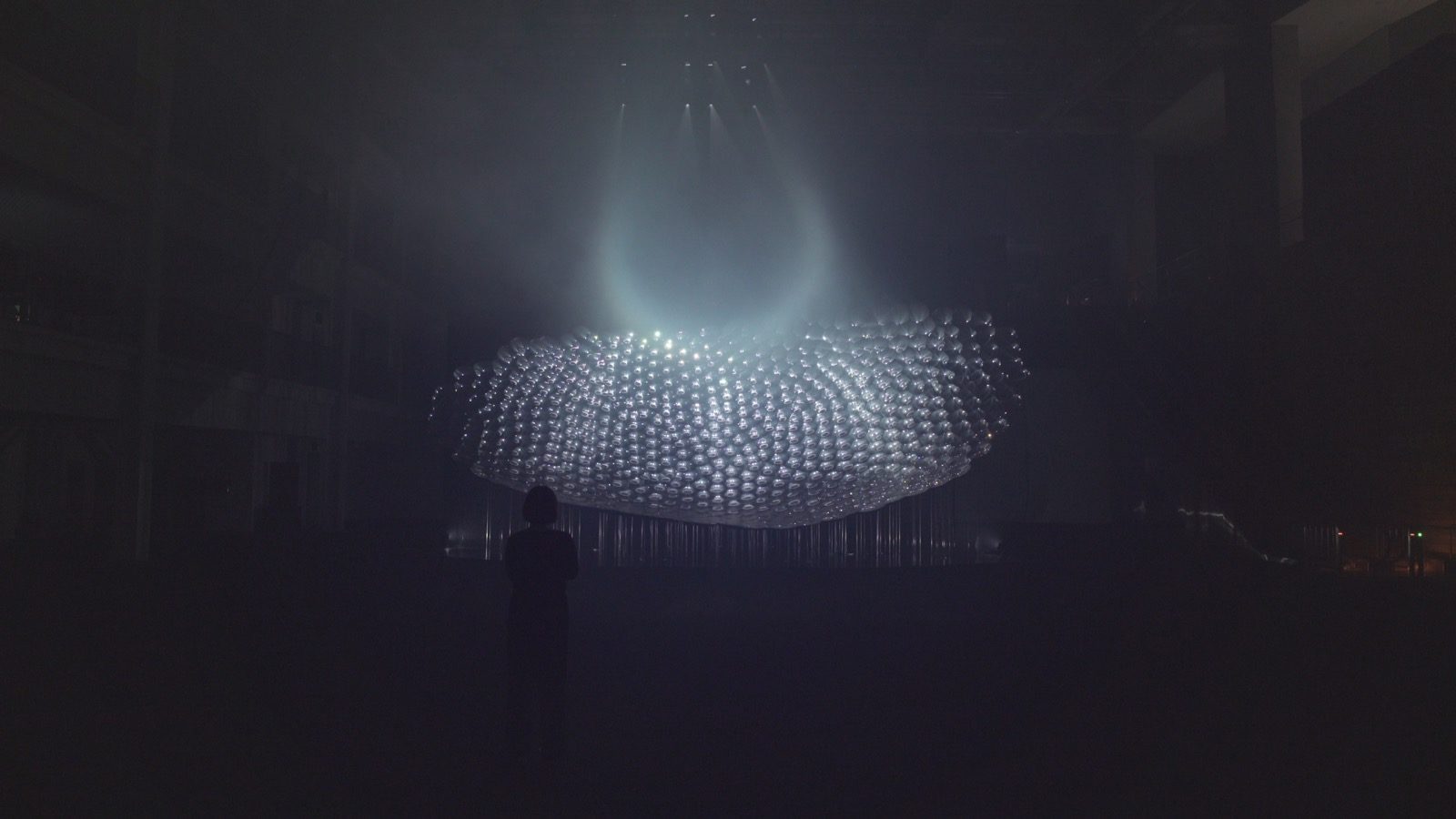

Kimchi and Chips are a cross-disciplinary art & design studio based in London and Seoul. They create installations, products and services that bridge the gap between people and people, people and technology, and people and nature. They are Elliot Woods, a media artist and technical designer, and Mimi Son, a user-centred interaction designer and visual artist.