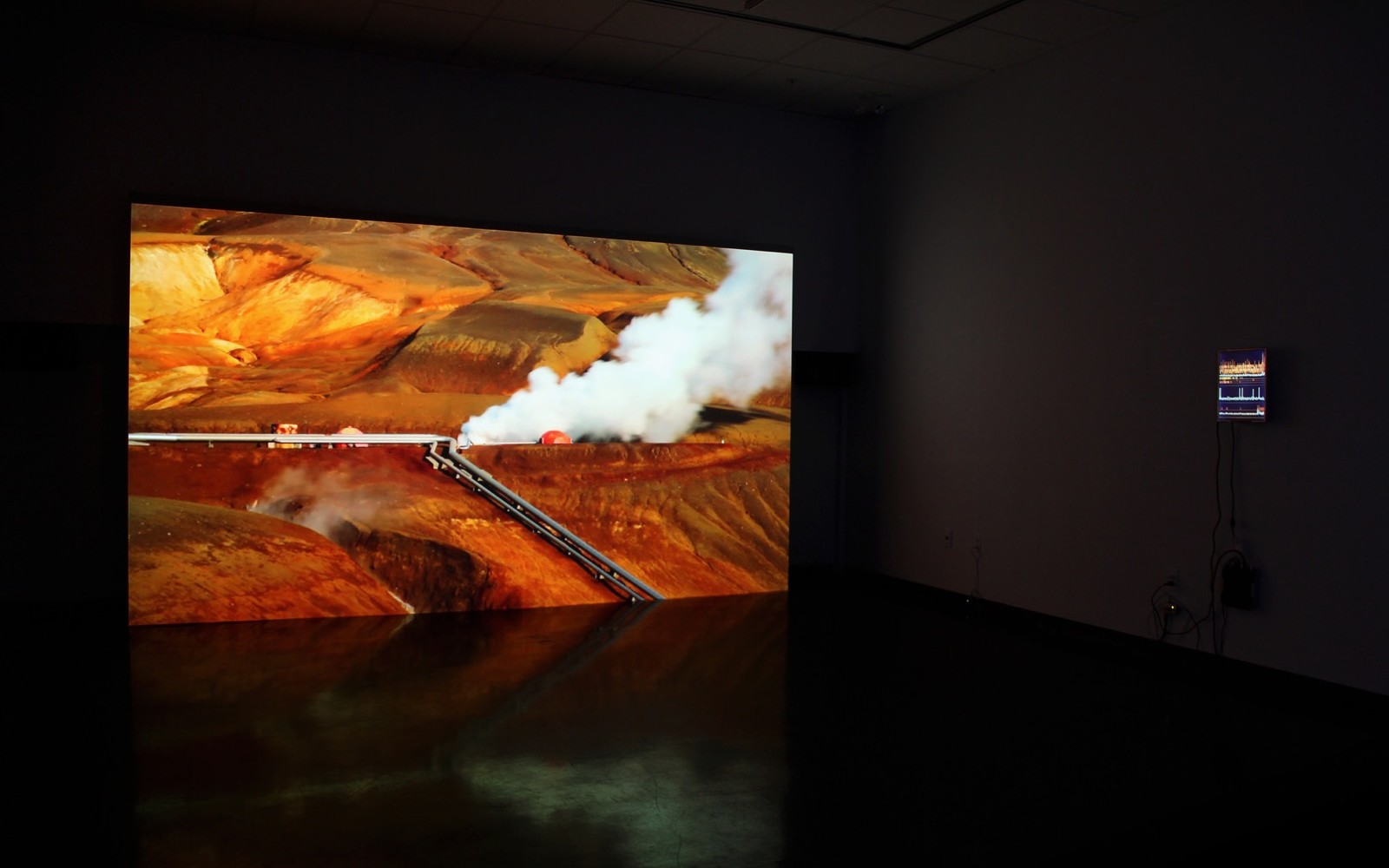

Given the current state of the world, this project is aptly named current_state. It is a fictional-functional product that analyzes daily news from major regions worldwide to understand global sentiment, then generates music that represents the world’s mood each day.

When someone enters the space (imagined target location: bathrooms), an embedded mmWave radar triggers playback. It’s designed as an everyday object, like the ambient music players in fancy hotel bathrooms. In this case it is powered by AI, but the AI blends into its environment. A visitor hears only a looping song, unaware of the intentions and mechanisms embedded inside, much like modern devices that perform complex operations we don’t necessarily understand.

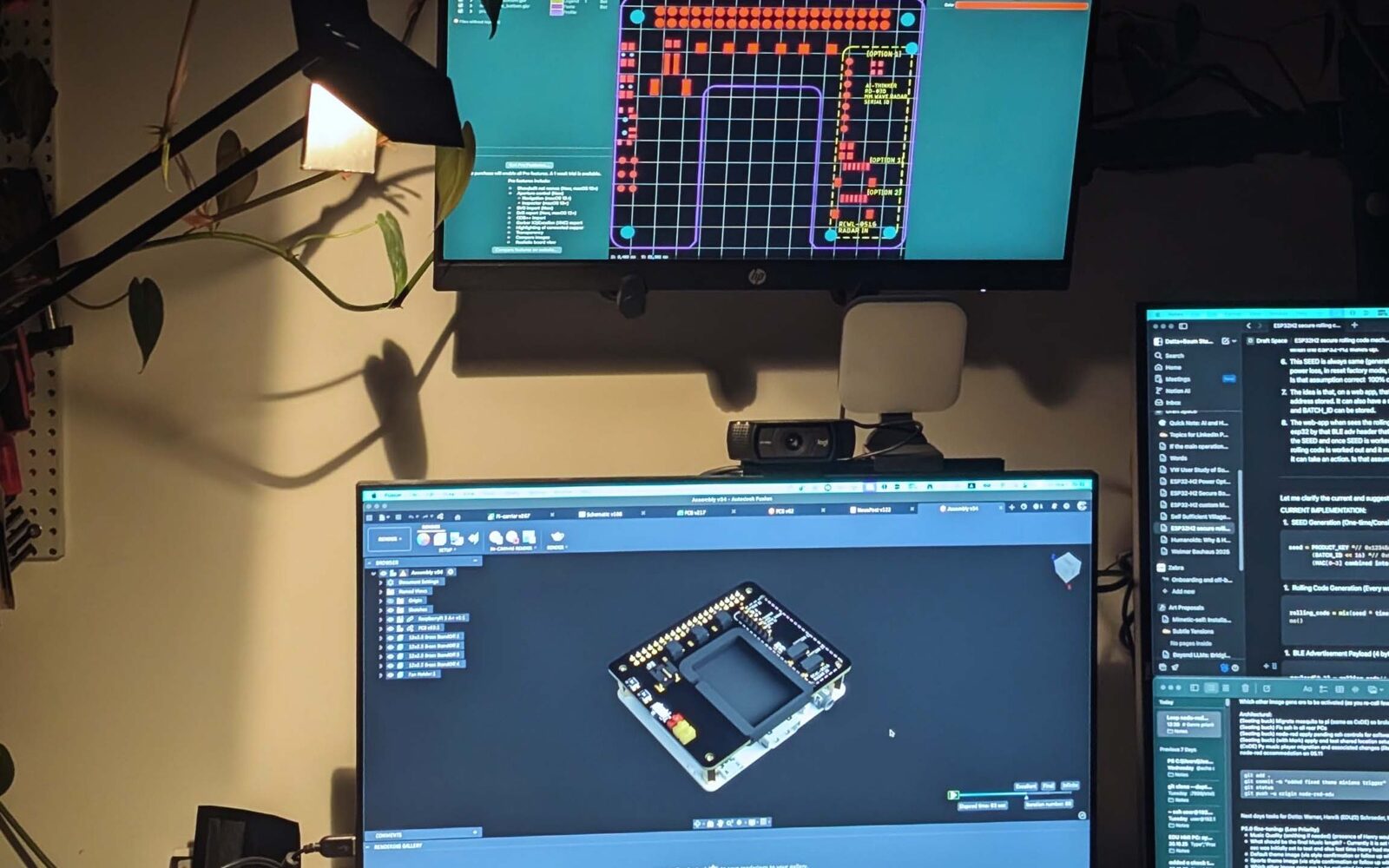

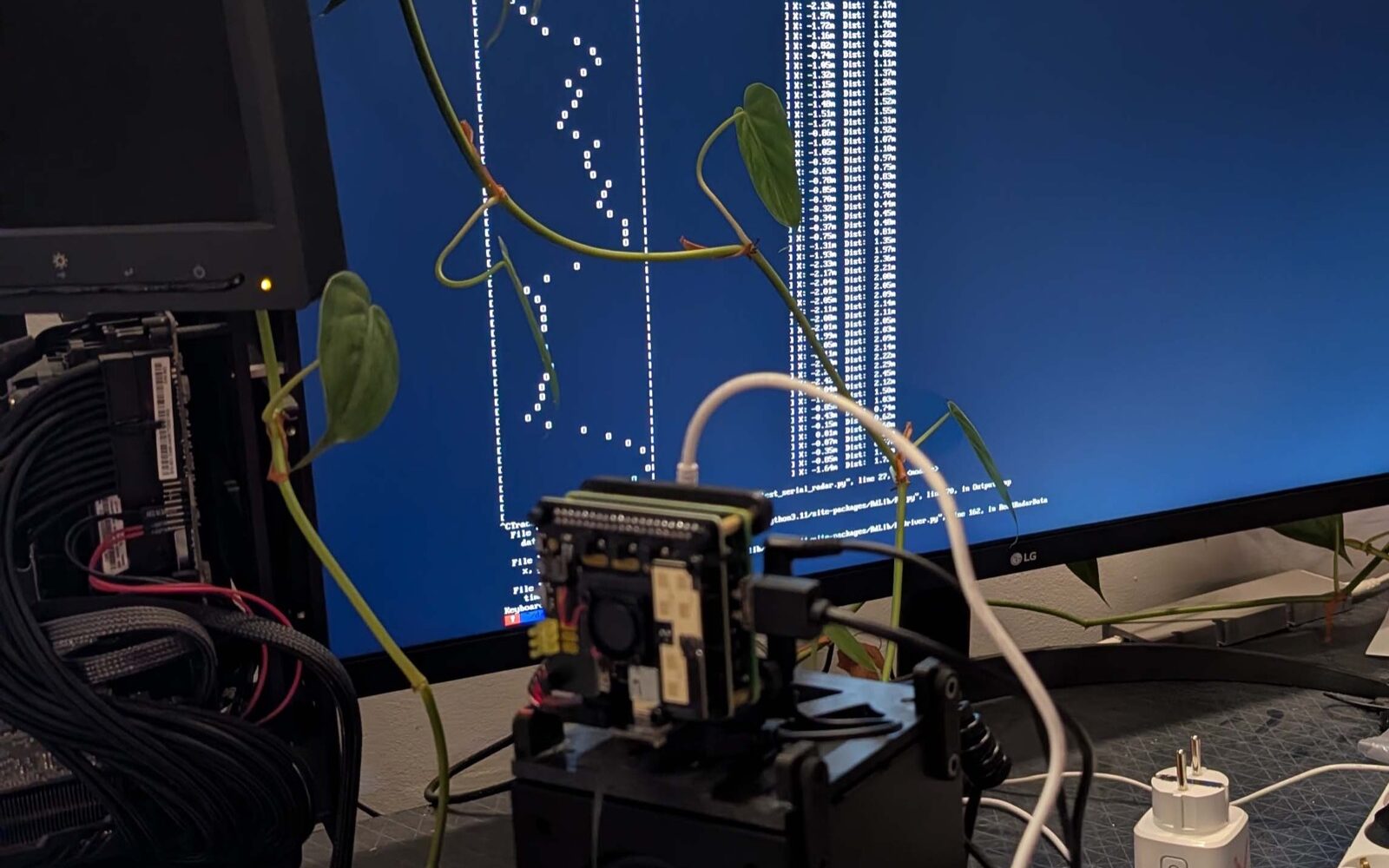

All sounds are ambient, whether the sentiment is chaotic (leaning melancholic) or positive. The system uses a hybrid process rather than a purely AI-driven one: music generation requires careful prompting strategies (which vary across models), so controlled features are implemented to ensure pleasant, repeatable output. Because the process can be complex, current_state hosts a self-explanatory web interface where the whole chain is visualized.
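To make the "hybrid" idea concrete, here is a minimal sketch of how a sentiment score might be turned into a constrained music prompt. It is not the project's actual code: the score range, the thresholds, the tempo values, the prompt wording, and the names `MusicSpec`, `spec_from_sentiment` and `build_prompt` are all assumptions made for illustration. Only the 30-second duration and the ambient style come from the description on this page.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MusicSpec:
    """Hand-picked (non-AI) parameters that keep the output predictable."""
    mood: str
    tempo_bpm: int
    duration_s: int = 30  # the page mentions 30-second pieces


def spec_from_sentiment(score: float) -> MusicSpec:
    """Map a sentiment score to a fixed spec.

    Assumption: score lies in [-1, 1], negative meaning chaotic news.
    The thresholds and tempos below are illustrative, not the project's.
    """
    score = max(-1.0, min(1.0, score))  # clamp out-of-range input
    if score < -0.2:
        return MusicSpec(mood="melancholic", tempo_bpm=60)
    if score > 0.2:
        return MusicSpec(mood="uplifting", tempo_bpm=80)
    return MusicSpec(mood="calm", tempo_bpm=70)


def build_prompt(spec: MusicSpec) -> str:
    """Build a text prompt; every branch stays ambient by construction."""
    return (
        f"ambient, {spec.mood}, slow evolving pads, no drums, "
        f"{spec.tempo_bpm} bpm, seamless loop"
    )


if __name__ == "__main__":
    print(build_prompt(spec_from_sentiment(-0.6)))
```

The point of a fixed mapping like this is that the model only fills in the texture, while mood, tempo and length stay under the designer's control, so even a bad news day produces something listenable.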

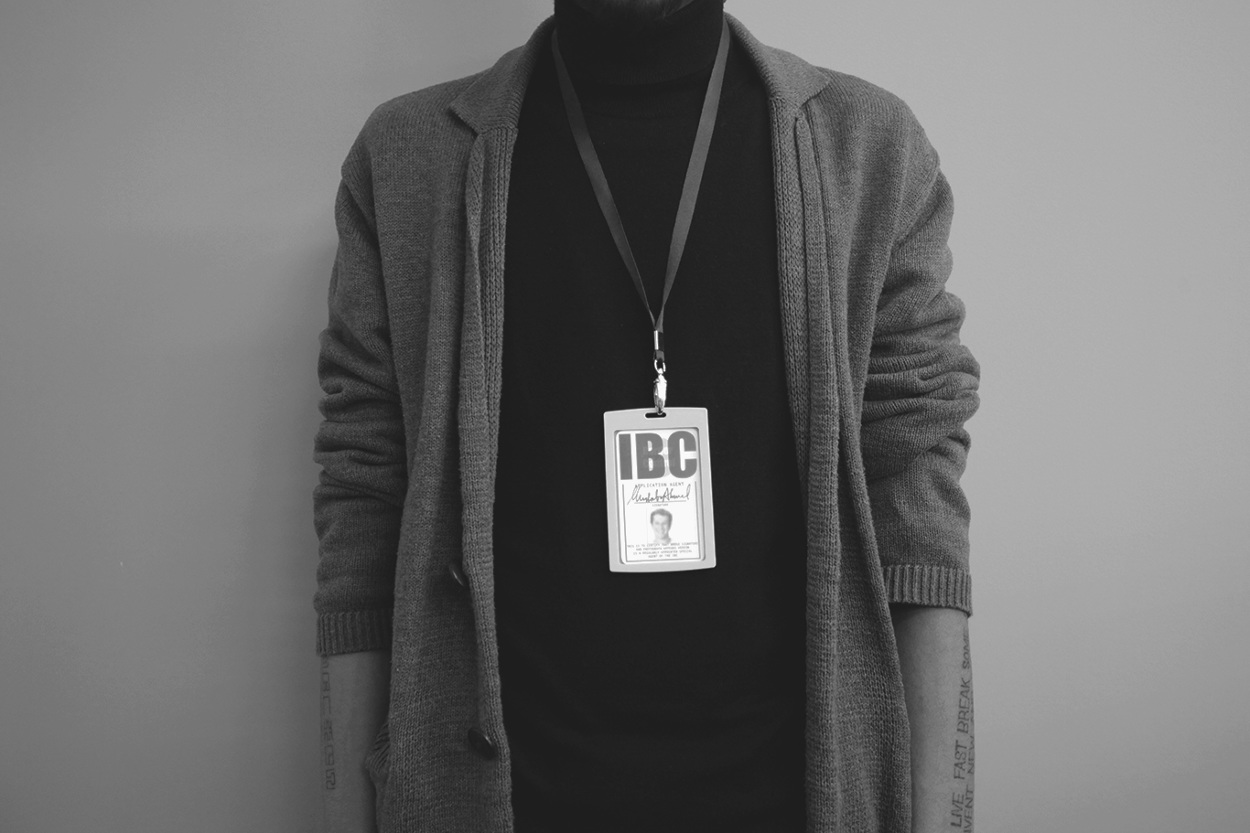

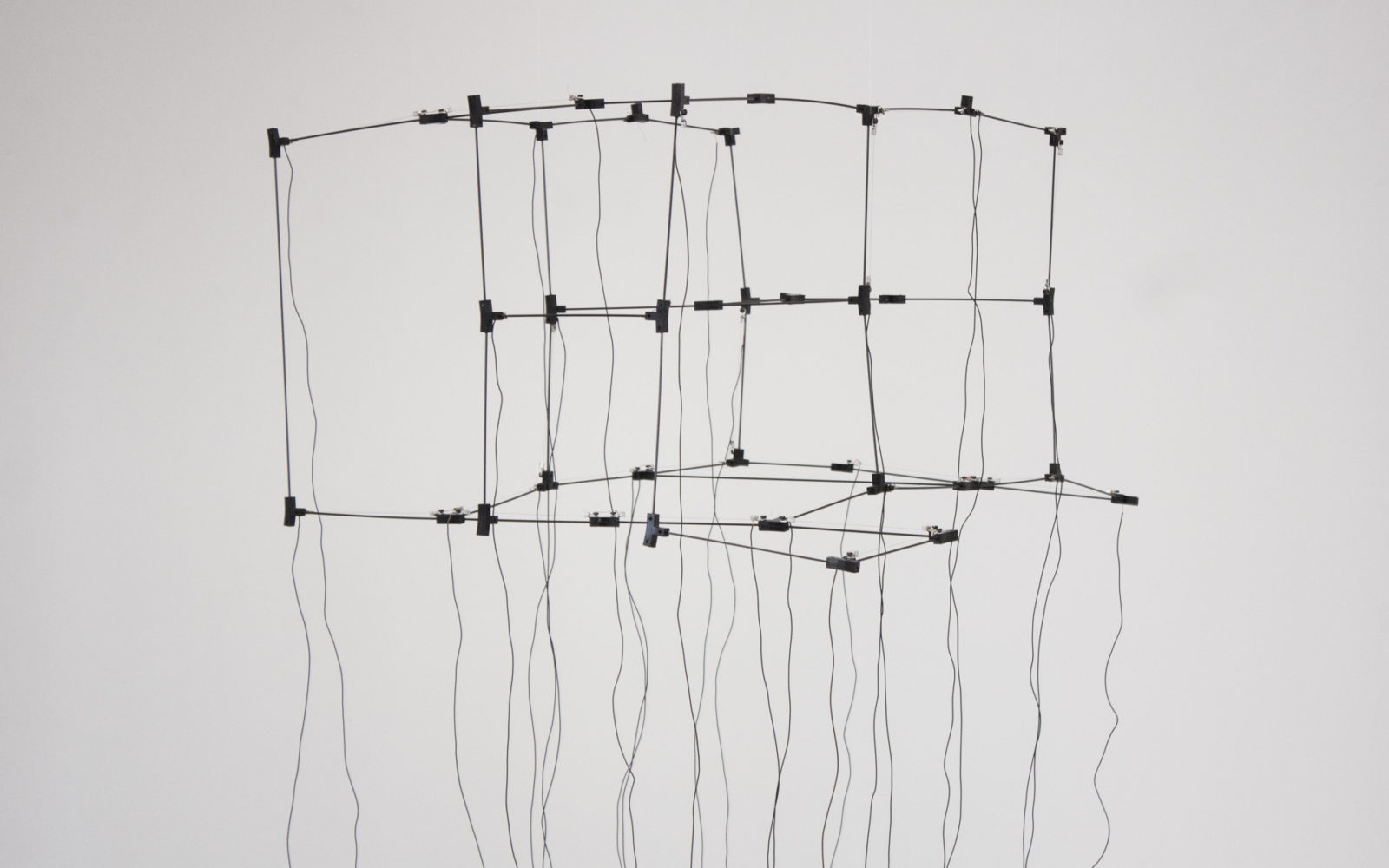

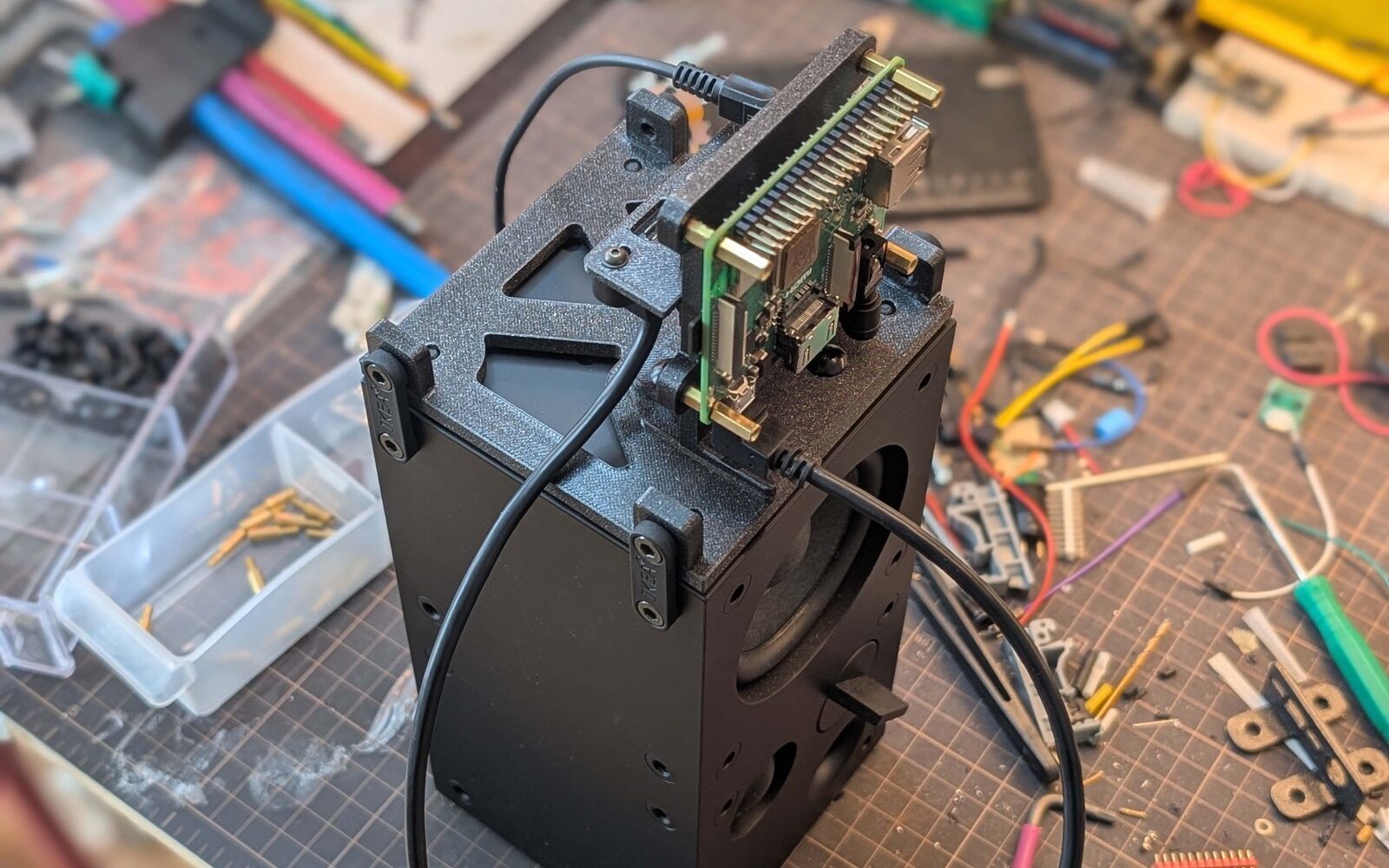

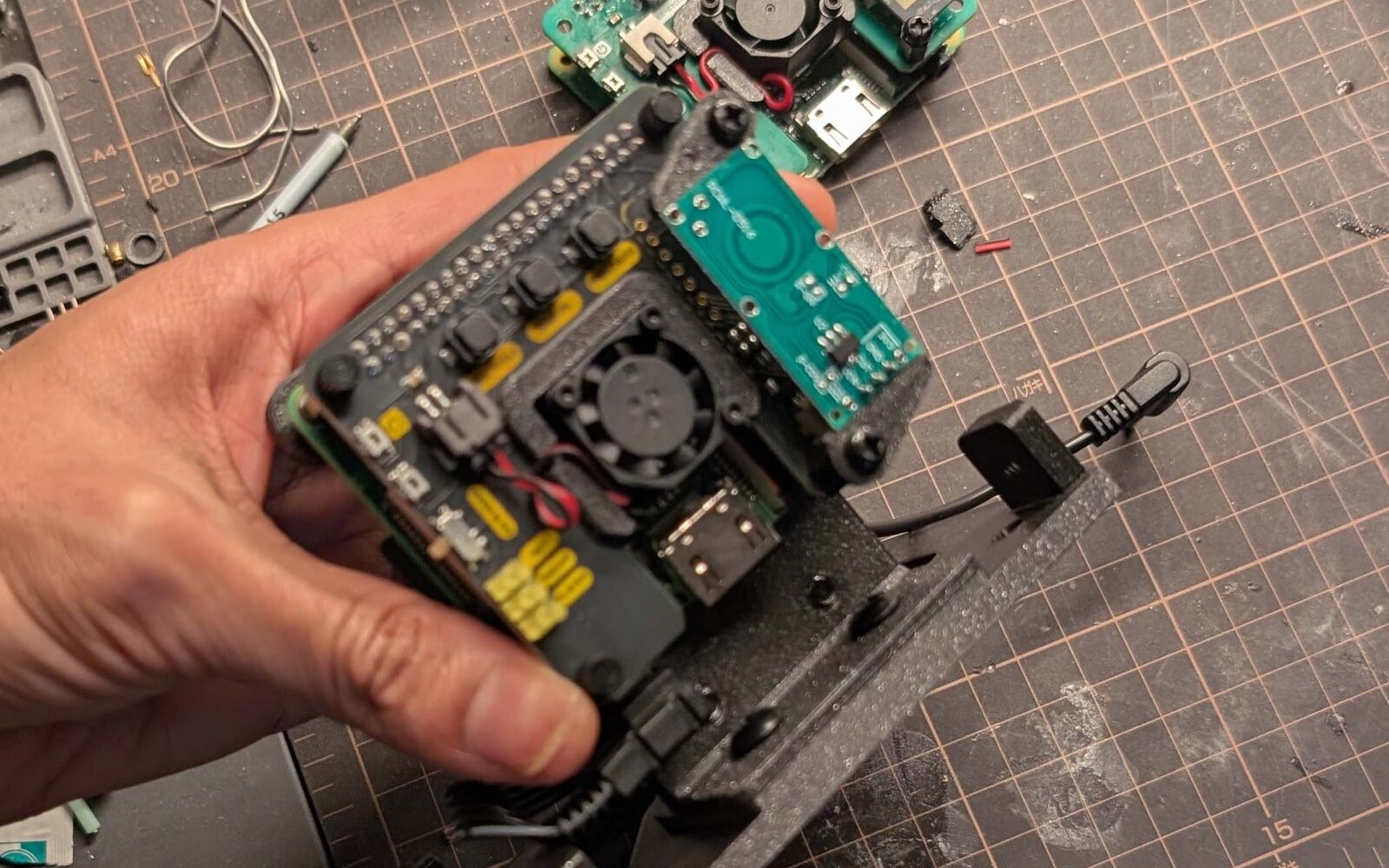

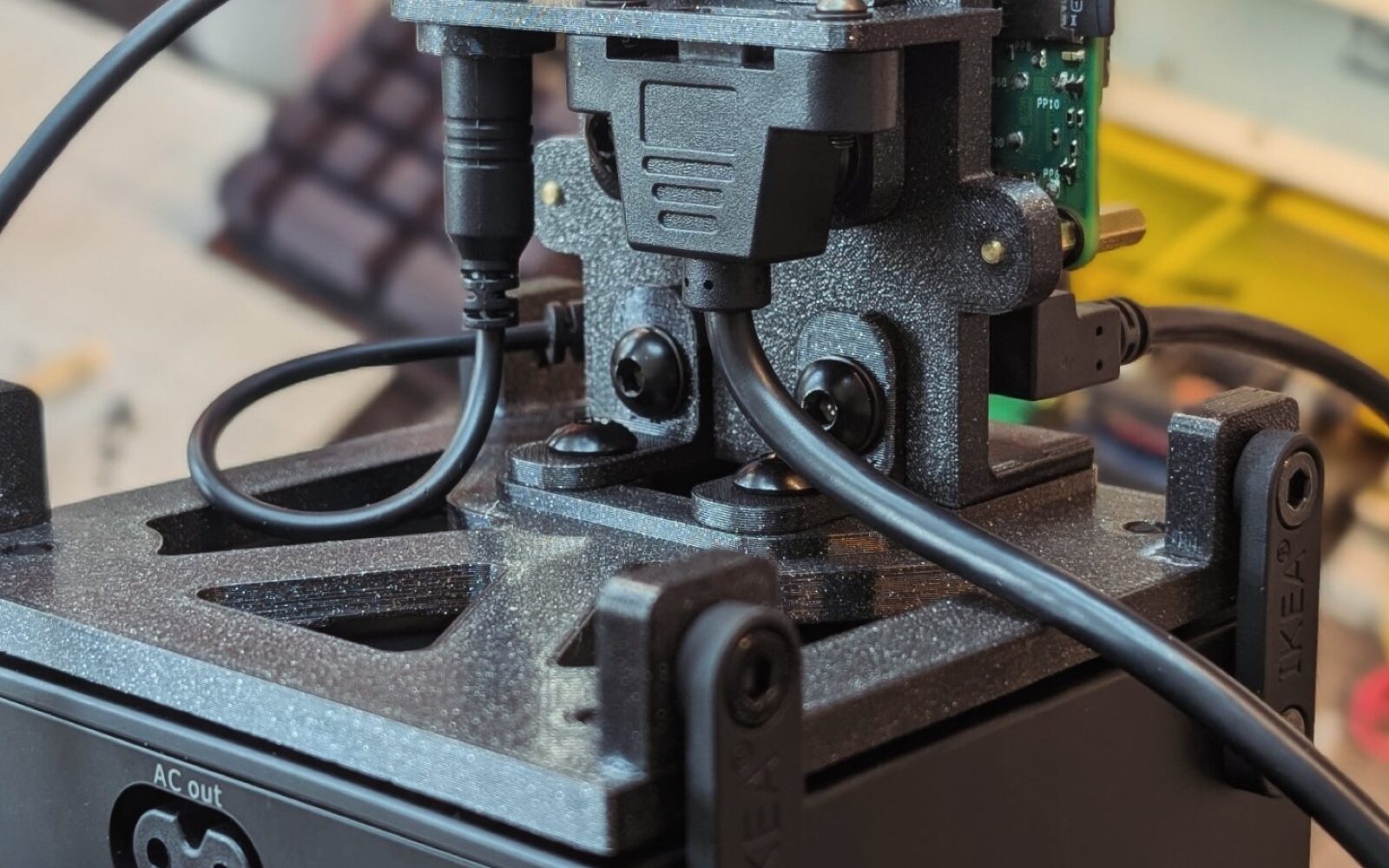

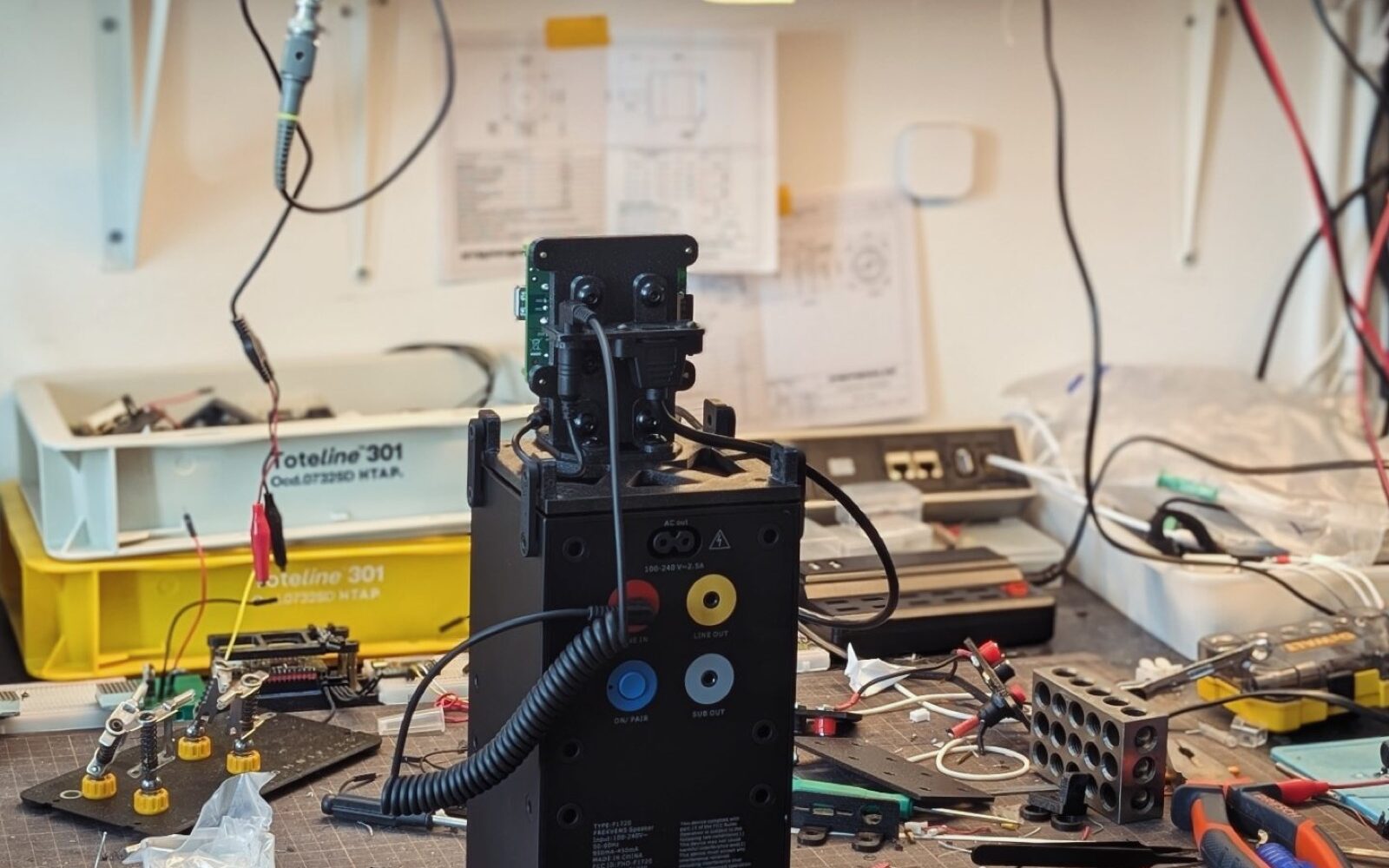

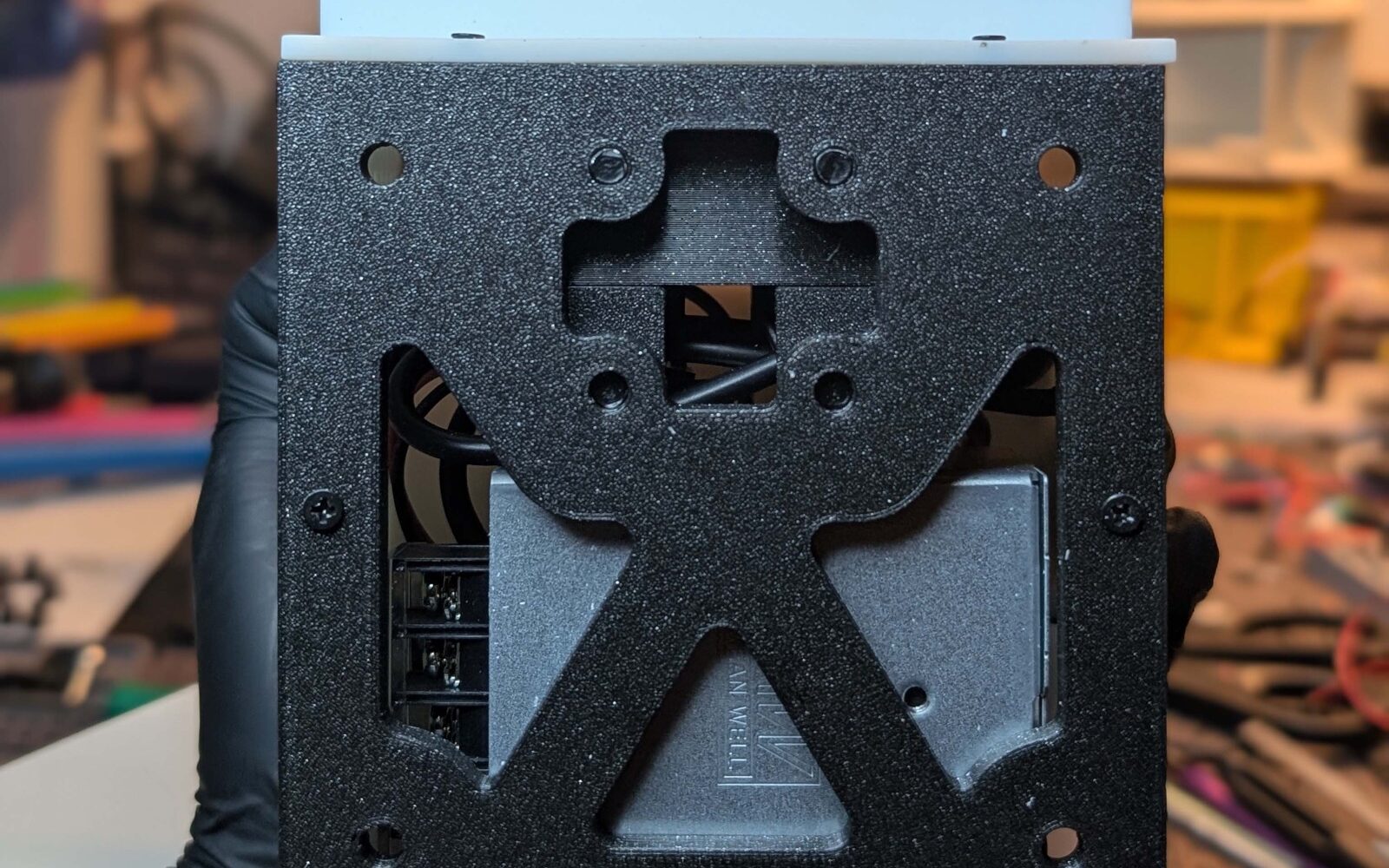

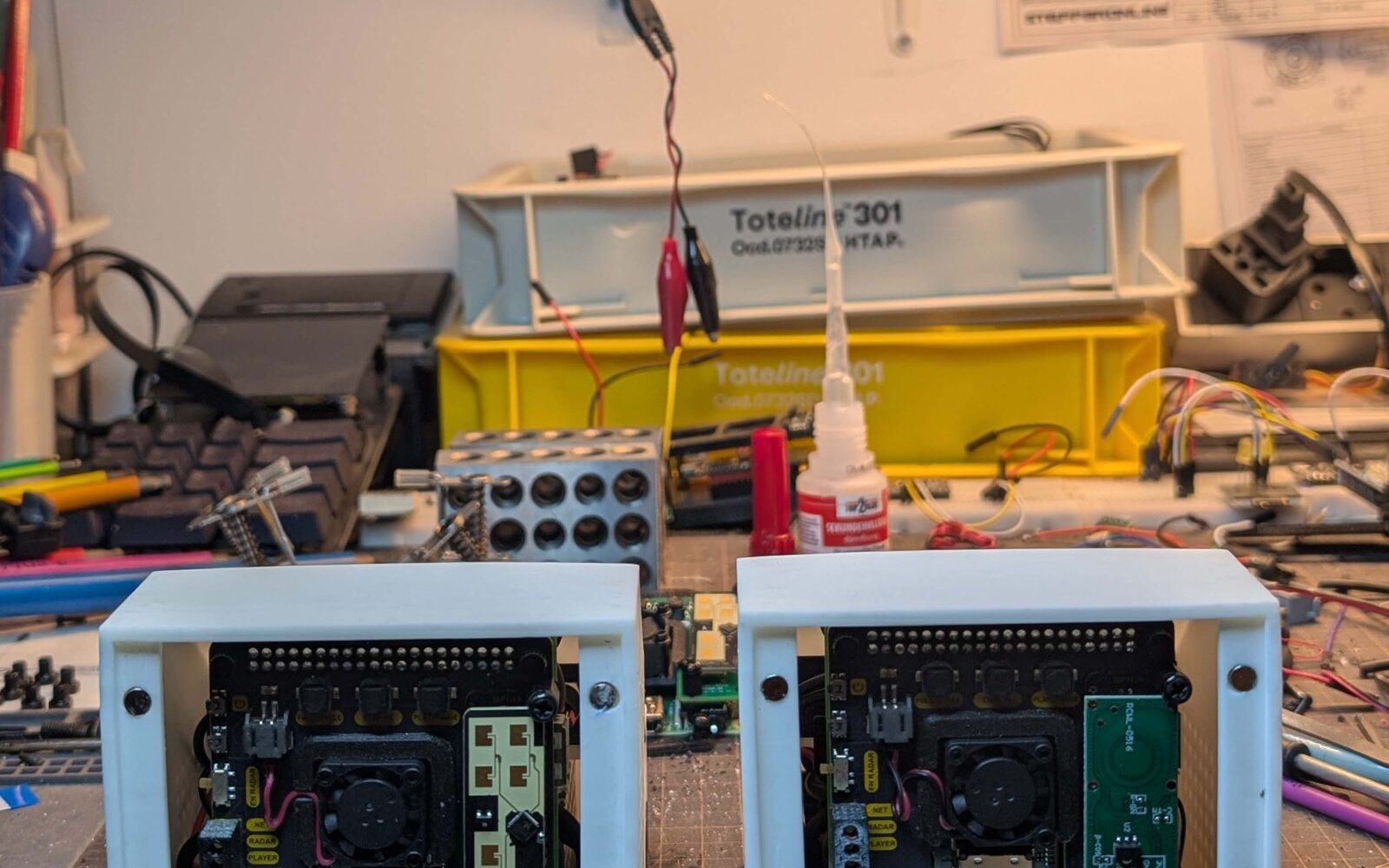

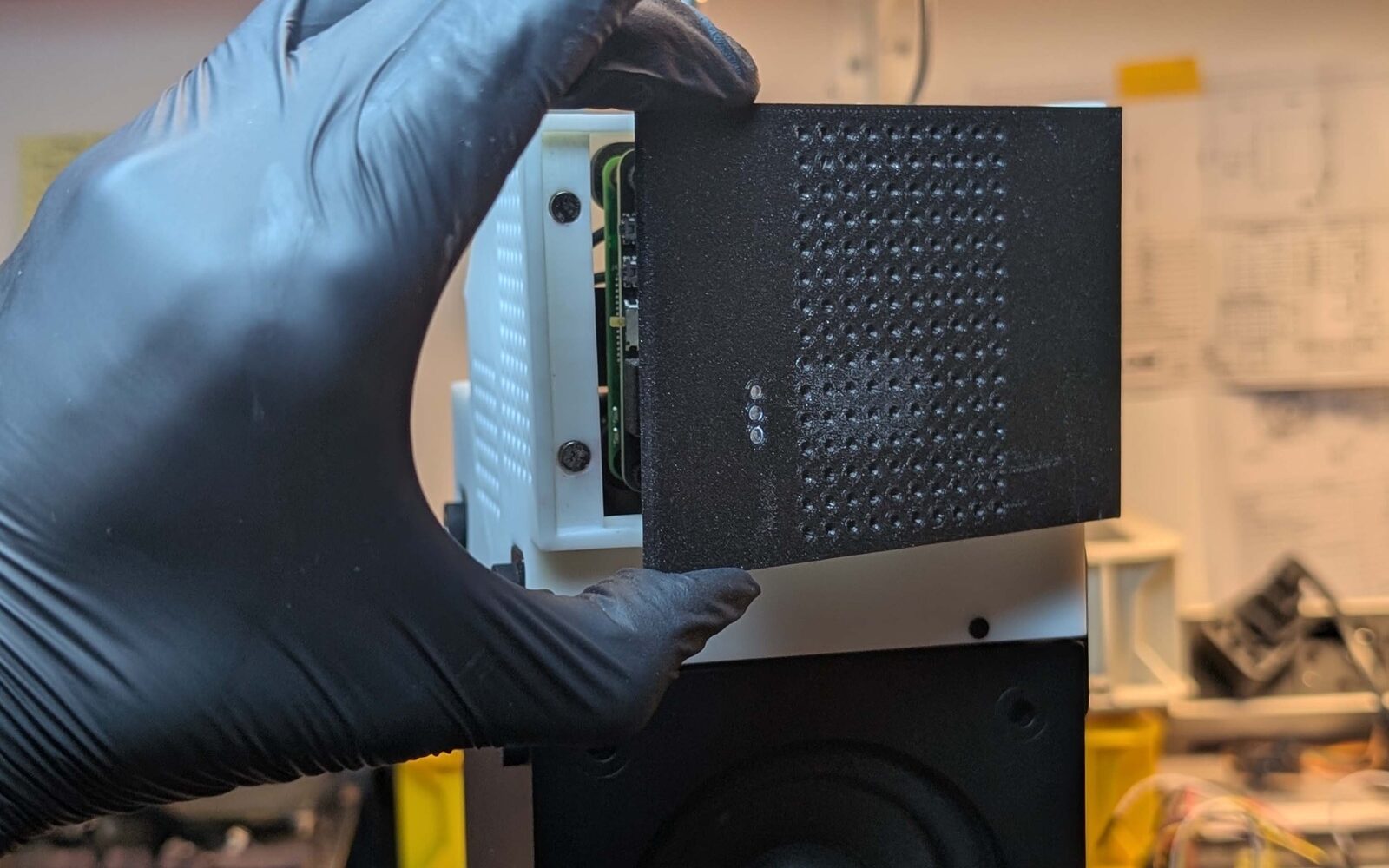

The device can’t play audio on its own, so it requires an additional accessory (shown in the image: IKEA FREKVENS speakers, designed in collaboration with Teenage Engineering). Following this theme, it’s designed to look like a real industrial product. The form, somewhat brutal, draws inspiration primarily from the speakers themselves. It includes product-like details: easy user-configurable WiFi and simple press-based power controls. These elements are designed with the same care you’d expect in a real IoT product’s software.

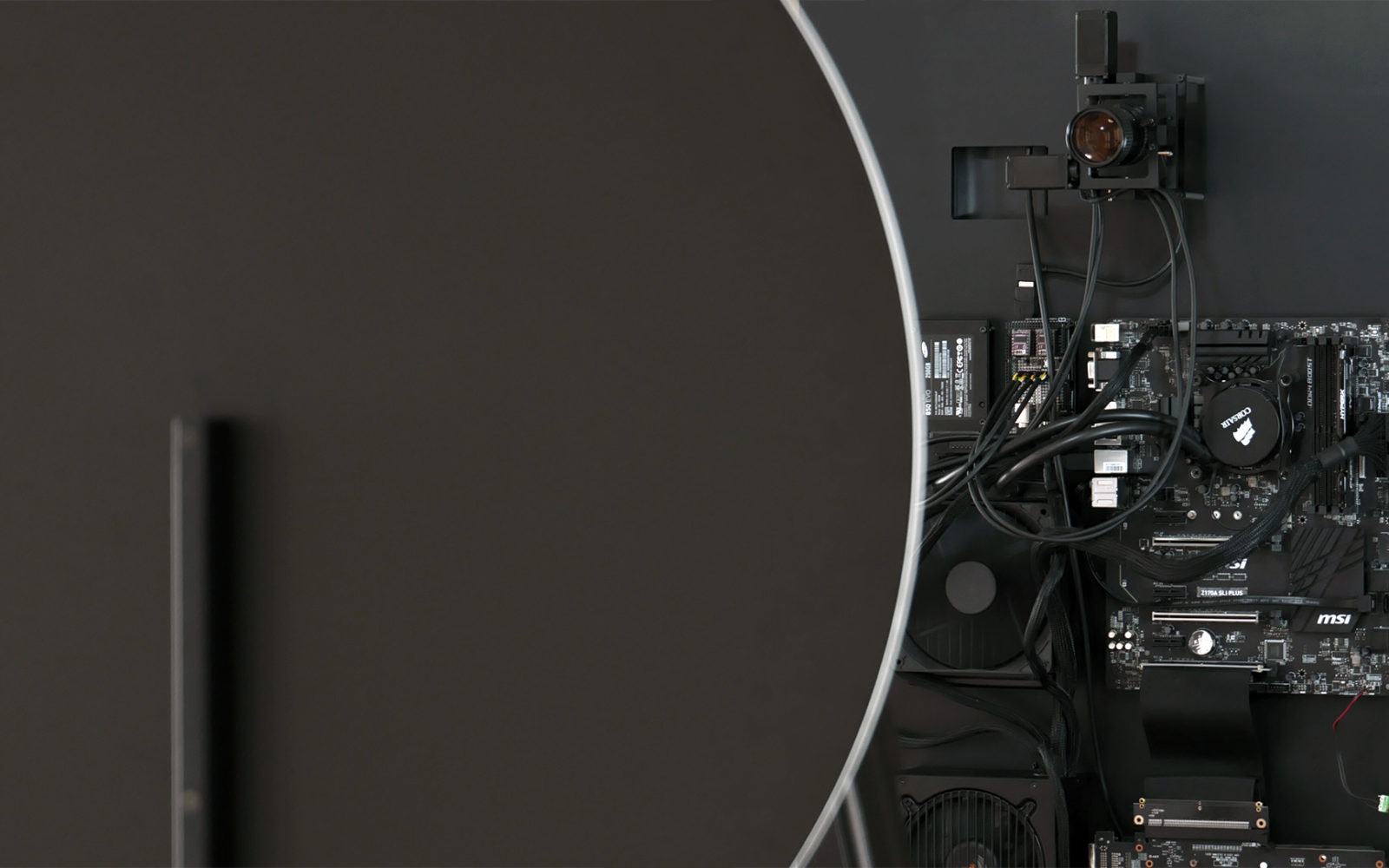

The system runs autonomously on a Raspberry Pi using open-source models hosted on Replicate. All functions can be triggered and controlled with the onboard hardware buttons: disabling the radar trigger, shutting down or powering on the Pi, and even resetting the Pi’s WiFi can all be done via the custom Pi HAT I designed.

Every night at 3:00 AM, it scrapes the news and generates a 30-second ambient music piece based on world sentiment (and various other factors). Then, when the device detects a person’s presence (also signaled via the LED), it plays that music in a loop for a predefined time (currently 5 minutes) and then stops. While music is playing, the LED is on; while paused, it is off.

The device also serves a web monitor with three tabs: (1) News, showing the news that was collected; (2) Pipeline, explaining how the music was generated, including the music files; and (3) Logs, covering every process for live preview and debugging of any step. If the device fails to connect to WiFi, the LED blinks to notify you. If any step in the full pipeline (news scraping -> music generation) fails, it shows up in the logs, and if radar detection is disabled, the LED also notifies you with blinks.
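The presence-triggered playback described above can be sketched as a small state machine. This is a hedged illustration, not the firmware running on the device: the class name `PlaybackController`, the `update` method, and the injected `clock` are hypothetical, and real radar, LED and audio I/O are left out. It also assumes that presence detected during playback does not extend the 5-minute window, which the description does not specify.

```python
import time
from typing import Callable

LOOP_SECONDS = 5 * 60  # "currently 5 minutes" per the description


class PlaybackController:
    """Tracks whether music should be playing, given radar readings."""

    def __init__(
        self,
        clock: Callable[[], float] = time.monotonic,
        loop_seconds: float = LOOP_SECONDS,
    ) -> None:
        self._clock = clock            # injectable so the logic is testable
        self._loop_seconds = loop_seconds
        self._stop_at = 0.0
        self.radar_enabled = True      # a hardware button can disable this
        self.playing = False

    @property
    def led_on(self) -> bool:
        # The LED mirrors playback: on while playing, off otherwise.
        return self.playing

    def update(self, presence: bool) -> bool:
        """Feed one radar reading; return True if music should be playing."""
        now = self._clock()
        if self.playing:
            # Assumption: the window is fixed and not extended by presence.
            if now >= self._stop_at:
                self.playing = False
        elif presence and self.radar_enabled:
            self.playing = True
            self._stop_at = now + self._loop_seconds
        return self.playing


if __name__ == "__main__":
    controller = PlaybackController(loop_seconds=2)
    print(controller.update(presence=True))   # playback starts
    time.sleep(2.1)
    print(controller.update(presence=False))  # window elapsed, playback stops
```

Passing the clock in as a parameter keeps the timing logic independent of the hardware, so it can be exercised on a laptop before it ever touches the Pi.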

| Hardware | Software |
| --- | --- |
| Custom 3D design & prints, custom PCB design & assembly (Pi HAT), Raspberry Pi | Replicate AI API (meta/musicgen & meta/llama3), NewsAPI, Python |

More details are available on GitHub (here, here and here).

Project Page | Saurabh Datta | X | Instagram