Created by Golan Levin, Chris Sugrue, and Kyle McDonald, The Augmented Hand Series is a real-time interactive software system that presents playful, dreamlike, and uncanny transformations of its visitors’ hands. The project explores interrelations of hand, mind and identity and is designed as “an instrument for probing or muddling embodied cognition, using a ‘direct manipulation’ interface and the suspension of disbelief to further problematize the mind-body problem”. Originally conceived in 2004, the project was developed at the Frank-Ratchye STUDIO for Creative Inquiry in 2013-2014 through a commission from the Cinekid Festival of children’s media.

The hand is a critical interface to the world, allowing the use of tools, the intimate sense of touch, and a vast range of communicative gestures. Yet we frequently take our hands for granted, thinking with them, or through them, but hardly ever about them. Our investigation takes a position of exploration and wonder. Can real-time alterations of the hand’s appearance bring about a new perception of the body as a plastic, variable, unstable medium? Can such an interaction instill feelings of defamiliarization, prompt a heightened awareness of our own bodies, or incite a reexamination of our physical identities? Can we provoke simple wonder about the fact that we have any control at all over such a complex structure as the hand?

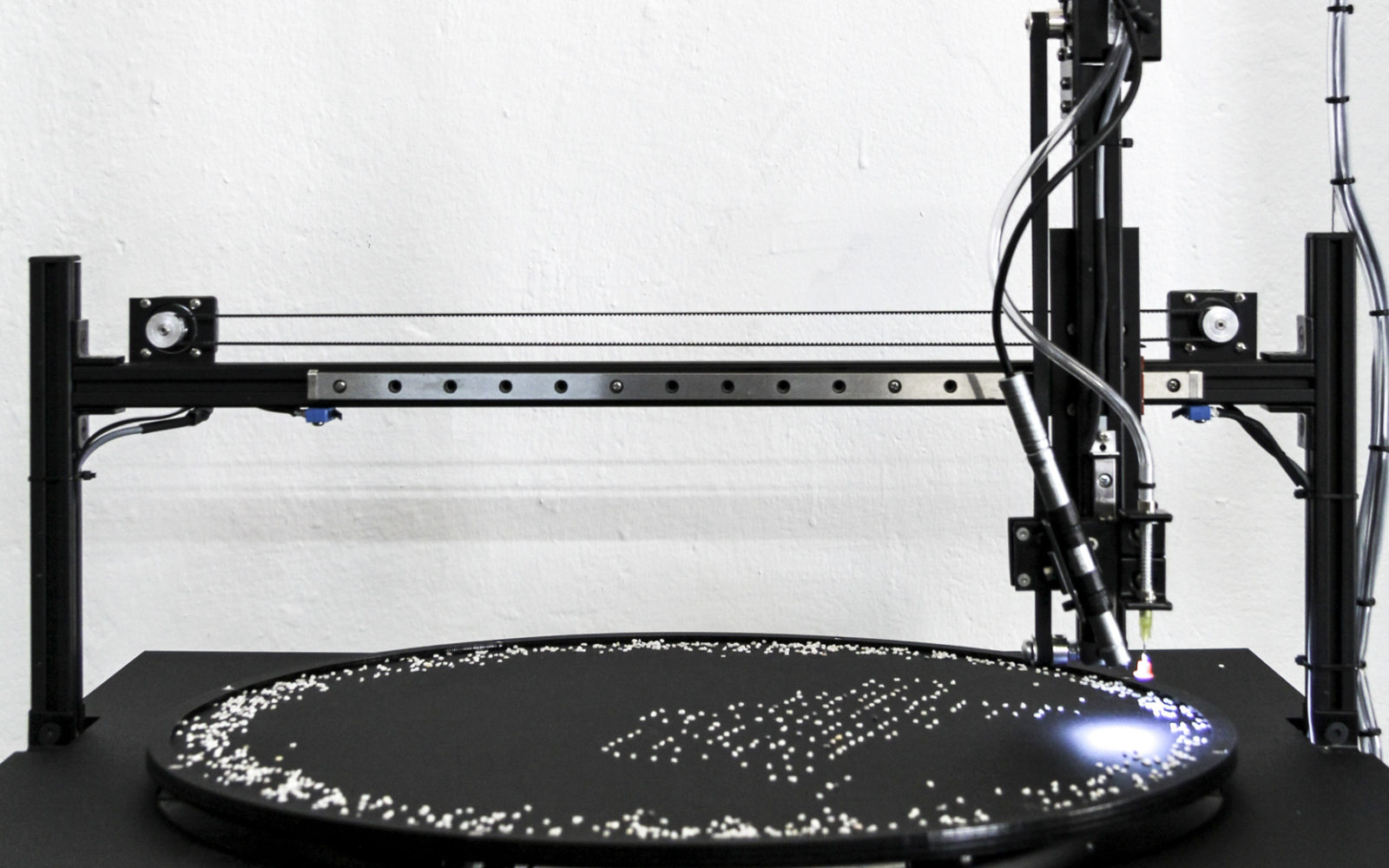

The installation, as premiered at the 2014 Cinekid festival, invited visitors to interact with the software by inserting their hand into a ‘box’ constructed to provide an alternative (interactive) view of their hand. The accompanying large-scale projection displays the dynamic and structural transformations affecting the hand, and the system works even with visitors who wiggle their fingers, or who move and turn their hand. The software may produce unpredictable glitches if the visitor’s hand differs significantly from a flat palm-down or palm-up pose. However, the system accommodates a wide range of hand sizes, from children (of about 5 years old) through adults, as well as a very broad range of skin colors. It also performs robustly with hands that have jewelry, nail polish, tattoos, birthmarks, wrinkles, arthritic swelling, and/or unusually long, short, wide or slender digits.

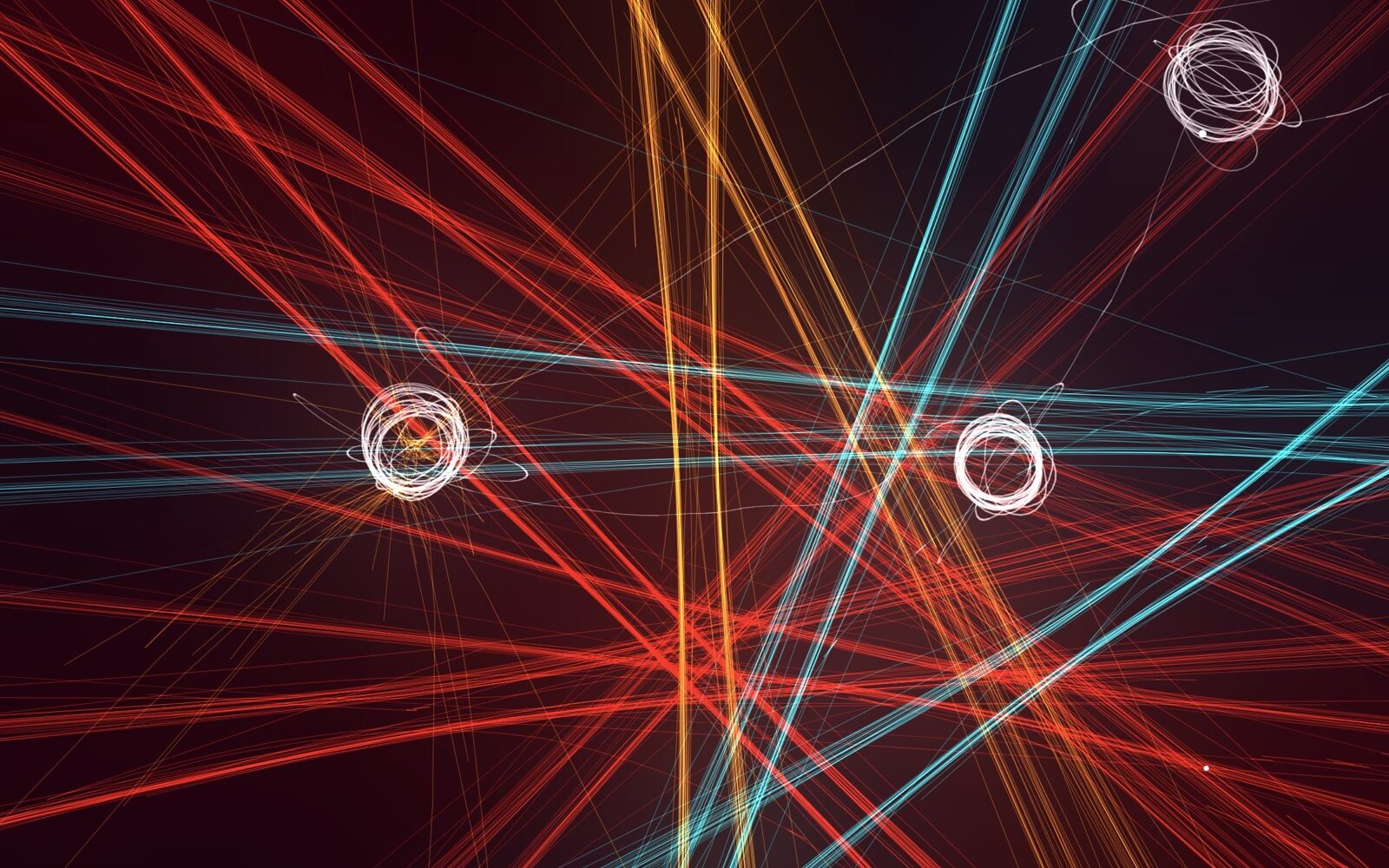

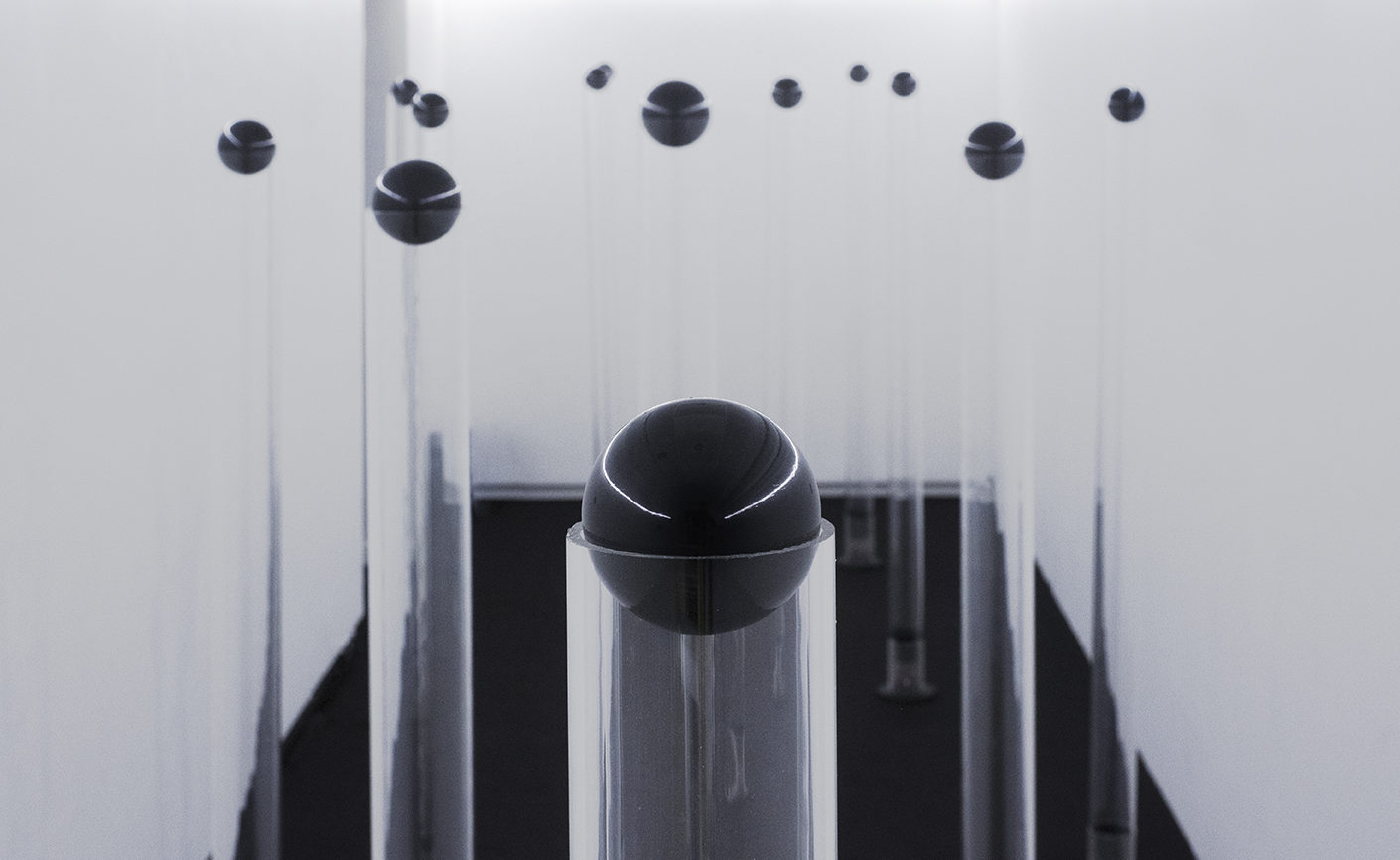

About twenty different transformations or scenes have been developed for the project. Some of these perform structural edits to the hand’s archetypal form; others endow the hand with new dimensions of plasticity; and others imbue the hand with a kind of autonomy, whose resulting behavior is a dynamic negotiation between visitor and algorithm. These include ‘Plus One’, ‘Minus One’, ‘Variable Finger Length’, ‘Meandering Fingers’ (the fingers take on a life of their own), ‘Procrustes’ (all fingers are made the same length), ‘Lissajous’ (the palm is warped in a periodic way), ‘Breathing Palm’ (the palm inflates and deflates), ‘Vulcan Salute’ (the third and fourth fingers are cleaved), ‘Angular Exaggeration’ (fingers are added and angles amplified), and ‘Springers’ (finger movements are exaggerated by bouncy simulated physics).
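As a rough illustration of how a scene like ‘Springers’ might exaggerate motion, the sketch below drives a displayed fingertip coordinate toward the tracked one with an underdamped spring, so that it overshoots and bounces before settling. This is only an assumption about the general technique, not the project’s actual code; the function name `spring_step` and the stiffness, damping and time-step values are hypothetical.

```python
def spring_step(position, velocity, target,
                stiffness=120.0, damping=6.0, dt=1.0 / 60.0):
    """Advance a 1D damped spring by one frame (semi-implicit Euler).

    All parameter values are illustrative assumptions; with these numbers
    the spring is underdamped, so the position overshoots the target.
    """
    # Hooke's law pulls toward the target; damping resists the velocity.
    acceleration = stiffness * (target - position) - damping * velocity
    # Update velocity first, then position, for better numerical stability.
    velocity = velocity + acceleration * dt
    position = position + velocity * dt
    return position, velocity


def simulate(target, steps, start=0.0):
    """Return the list of positions visited while chasing a fixed target."""
    position, velocity = start, 0.0
    trace = []
    for _ in range(steps):
        position, velocity = spring_step(position, velocity, target)
        trace.append(position)
    return trace
```

Applied per coordinate to each fingertip, a step like this would make the rendered finger lag behind, swing past, and then settle onto the visitor’s real finger position.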

The Augmented Hand Series was developed using openFrameworks. Structurally, the artwork consists of two main components: a system which attempts to understand the structure of the hand, and a system for altering the image of the hand. Both components could only be achieved by using certain recent advances in computer vision and computer graphics.

To build a model of the visitor’s hand, the project combines geometric information about the hand’s “skeleton” (obtained from a Leap Motion controller) with pixel- and contour-based information gleaned from a high-resolution color video camera (with the aid of OpenCV-based image processing techniques such as background subtraction, blob detection, edge detection, and contour extraction). Because the camera and the Leap controller are necessarily located in physically different places, they observe the visitor’s hand from different points of view. As a result, one of the first technical hurdles in creating the project was developing code to calibrate these two sensors against each other, so that the skeleton data produced by the Leap and the contour data obtained from the camera could be aligned in a common coordinate system.
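Once such a calibration exists, aligning the two data sources amounts to projecting each 3D skeleton point into the camera image. The following is a minimal sketch of that step using a standard pinhole camera model; it is not taken from the project’s source. The `CameraModel` class, the `leap_to_pixel` function, and every numeric value are hypothetical, and the sketch assumes the rotation, translation and intrinsics have already been estimated by some calibration procedure.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class CameraModel:
    """Hypothetical calibration result relating Leap space to the camera."""
    rotation: List[List[float]]  # 3x3 rotation, Leap axes -> camera axes
    translation: Vec3            # Leap origin expressed in camera space
    fx: float                    # focal lengths, in pixels
    fy: float
    cx: float                    # principal point, in pixels
    cy: float


def leap_to_pixel(point: Vec3, cam: CameraModel) -> Tuple[float, float]:
    """Project a 3D skeleton point into camera pixel coordinates."""
    # Rigid transform: rotate, then translate into the camera's frame.
    xc, yc, zc = (
        sum(cam.rotation[r][c] * point[c] for c in range(3))
        + cam.translation[r]
        for r in range(3)
    )
    if zc <= 0:
        raise ValueError("point lies behind the camera")
    # Perspective divide, then scale and offset by the intrinsics.
    return (cam.fx * xc / zc + cam.cx, cam.fy * yc / zc + cam.cy)
```

With every joint of the skeleton mapped this way, the joint positions and the camera’s hand contour can be compared directly in pixel space.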

Golan’s weeklong summer workshop in vision hacking at the woodsy Anderson Ranch arts center provided the ideal setting for addressing the Leap/camera calibration problem. Workshop participant and game developer Simon Sarginson, with remote assistance from Elliot Woods, developed an application to perform real-time augmented projections of the Leap skeleton data onto a person’s hand. Although the application space of Simon’s concept is physically distinct from the Augmented Hand Series (he calibrates a Leap+projector instead of a Leap+camera), the mathematics are exactly the same. It’s worth pointing out that recent updates to the Leap SDK should make this sort of calibration much easier in the future.

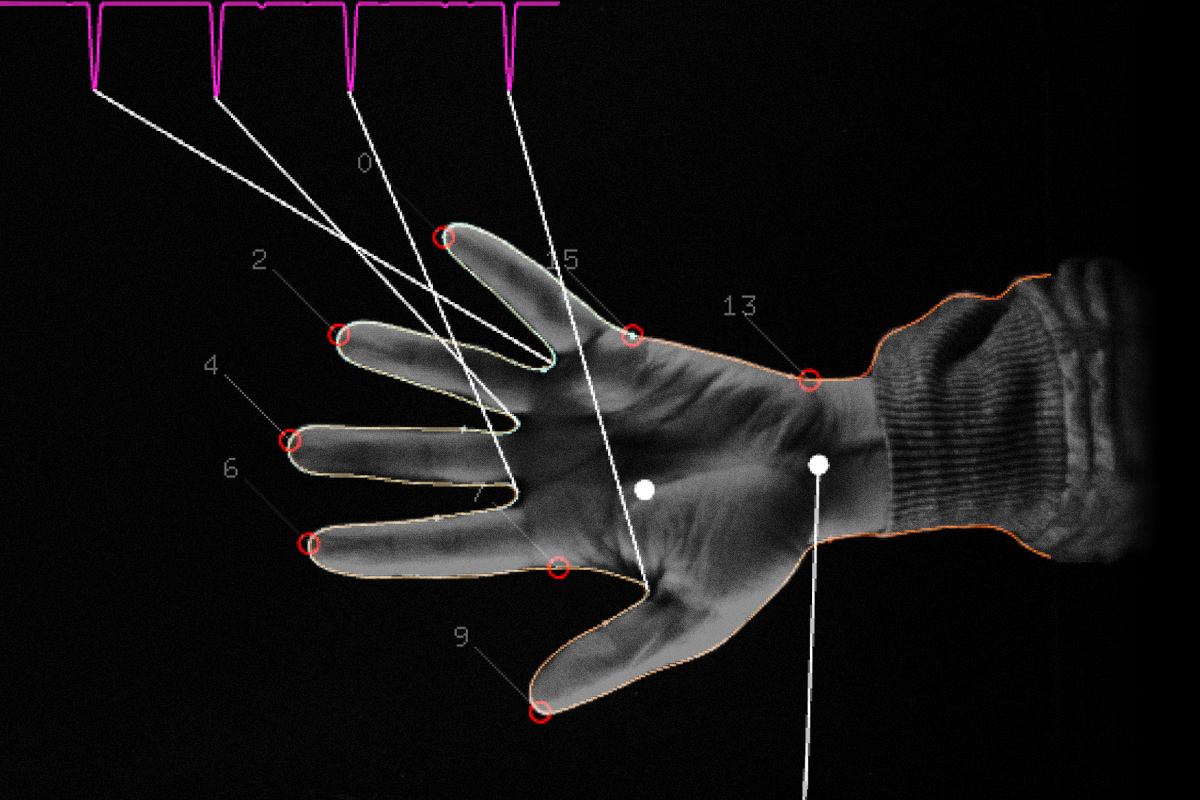

Above – The “Plus One” scene with debug view, showing the software’s resilience to finger movements.

Another core technical hurdle in the Augmented Hand Series was the development of an image-processing system which could correctly label each of the camera’s pixels with the part of the hand to which it belongs. In other words, for every pixel in the video camera’s image of the hand, we had to know, with very high certainty, which finger it belonged to. Leap Motion has certainly made great strides with their software development toolkit, and their 2.0+ SDK is arguably one of the best available products, in late 2014, for accurately representing the pose of the hand with a geometric skeleton. Nevertheless, their SDK does not address this problem at all. Simple geometric techniques for solving this problem failed badly; errors in the Leap estimation meant that some fingers were mis-identified, while others were labeled with more than one identity, and some fingers had regions with no labels at all. Instead, a solution was developed that propagated identity information using Voronoi regions, represented by pink blobs in the “debug-view” images and videos above.
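The general idea of Voronoi-based labeling can be sketched as follows: every pixel inside the hand silhouette takes the identity of its nearest seed point, where the seeds come from the projected skeleton. This is a simplified, brute-force illustration under assumed inputs, not the project’s implementation; the function `label_hand_pixels`, the boolean `mask`, and the `seeds` dictionary are all hypothetical names.

```python
from typing import Dict, List, Optional, Tuple

Pixel = Tuple[int, int]  # (row, column)


def label_hand_pixels(
    mask: List[List[bool]],
    seeds: Dict[str, List[Pixel]],
) -> List[List[Optional[str]]]:
    """Assign each foreground pixel the name of its nearest seed.

    mask  -- True where the camera sees the hand (assumed input)
    seeds -- part name -> seed pixels, e.g. projected skeleton joints
    Background pixels are left as None.
    """
    labels: List[List[Optional[str]]] = [[None] * len(row) for row in mask]
    for r, row in enumerate(mask):
        for c, inside in enumerate(row):
            if not inside:
                continue
            best_name, best_d2 = None, float("inf")
            for name, points in seeds.items():
                for sr, sc in points:
                    # Squared Euclidean distance is enough for comparison.
                    d2 = (r - sr) ** 2 + (c - sc) ** 2
                    if d2 < best_d2:
                        best_name, best_d2 = name, d2
            labels[r][c] = best_name
    return labels
```

Because each pixel receives exactly one label, a partition of this kind cannot leave a finger region unlabeled or give it two identities, which were the failure modes described above. A production system would likely use a faster distance transform or flood fill than this nested loop.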

The complete source code for the Augmented Hand Series is online, in the GitHub repository of the Frank-Ratchye STUDIO for Creative Inquiry. There is also an online image archive of “debug-view” screenshots, documenting the project’s inner workings and technical development.

For more information about the project and its ideas, as well as historical precedents, please see the project page linked below.

Project Page | Golan Levin | Chris Sugrue | Kyle McDonald

Credits:

The Augmented Hand Series (2014), its code and its associated media, by Golan Levin, Christine Sugrue and Kyle McDonald, are licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

It was commissioned by the Cinekid Festival, Amsterdam, October 2014, with support from the Mondriaan Fund for visual art. It was developed at the Frank-Ratchye STUDIO for Creative Inquiry at Carnegie Mellon University.

Concept and software development: Golan Levin, Chris Sugrue, Kyle McDonald.

Software assistance: Dan Wilcox, Bryce Summers, Erica Lazrus, Zachary Rispoli.

Article edit: Golan Levin (source)

Code repository: http://github.com/CreativeInquiry/digital_art_2014