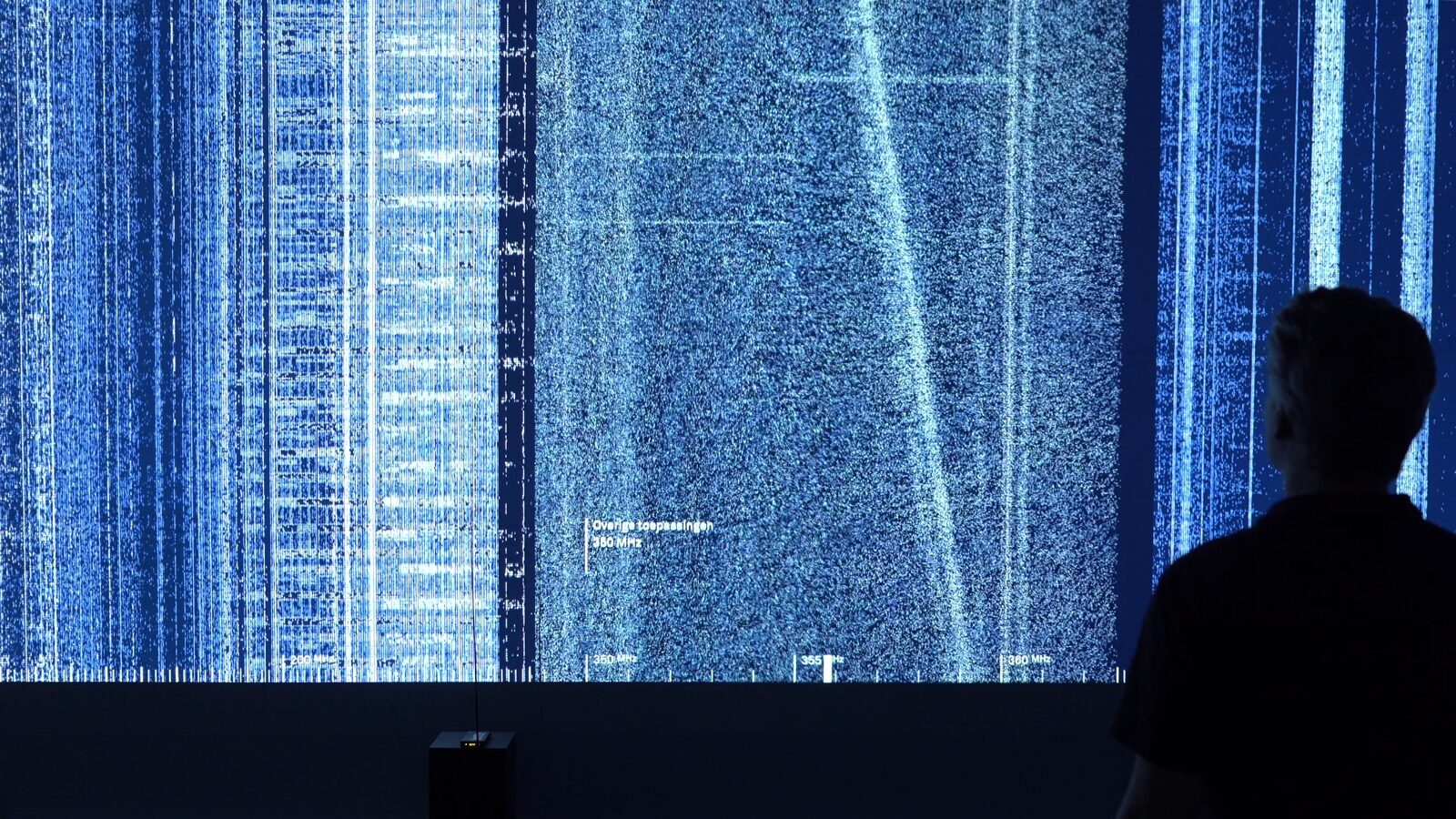

Beyond the Screen is an immersive installation that explores how algorithms look right back at us – exposing, in real time, how machine vision perceives and interprets human behaviour.

In today’s world, where screens have become as commonplace as windows, it’s easy to overlook the symbiotic, mutually shaping relationship we have with digital technology. And yet, as we look into a screen, it looks back into our eyes. Just as humans shape the direction of our technological creations, in interacting with those creations we partially internalise their mode of perception and understanding. The screens shape us, too.

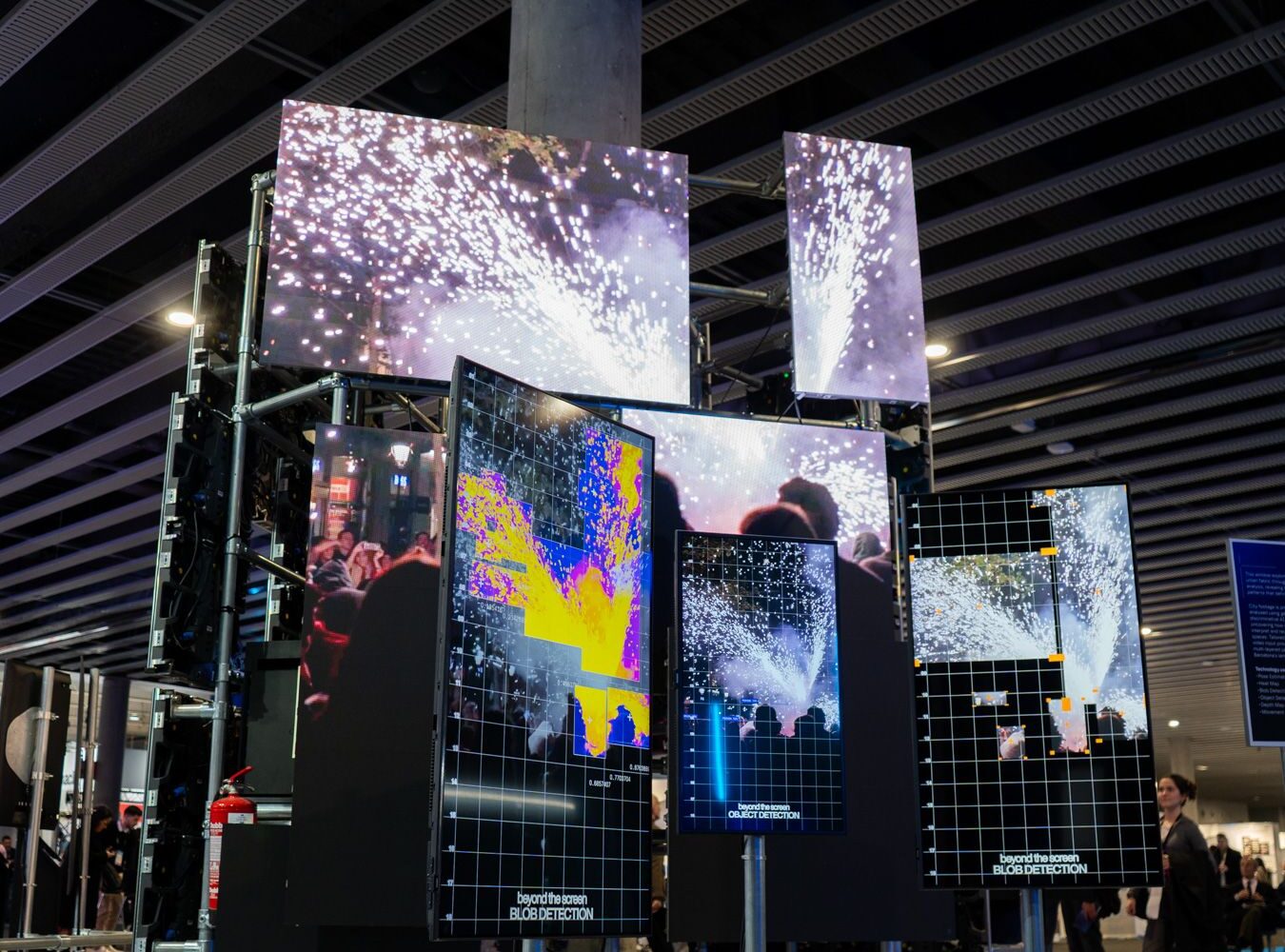

This “cyborg” existence is exactly what we sought to expose in Beyond the Screen, shown at an audiovisual and systems integration exhibition in Barcelona in 2025. By taking on the viewpoint of “machine eyes,” we wanted to explore how these technologies perceive and shape human behaviour in real time – not to render a verdict on AI, but to demystify its inner workings and foster a deeper public understanding of both the seemingly simple applications and the potential pitfalls of algorithmic surveillance.

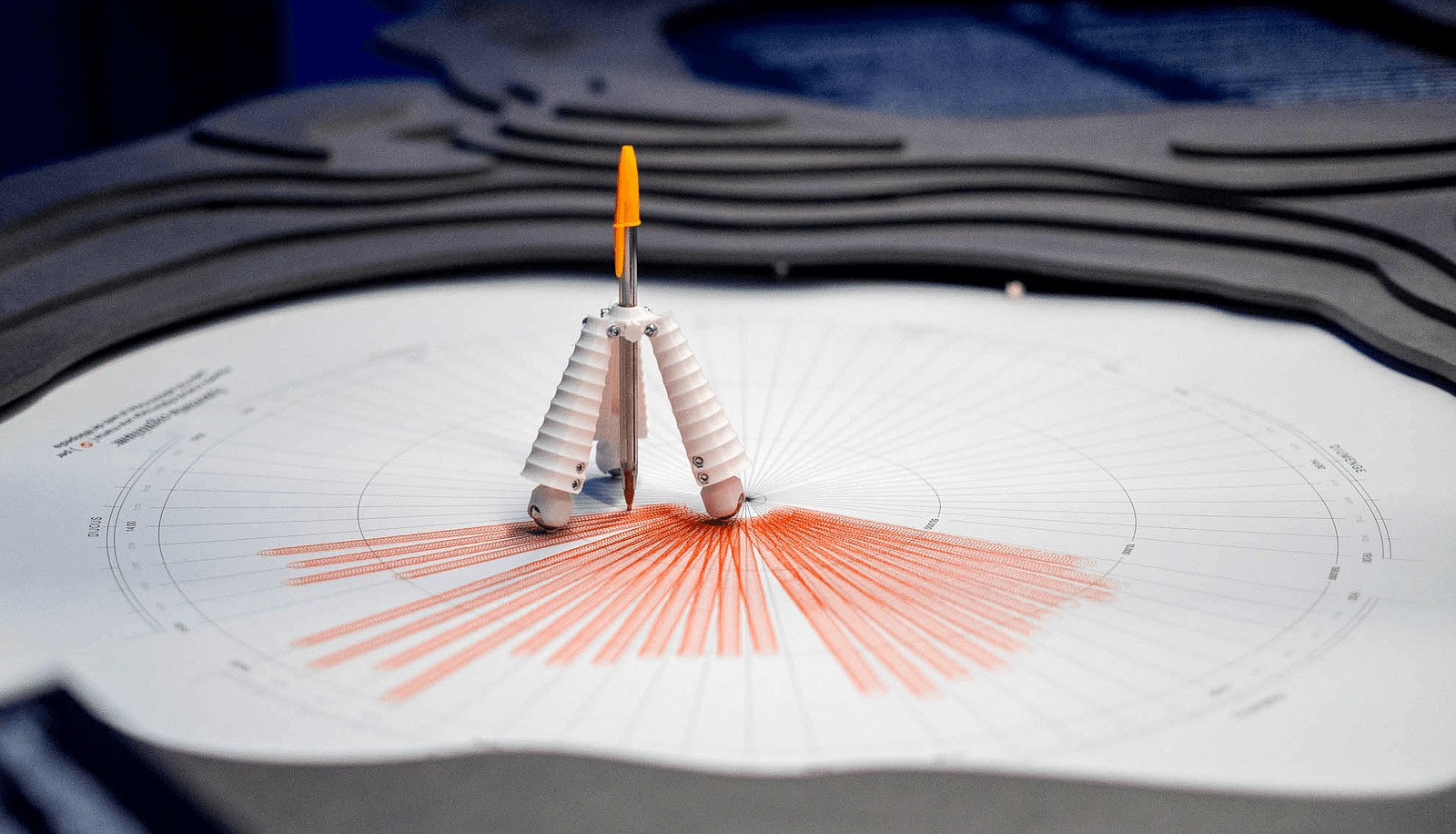

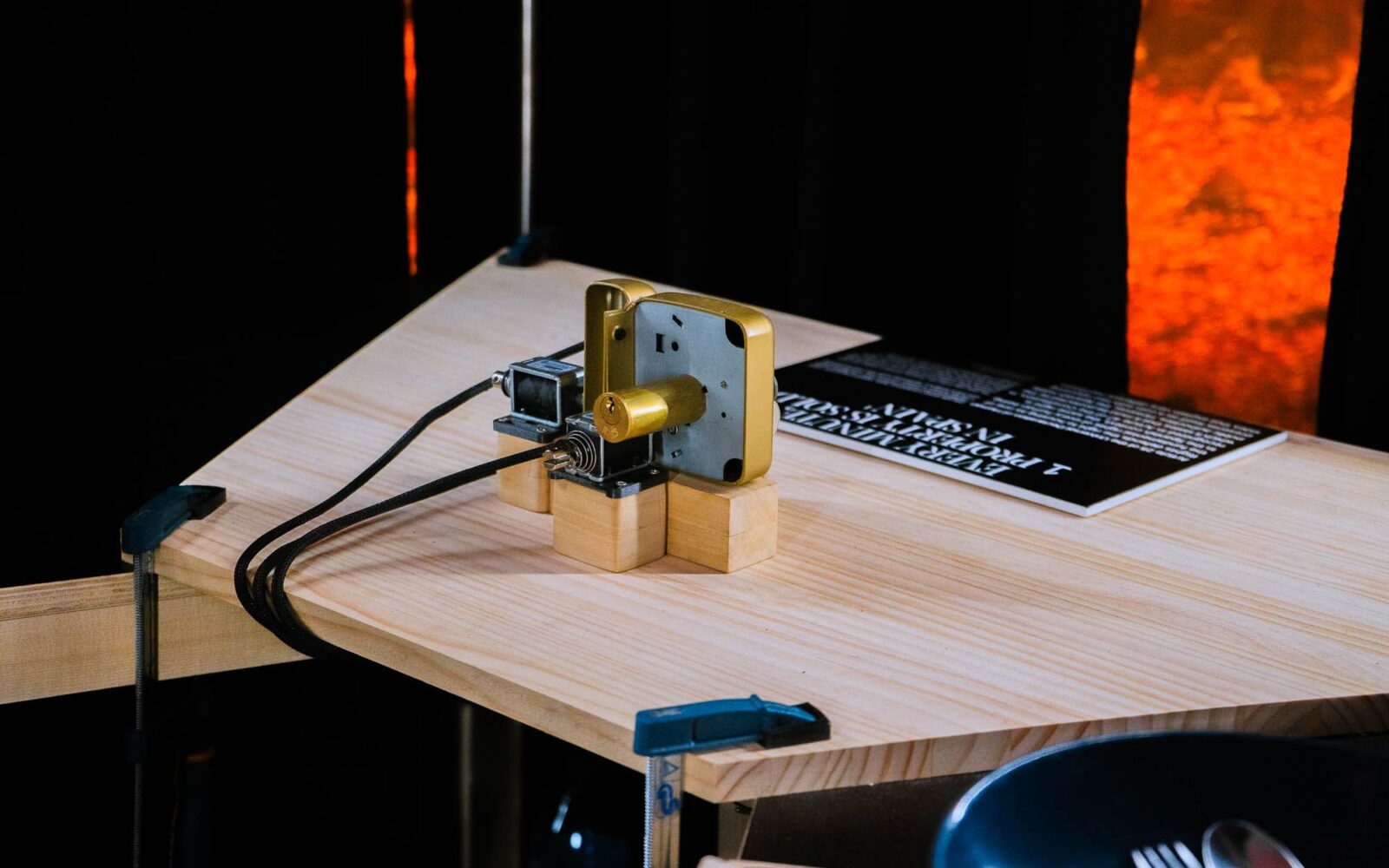

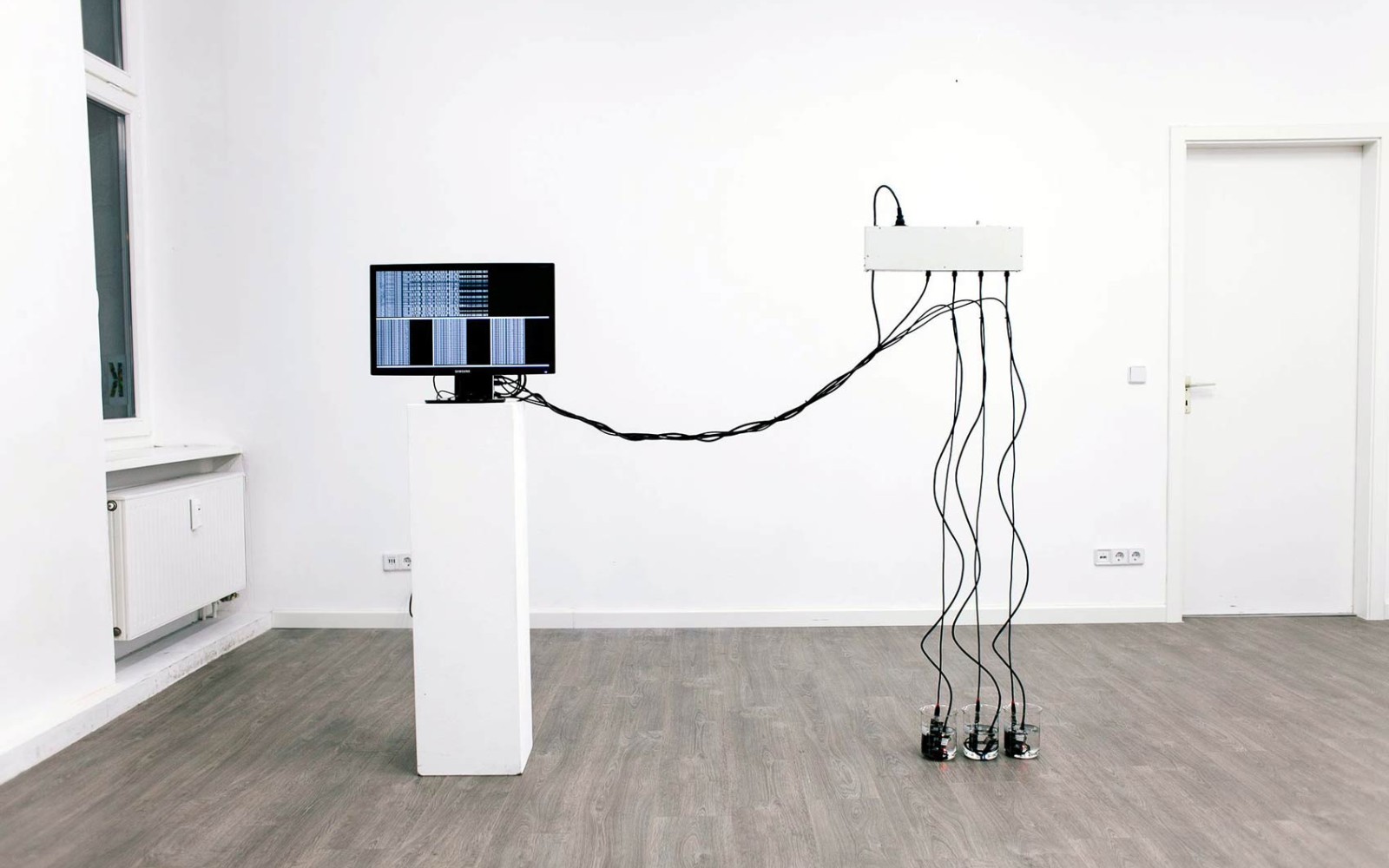

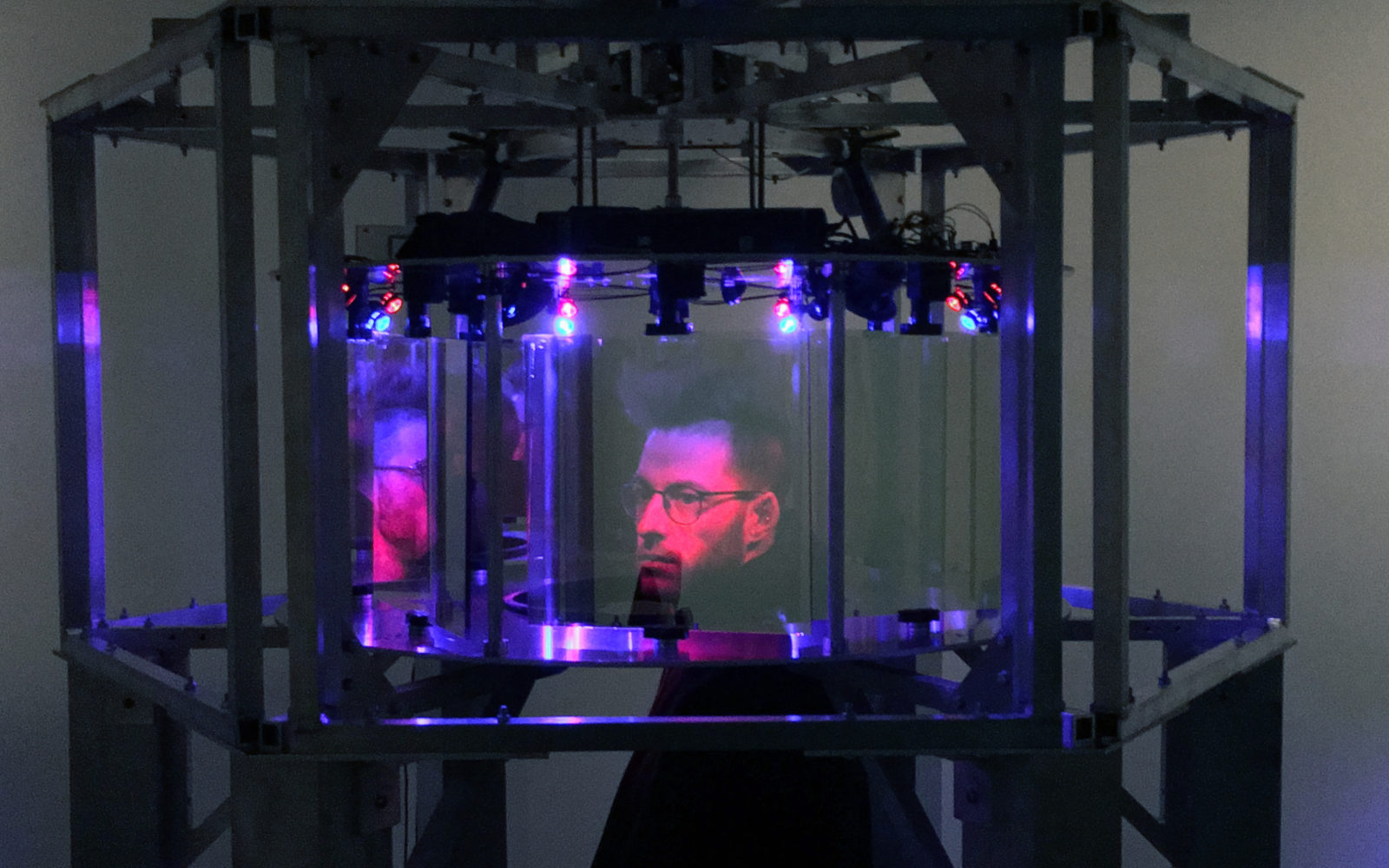

The installation is structured around three “windows,” each focusing on a different scale at which these technologies operate: individual, collective, and urban. Each of the three windows runs a distinct set of computer vision and machine learning pipelines, tailored to the scale it represents. At the individual level, the system uses landmark detection and facial recognition models to track and analyse body posture, movement, and facial features in real time. At the collective scale, object detection and people-tracking models monitor group dynamics and movement flows across shared spaces. At the urban scale, the installation draws on imagery of Barcelona processed through segmentation and classification models to demonstrate how city-wide algorithmic monitoring operates.

A core design principle throughout was transparency about the act of observation: rather than hiding the technology behind a seamless interface, the installation was deliberately built to make its computational processes legible to a non-specialist public, surfacing algorithmic outputs, classification labels, and detection overlays as part of the visual language of the piece itself. Over four days of exhibition, the installation detected 31,236 people, 8,445 phones, 22,469 backpacks, and 27,637 handbags (and 54 bananas).
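Per-class totals like these only mean something if each object is counted once, not once per video frame. We have not documented here how the installation's own counters worked, so the following is a minimal sketch of one plausible approach: it assumes an upstream tracker assigns every detection a persistent ID, and it tallies each (label, ID) pair a single time. The `Detection` record, its field names, and the sample data are hypothetical and exist only for illustration.

```python
from collections import Counter
from typing import Iterable, NamedTuple


class Detection(NamedTuple):
    """Hypothetical record emitted by a detector plus tracker, per frame."""
    frame: int      # frame index in which the object was seen
    track_id: int   # persistent ID assumed to come from a tracking stage
    label: str      # class label, e.g. "person" or "backpack"


def count_unique_objects(detections: Iterable[Detection]) -> Counter:
    """Count each tracked object once per class, however many frames it spans."""
    seen = set()
    totals = Counter()
    for det in detections:
        key = (det.label, det.track_id)
        if key in seen:
            continue  # same object seen again in a later frame
        seen.add(key)
        totals[det.label] += 1
    return totals


# Made-up sample: person 1 appears in two frames but is counted once.
sample = [
    Detection(frame=0, track_id=1, label="person"),
    Detection(frame=0, track_id=2, label="backpack"),
    Detection(frame=1, track_id=1, label="person"),
    Detection(frame=1, track_id=3, label="banana"),
    Detection(frame=2, track_id=4, label="person"),
]
totals = count_unique_objects(sample)
for label, n in sorted(totals.items()):
    print(f"{label}: {n}")
```

A count built this way is only as reliable as the tracker behind it: if a visitor leaves the frame and returns under a new ID, they are counted twice, which is one reason such figures are best read as detections, not as a headcount.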

Project Page | Domestic Data Streamers | Instagram