Directed by Evan Boehm at Nexus Interactive Arts and commissioned by The Creators Project, The Carp and the Seagull is an interactive short film about one man’s encounter with the spirit world and his fall from grace. It is a user-driven narrative that tells a single story through the prism of two connected spaces. One space is the natural world and the other is the spirit or nether world.

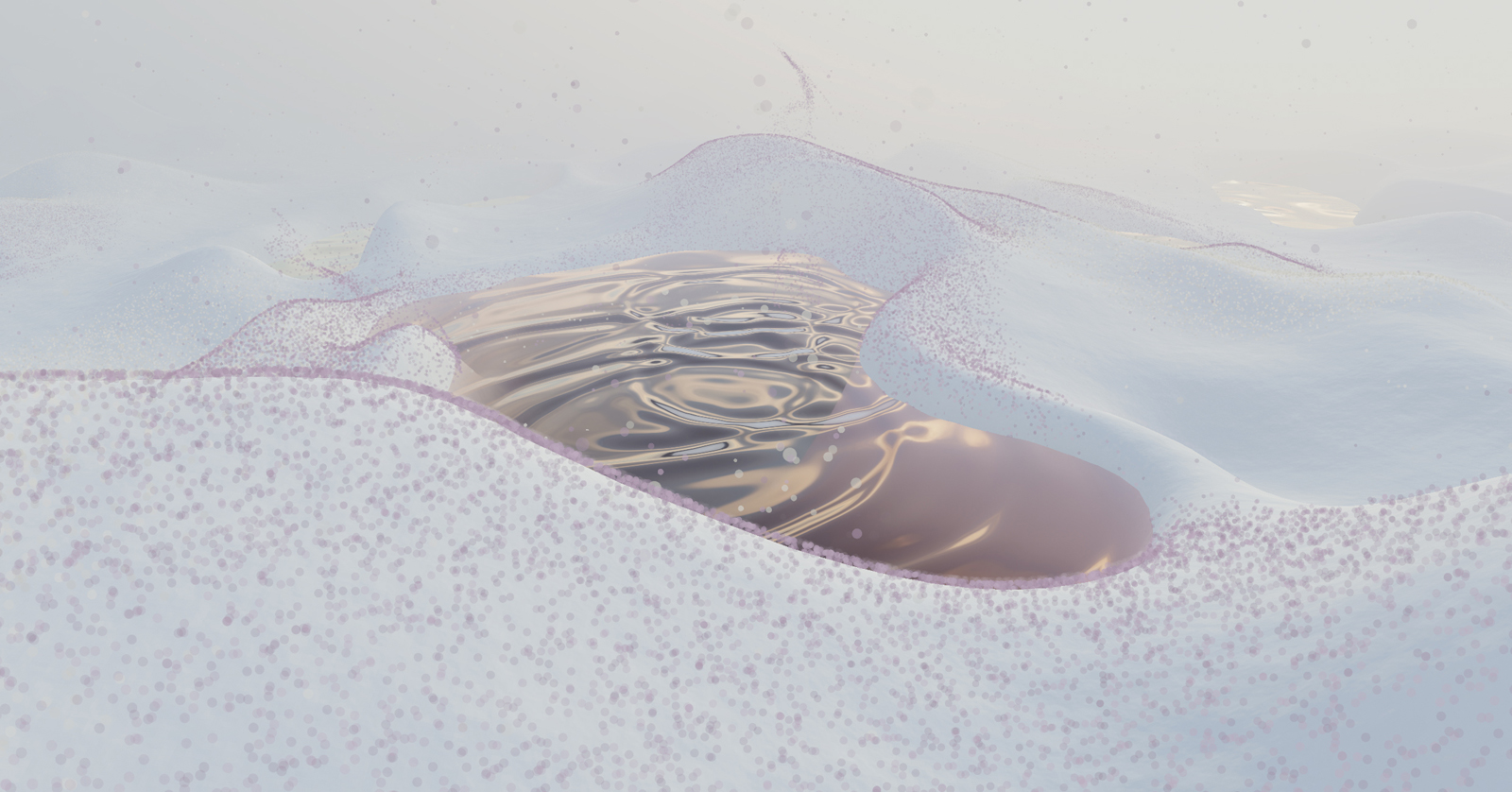

Evan first started thinking about the project during the Visual Music Collaborative held at Eyebeam back in August 2010. Then only a prototype for storytelling, the project began to evolve. He was interested to see how polygon reduction could be used as a storytelling tool. Could reducing or increasing the number of points on a model be used to drive a story forward or create an emotional response? Throughout the piece the character’s emotional state is represented by both the polygon count and the distortion of the water.

Almost two years later, Evan joined Nexus as Interactive Director and the search for funding to bring his project to life began. After a successful pitch to The Creators Project, the project finally received the funding needed not only for Evan to realise his vision but also to bring other people on board to complete it as he imagined it.

The project is an experiment in space and narrative using 3D character modelling, rigging and animation with HTML5 and WebGL, optimised for viewing in Chrome.

This film is not an authored text but about authored space. The Carp and the Seagull is an experiment that aims to explore what this means in a story that would otherwise be told through the medium of film. It’s about simple point and click, about exploration and about moving around a space. It’s about exploring the space of a story as much as the telling. – Evan Boehm, Director

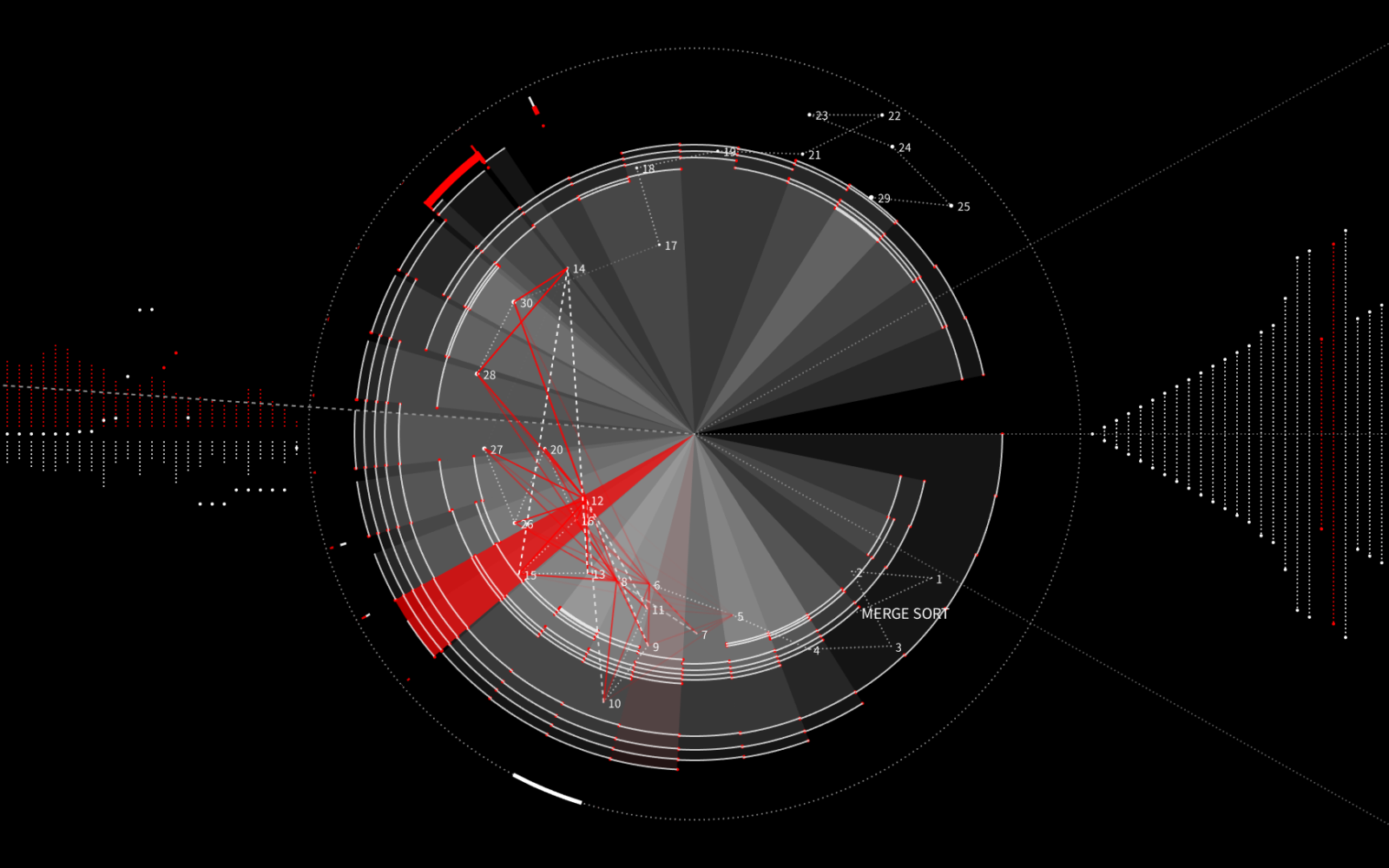

Evan created diagrams for every single scene element in the film: what happens when you click them, what happens when you turn the world, how they affect other things, and so on. They look like code diagrams but were his way of planning out everything in advance. The original idea was to hand them to the other programmers, but the project evolved more organically once they got started.

This film is rendered in WebGL (Web Graphics Library) using Three.js for the heavy lifting, relying on its render pipeline and general structure. On top of it the team built a framework specific to a four-part story, with DOM event management, goal/action management, cross fades, post-processing effects and time-based events. Evan describes it as a bit of a beast, and one that could have been done better, but a lot of things were only thought of later down the road. Each scene/character element has its own custom vertex and fragment shader. Evan says they are not complicated, but the team had to write bespoke ones for lots of the little details they wanted to add.
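The framework itself is not shown in this article, so the following is only a minimal sketch of how time-based events in such a framework might be scheduled. The `Timeline` class, its method names and the event names are all hypothetical and are not taken from the film's code.

```typescript
// Hypothetical sketch: none of these names come from the film's codebase.
type TimedEvent = { at: number; name: string; fired: boolean };

class Timeline {
  private events: TimedEvent[] = [];
  private elapsed = 0;

  // Register an event that becomes due once `at` seconds have elapsed.
  schedule(at: number, name: string): void {
    this.events.push({ at, name, fired: false });
  }

  // Advance the clock by `dt` seconds and return the names of the events
  // that became due during this step, ordered by their trigger time.
  update(dt: number): string[] {
    this.elapsed += dt;
    const due = this.events
      .filter((e) => !e.fired && e.at <= this.elapsed)
      .sort((a, b) => a.at - b.at);
    for (const e of due) {
      e.fired = true; // each event fires only once
    }
    return due.map((e) => e.name);
  }
}
```

In a render loop, `update` would be called once per frame with the frame's delta time, and the returned names dispatched to whichever scene elements care about them.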

Every object and character is self-contained and has its own logic and response system. This allows the team to control every element in the film and make adjustments until it feels or looks just right. Unlike in a traditional film, they can alter an object’s speed, multiplication, size, direction, texture, and interaction with other objects right until the end of development. As in traditional WebGL experiments, you can interact with the objects and change these variables in real time.
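As a rough illustration of what "self-contained" could mean in code, here is a small sketch in which an element owns its parameters, its update logic and its click response. The `SceneElement` class and the `ElementParams` fields are assumptions made for this example, not the film's actual classes.

```typescript
// Hypothetical names throughout; the film's real classes are not documented here.
interface ElementParams {
  speed: number;     // units per second
  direction: number; // +1 or -1 along a single axis, for simplicity
  size: number;
}

class SceneElement {
  position = 0;
  params: ElementParams;

  constructor(params: ElementParams) {
    this.params = params;
  }

  // Each element advances itself using only its own parameters.
  update(dt: number): void {
    this.position += this.params.speed * this.params.direction * dt;
  }

  // Each element decides how it reacts to a click; this one turns around.
  onClick(): void {
    this.params.direction *= -1;
  }
}

const carp = new SceneElement({ speed: 2, direction: 1, size: 1 });
carp.update(0.5);      // moves forward by 1 unit
carp.params.speed = 4; // tuned live, without touching any other element
carp.onClick();        // reverses direction
carp.update(0.5);      // moves back by 2 units
```

Because nothing outside the element reads or writes its state, a parameter such as `speed` can be changed late in development without side effects elsewhere.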

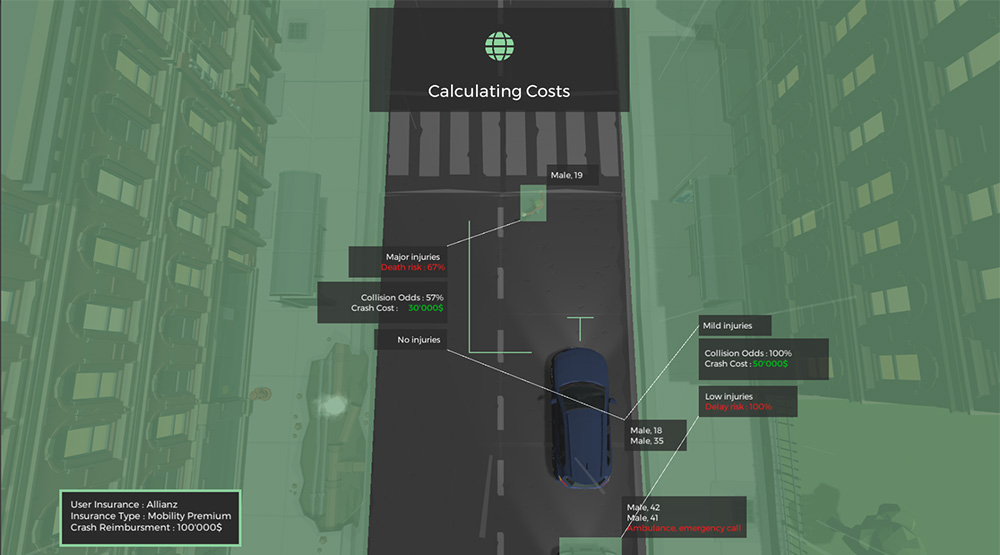

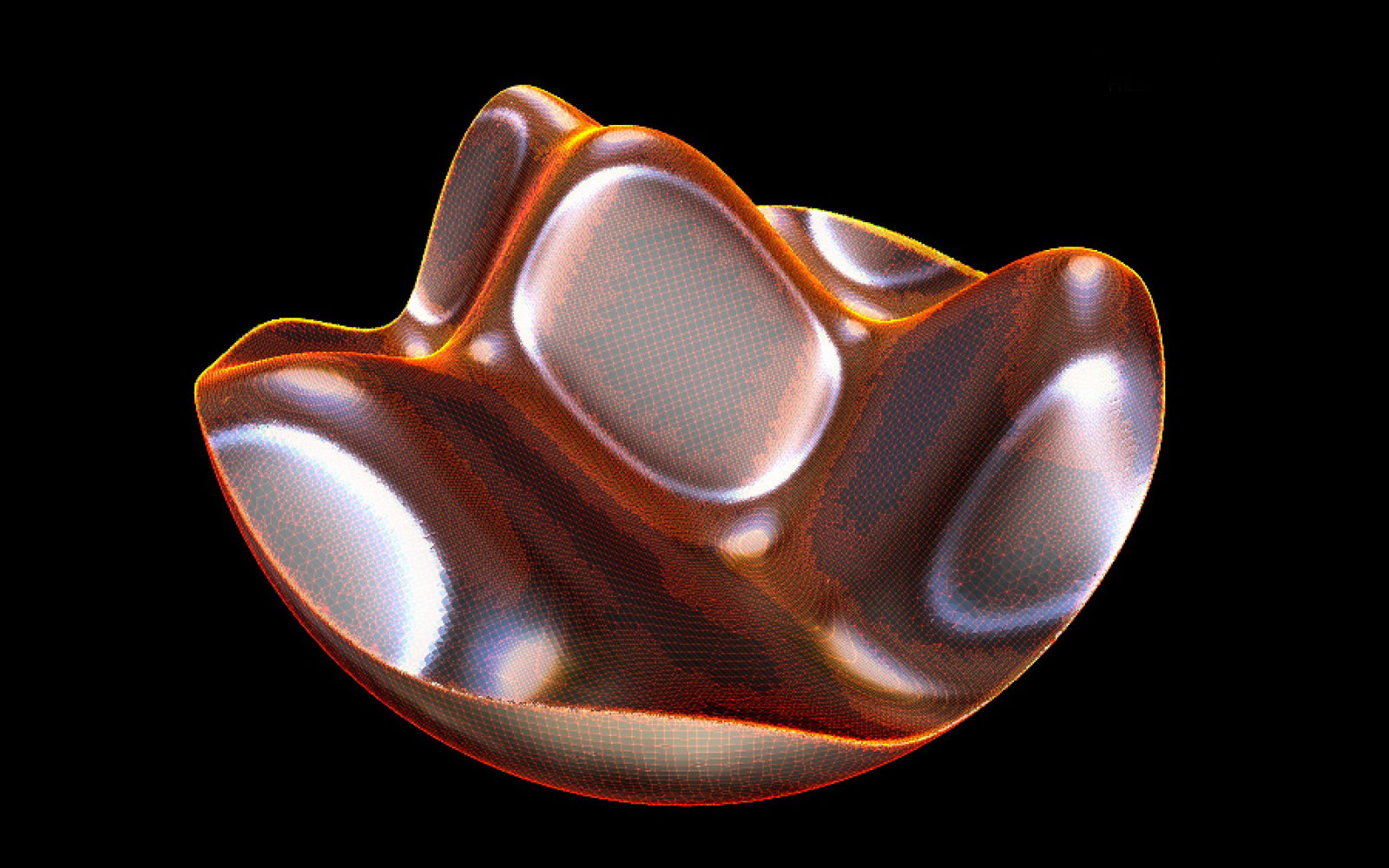

A big part of the story, and the reason it’s rendered as wireframe, is that the structure of the polygons is an emotional indicator for the characters, as in the original idea. Highly distorted polygons (done through vertex shaders) convey a more distressed emotional state. For example, Masato’s polygons become more chaotic the closer he gets to attacking the bird. The team used a mixture of pre-baked distortions out of 3D Studio Max and noise functions in the shader. They got better control through Max, but having code tell a part of the story was integral to the project.
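The film does this on the GPU in vertex shaders, which are not reproduced in this article. The sketch below shows the same idea on the CPU, assuming a single `distress` value between 0 and 1 that scales a noise offset per vertex. The function names, the `maxOffset` parameter and the sine-based pseudo-noise (a common shader idiom) are assumptions, not the team's code.

```typescript
// Cheap deterministic pseudo-noise in [-1, 1). A stand-in for a shader
// noise function; it is not the noise used in the film.
function pseudoNoise(seed: number): number {
  const s = Math.sin(seed * 12.9898) * 43758.5453;
  return (s - Math.floor(s)) * 2 - 1;
}

// Offset every vertex (an [x, y, z] array) by noise scaled by `distress`,
// where 0 means calm (no distortion) and 1 means most agitated.
function distort(vertices: number[][], distress: number, maxOffset = 0.5): number[][] {
  const amount = Math.min(Math.max(distress, 0), 1) * maxOffset;
  return vertices.map(([x, y, z], i) => [
    x + pseudoNoise(i * 3) * amount,
    y + pseudoNoise(i * 3 + 1) * amount,
    z + pseudoNoise(i * 3 + 2) * amount,
  ]);
}

const triangle = [[0, 0, 0], [1, 0, 0], [0, 1, 0]];
const calm = distort(triangle, 0);     // identical to the input
const agitated = distort(triangle, 1); // each coordinate moved by at most 0.5
```

Driving `distress` from the story state (for instance, how close a character is to an action) gives a mesh that grows more chaotic as tension rises, while pre-baked distortions would replace the noise term with authored offsets.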

The scene is rendered in multiple passes through multiple stencil masks to create the illusion of dual worlds inhabiting a single space. There are actually four worlds running in parallel, each with its own set of models and interactions. This means the scene is rendered four times per frame (natural world, nether world, shared world and hit test world). And then the objects are all tripled: one black version, one white line version and a hit test version. This means Masato and the Old Man in Chapter 1 amount to 6 models, all moving in sync across 4 worlds.
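In WebGL the stencil test runs on the GPU, per fragment, during each pass. As a simplified CPU analogy only, the sketch below composites two already-rendered worlds through a mask: wherever the mask is 1 the nether world shows through, elsewhere the natural world is kept. The function name and the use of plain greyscale numbers for pixels are assumptions made for illustration.

```typescript
// Composite two rendered worlds through a stencil mask.
// Each array holds one value per pixel; the mask holds 0 or 1.
function compositeThroughStencil(
  natural: number[],
  nether: number[],
  stencil: number[]
): number[] {
  if (natural.length !== nether.length || natural.length !== stencil.length) {
    throw new Error("all buffers must cover the same pixels");
  }
  // Where the mask is set, the nether world is visible; otherwise the natural one.
  return stencil.map((mask, i) => (mask === 1 ? nether[i] : natural[i]));
}

// A single row of four pixels: the right half is "seen through" to the nether world.
const row = compositeThroughStencil(
  [10, 10, 10, 10],
  [200, 200, 200, 200],
  [0, 0, 1, 1]
);
// row is [10, 10, 200, 200]
```

The cost described above follows directly from this structure: every world is a full render of its own models, so four worlds mean four scene renders per frame before any compositing happens.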

Because the story takes place across the two main worlds (natural world, nether world), they had to create two separate but connected 3D spaces and then render them through separate buffers. For example, Masato and his spirit sit at the same location but are only visible when viewed from particular angles. This was not too difficult when rendering polygonal objects, but got tricky when rendering polygons plus image files (the fog) plus particle systems. The fact that some objects move between worlds (at the end of the film) made it even more difficult. In addition, they had another two worlds rendered just to calculate when items were being interacted with.
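The article does not say how the hit test worlds are read back. One common way to use an off-screen "hit test" render is colour-ID picking: each interactive object is drawn in a flat colour that encodes its ID, and the pixel under the cursor is decoded back into that ID. The sketch below assumes that technique, an RGBA byte buffer like the one a pixel read-back returns, and ID 0 reserved for "nothing hit". All names are hypothetical.

```typescript
// Pack an object ID (0 to 2^24 - 1) into red, green and blue bytes.
function idToColor(id: number): [number, number, number] {
  return [(id >> 16) & 0xff, (id >> 8) & 0xff, id & 0xff];
}

// Recover the object ID from the three colour bytes.
function colorToId(r: number, g: number, b: number): number {
  return (r << 16) | (g << 8) | b;
}

// Look up which object was drawn at pixel (x, y) of the hit test buffer.
// `pixels` is laid out as RGBA bytes, row by row; `width` is in pixels.
function pick(pixels: Uint8Array, width: number, x: number, y: number): number {
  const i = (y * width + x) * 4; // 4 bytes per pixel
  return colorToId(pixels[i], pixels[i + 1], pixels[i + 2]);
}

// A 2 x 1 buffer: empty background on the left, object 70000 on the right.
const [r, g, b] = idToColor(70000); // [1, 17, 112]
const hitBuffer = new Uint8Array([0, 0, 0, 255, r, g, b, 255]);
const leftHit = pick(hitBuffer, 2, 0, 0);  // 0, nothing under the cursor
const rightHit = pick(hitBuffer, 2, 1, 0); // 70000
```

A buffer like this is never shown to the viewer, which is why it needs its own set of models: the hit test version of an object only has to cover the right pixels in the right colour.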

The models and animation come straight out of Nexus’ studios and are processed through a Python-based pipeline before being integrated into the scene as JavaScript objects (JSON). This integrated workflow was critical to ensure that modelling, animation and code-based development ran smoothly together, creating a process where the team could live preview and react accordingly. For sound, the team used the WebKit Web Audio API to manage and play all the sound files. Music from Plaid is loaded and triggered through a custom WebKit audio manager.
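The JSON layout produced by the pipeline is not described here, so the following sketch invents a very small one purely for illustration: a `name`, a flat `vertices` array of x, y, z values and a flat `faces` array of triangle indices. It shows the kind of validation a loader might run before handing exported data to the renderer. The `ModelData` shape and `parseModel` function are hypothetical.

```typescript
// Hypothetical export format; the film's real JSON schema is not documented here.
interface ModelData {
  name: string;
  vertices: number[]; // flat list: x0, y0, z0, x1, y1, z1, ...
  faces: number[];    // flat list of vertex indices, three per triangle
}

// Parse an exported model and reject data that could not be rendered.
function parseModel(json: string): ModelData {
  const data = JSON.parse(json);
  if (
    typeof data.name !== "string" ||
    !Array.isArray(data.vertices) ||
    !Array.isArray(data.faces)
  ) {
    throw new Error("model is missing name, vertices or faces");
  }
  if (data.vertices.length % 3 !== 0) {
    throw new Error("vertices must be x, y, z triples");
  }
  if (data.faces.length % 3 !== 0) {
    throw new Error("faces must be triangles");
  }
  const vertexCount = data.vertices.length / 3;
  for (const index of data.faces) {
    const valid =
      typeof index === "number" &&
      Math.floor(index) === index &&
      index >= 0 &&
      index < vertexCount;
    if (!valid) {
      throw new Error("face refers to a vertex that does not exist");
    }
  }
  return { name: data.name, vertices: data.vertices, faces: data.faces };
}

const model = parseModel(
  '{"name":"seagull","vertices":[0,0,0,1,0,0,0,1,0],"faces":[0,1,2]}'
);
// model.vertices.length / 3 is 3 vertices, forming one triangle
```

Checks like these catch export problems at load time, which matters in a workflow where artists re-export models often and expect to preview them immediately.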

Writing a new story from scratch with the specific goal of making it a 3D interactive film is my next project. There was so much to explore with this, in so little time, that I would like another go at it. And of course, reuse a lot of the framework in the process.

To find out more about the project see The Creators Project.

—

Evan Boehm is a technology art director, an animator and a coder. With a background in computer engineering and animation, Evan works at the intersection of technology, moving image and digital installation. He combines his interests in narrative structures and cutting-edge technology to construct new forms of storytelling, blurring the line between the virtual and the real.

Nexus Interactive Arts is an independent production company and animation studio based in London, with a worldwide reputation for creative storytelling across a range of media. Nexus Interactive Arts is a home for directors and artists who work in interactive moving image. It shares the same values for storytelling and design as the Nexus film division, but with new technology being a more equal partner.

The Creators Project Digital Gallery is the new home for artworks from various genres which are conceived and designed to be experienced primarily online. On an ongoing basis, we’ll be introducing new works that range from interactive web-based art and apps to games, music videos, and short films. We’re kicking off the Digital Gallery with five works across mediums of film, music, and interactive websites, giving you an idea of the range of creativity you’ll see there from now on.