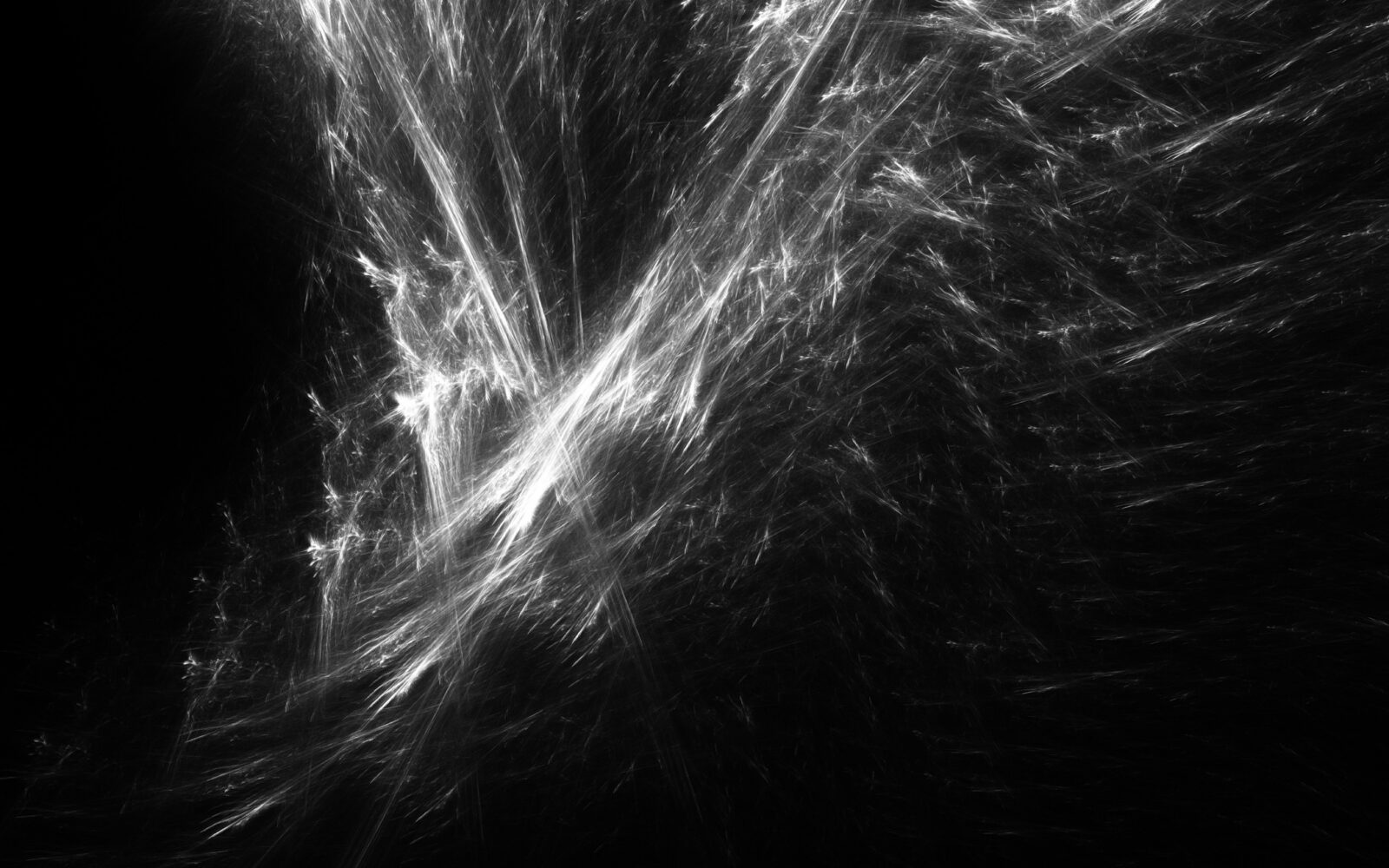

Habsburg AI Portrait Studies is a series of cloth-printed generative portrait images produced using a bespoke diffusion model recursively retrained on its own output. This autophagous training causes the model to collapse on itself, constricting its distribution around the mean and forcing it to produce images in an amplified version of its default style. The images not only demonstrate the potential retrograde effects of incorporating synthetic data into model training but also reveal the average aesthetic character of the target model, Midjourney V5, by exaggerating the formal properties it characteristically reproduces.

The artwork was produced as part of a research-through-design project with two aims: to respond to the implications of growth in model parameter counts outpacing growth in available training data, and to investigate the particular aesthetic character of popular synthetic image generation models. The project produced Aesthetic Distillation, a software application for recursive training that amplifies the fundamental aesthetic characteristics of a target model, making them amenable to formalist analysis. In the Habsburg AI Portrait Studies series, this distilled version of Midjourney was used to explore and articulate how these qualities manifest in the target model’s representations of human subjects. The work accordingly takes its name from the medieval Central European dynasty with a famed legacy of inbreeding. In their physical manifestation, the images were printed on banners and installed such that they appear to be slowly collapsing under their own weight.

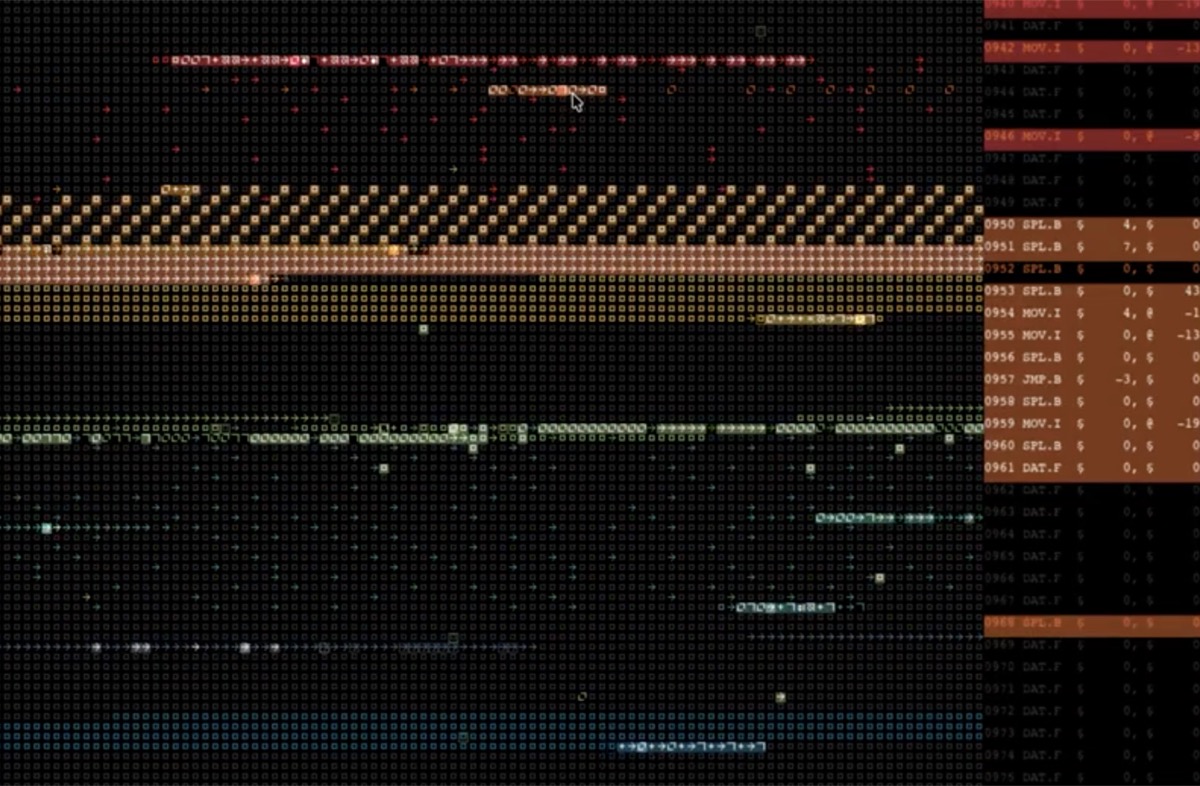

The images in the Habsburg AI Portrait Studies series were generated by prompting a bespoke model, which was developed using a custom training script called Aesthetic Distillation. The software was written in Python and utilises the Hugging Face Diffusers library to manipulate and train the model. Because Midjourney is a proprietary platform, its model is not accessible to researchers. The base model used in this work was therefore an open-source clone of Midjourney.

The Aesthetic Distillation method works by recursively fine-tuning the target model: in each round, the model is trained on a dataset of images generated by the model produced in the previous round. After each round of training, the seed dataset is regenerated with the newly trained model and used to train that model in the next round. Finally, once training was complete, ComfyUI was used with a custom workflow to generate the exhibition images from the resulting model.
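The recursive structure described above can be sketched in a few lines of Python. This is a minimal illustration, not the project's training script: the function names `recursive_finetune`, `make_toy_generate` and `toy_fine_tune` are hypothetical, and a one-dimensional Gaussian stands in for the diffusion model so that the loop runs without a GPU. In the actual work, the `generate` step would presumably wrap a Diffusers pipeline and the `fine_tune` step a fine-tuning routine; both are assumptions here.

```python
import random
import statistics
from typing import Callable, List, Tuple

# Toy stand-in for a generative model: (mean, standard deviation).
ToyModel = Tuple[float, float]


def recursive_finetune(
    model: ToyModel,
    generate: Callable[[ToyModel], List[float]],
    fine_tune: Callable[[ToyModel, List[float]], ToyModel],
    rounds: int,
) -> List[ToyModel]:
    """Train each round on output from the previous round's model."""
    history = [model]
    for _ in range(rounds):
        dataset = generate(model)          # regenerate the dataset with the latest model
        model = fine_tune(model, dataset)  # train the next model on that output
        history.append(model)
    return history


def make_toy_generate(n_samples: int, seed: int) -> Callable[[ToyModel], List[float]]:
    """Build a sampler that draws a small dataset from the toy model."""
    rng = random.Random(seed)

    def generate(model: ToyModel) -> List[float]:
        mean, std = model
        return [rng.gauss(mean, std) for _ in range(n_samples)]

    return generate


def toy_fine_tune(model: ToyModel, dataset: List[float]) -> ToyModel:
    """Refit the toy model to its own samples (maximum-likelihood estimate)."""
    return (statistics.fmean(dataset), statistics.pstdev(dataset))


history = recursive_finetune(
    (0.0, 1.0), make_toy_generate(n_samples=20, seed=0), toy_fine_tune, rounds=200
)
print(f"initial spread: {history[0][1]:.3f}, final spread: {history[-1][1]:.3g}")
```

In this toy setting, each refit on a small sample tends to underestimate the spread, so over many rounds the distribution narrows around its mean. This is only an analogy for the collapse described above, not a model of how it arises in a diffusion network.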


Martin Disley

Institute for Design Informatics, University of Edinburgh, Edinburgh, UK