Autolume is a no-code visual synthesizer developed by the Metacreation Lab. It enables artists to train and explore their own models with small datasets, giving them direct creative control and the ability to perform live with AI-generated imagery.

The system covers the full workflow, from data preprocessing and model training to real-time latent space navigation and output upscaling. By making the artistic potential of generative AI accessible to non-technical users, Autolume supports a hands-on workflow that fosters creative ownership. It also integrates with the OSC (Open Sound Control) protocol for audio-reactive visuals, making it a powerful tool for live performance.
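To give a sense of what OSC control looks like on the wire, here is a minimal sketch that encodes a single-float OSC 1.0 message using only the Python standard library. The address `/autolume/latent_speed`, the host, and the port are hypothetical placeholders; this page does not document the addresses Autolume actually listens on.

```python
import socket
import struct


def _osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 requires."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_osc_float(address: str, value: float) -> bytes:
    """Encode one OSC message: address, type tag ',f', big-endian float32."""
    return (
        _osc_pad(address.encode("ascii"))
        + _osc_pad(b",f")
        + struct.pack(">f", value)
    )


def send_osc_float(host: str, port: int, address: str, value: float) -> None:
    """Send the encoded message as a single UDP datagram."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(encode_osc_float(address, value), (host, port))


# Hypothetical address: the real parameter names are an assumption here.
packet = encode_osc_float("/autolume/latent_speed", 0.5)
```

In an audio-reactive setup, a sound analysis tool would call something like `send_osc_float("127.0.0.1", 9000, "/autolume/latent_speed", level)` once per analysis frame, with `level` derived from the audio signal.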

Autolume is built on Generative Adversarial Networks (GANs) and provides a controllable artistic workflow. Key features include:

- Model Training: Train models from scratch or resume from a checkpoint, with support for square and non-square datasets. Augmentation techniques enable training with small datasets.

- Latent Space Projection: Project an image or text embedding into the latent space for unique artistic exploration.

- Model Mixing: Blend two trained models into a new one, combining their visual features.

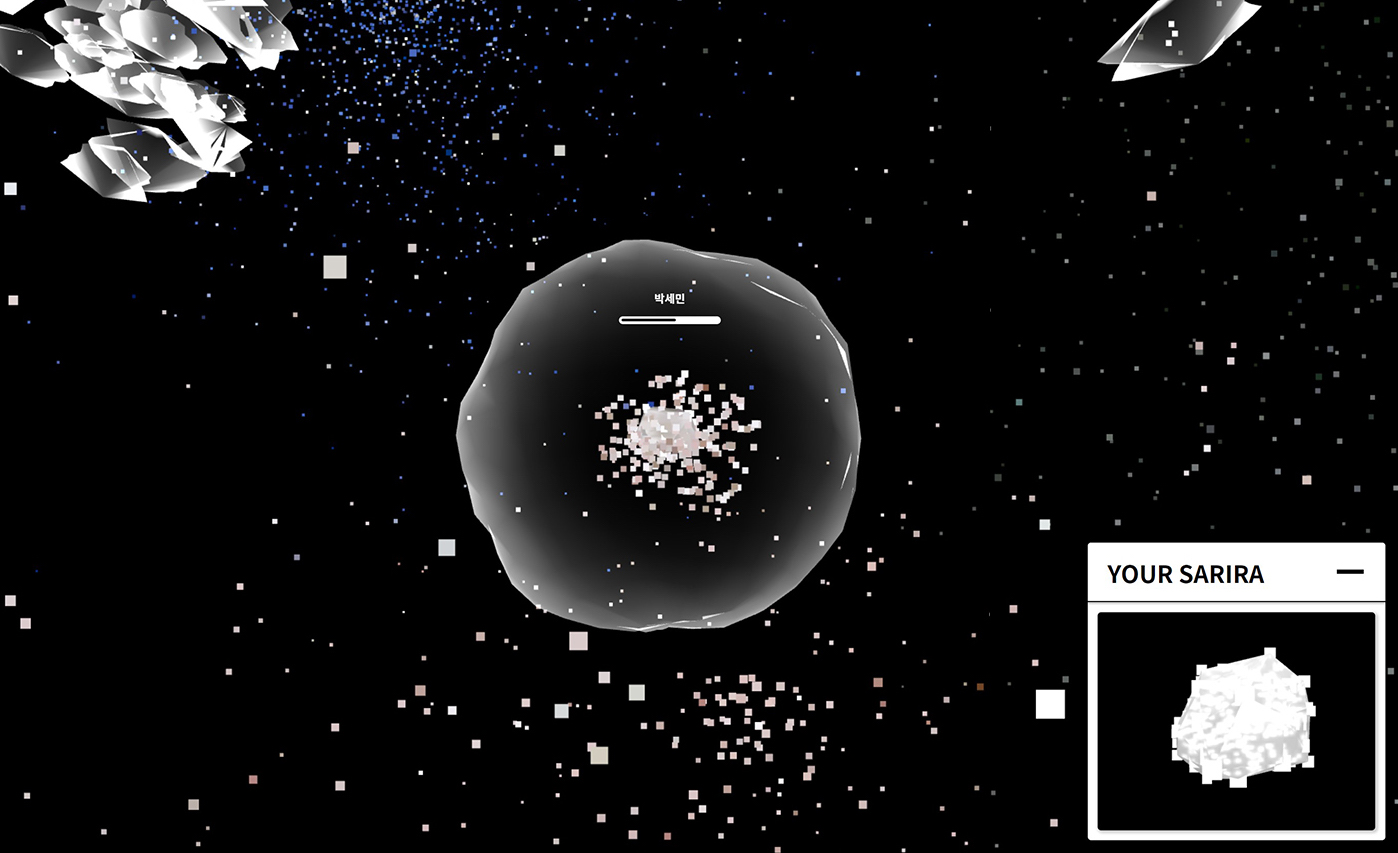

- Real-time Generation: The Autolume-live module supports real-time latent space exploration and control of network parameters via OSC, for audio-reactive and other interactive works.

- Super-resolution: Upscale images and videos with a dedicated module for high-resolution output.

- Hardware/Software Details: Built on GANs, Autolume supports interactive applications through OSC integration.
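Two of the ideas in the list above, latent space exploration and model mixing, can be illustrated with a short sketch. It is not Autolume's implementation: spherical interpolation is a common way to move between latent vectors, and linear weight blending is only one possible way to mix models. The 512-dimensional latent size, the function names, and the toy parameter dictionaries are all assumptions made for illustration.

```python
import math
import random


def random_latent(dim, seed):
    """Sample a latent vector from a standard normal distribution."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def slerp(a, b, t):
    """Spherically interpolate between latent vectors a and b for t in [0, 1]."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    cos_omega = sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)
    omega = math.acos(max(-1.0, min(1.0, cos_omega)))
    if omega < 1e-6:
        # Nearly parallel vectors: fall back to linear interpolation.
        return [(1.0 - t) * x + t * y for x, y in zip(a, b)]
    wa = math.sin((1.0 - t) * omega) / math.sin(omega)
    wb = math.sin(t * omega) / math.sin(omega)
    return [wa * x + wb * y for x, y in zip(a, b)]


def blend_weights(model_a, model_b, alpha):
    """Linearly blend two models' parameters, given as name -> list of floats."""
    if model_a.keys() != model_b.keys():
        raise ValueError("models must share the same parameter names")
    return {
        name: [(1.0 - alpha) * wa + alpha * wb
               for wa, wb in zip(model_a[name], model_b[name])]
        for name in model_a
    }


# A ten-step path between two random latents (assumed dimension: 512).
z0 = random_latent(512, seed=1)
z1 = random_latent(512, seed=2)
path = [slerp(z0, z1, i / 9) for i in range(10)]

# Toy "models" with a single parameter tensor each, blended half and half.
mixed = blend_weights({"conv1": [0.0, 2.0]}, {"conv1": [1.0, 4.0]}, alpha=0.5)
```

Each vector in `path` would be fed to the generator to render one frame. In a live setting, `t` could be driven by an incoming control value instead of a fixed step count.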

See Autolume in action in these projects:

- A generative AI artwork combining AI-driven music and visuals to reflect on dystopia and algorithmic autonomy.

- A real-time audiovisual improvisation in which artists collaborate with AI agents in sound and visuals.

- A live audiovisual performance co-created by a calligrapher, a generative AI system, and a sound artist through intricate audiovisual feedback loops.

- Improvised, audio-reactive visual works that artists created with Autolume by processing stills and video loops.
