Organised and led by Madeline Gannon with assistance from Huanyu Li, Improvisational Machines is a workshop that brings live coding, dynamic interfaces, and flexible workflows into industrial robotics. The workshop was held in June 2025 in Barcelona at the Institute for Advanced Architecture of Catalonia with the first-year students in the Master in Robotics & Advanced Construction program.

Over the course of four days, Madeline taught a group of students how to bypass standard tools for programming robots and build their own workflows from scratch. They focused on how to harness real-time communication with their two one-tonne robots for rapid experimentation and creative flow. The workshop also included a guest lecture from live coder extraordinaire Char Stiles, who set the tone for building low-level tools as a path towards creative discovery. On the final day, the workshop concluded with a speed project that harnessed the students’ newly created flexible workflows to avoid programming pain points, iterate with rapid trial-and-error, or unlock an interactive experience between people and their big, giant robots.

The workshop covered topics including an introduction to live coding principles, an overview of tools & frameworks for real-time robot control, strategies for improvisational workflows with robots, safety considerations & best practices for dynamic environments, and hands-on coding exercises & experimentation. By the end, the students understood the limitations of traditional industrial robot programming, learnt live coding techniques for real-time robot control, explored workflows that encourage rapid prototyping and iteration, and implemented safety best practices.

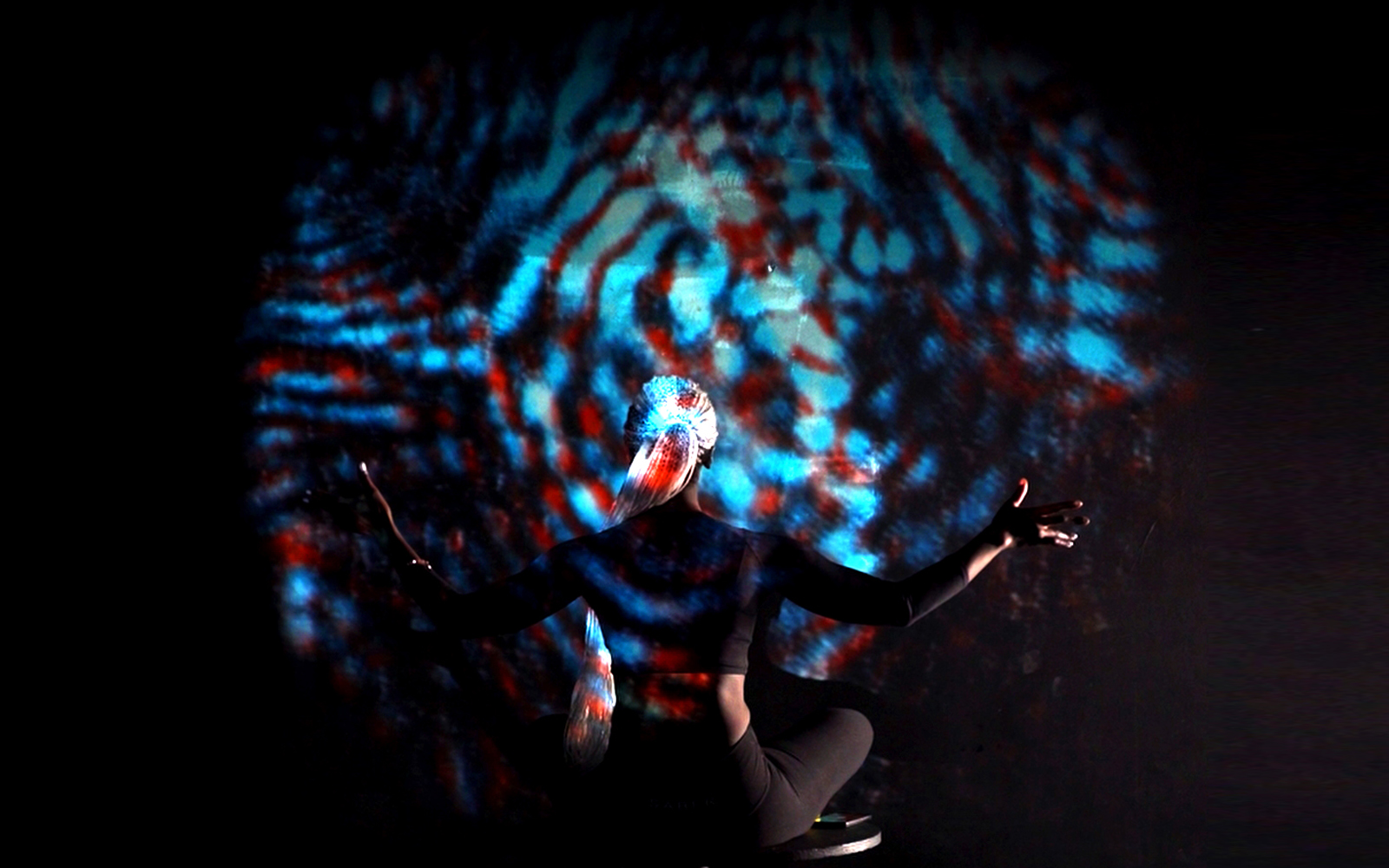

The workshop was inspired by the tools Madeline had to build for Issey Miyake at Paris Fashion Week back in January. This project was a two-week sprint in which the team could only finalize the creative decisions the day before the show, so having flexible, open-ended tools to program these rigid machines was paramount.

To learn more about this workshop, see github.com/madelinegannon/improvisational_machines. For more information about Madeline Gannon and her work, see atonaton.com.

Group 1: Reflection

| Team Members | |
| --- | --- |
| Carlos José Larrain Lihn (instagram linkedin) Javier Albo Guijarro (instagram linkedin) | Marianne Weber (instagram linkedin) Elizabeth Frias Martinez (instagram linkedin) |

Reflection is an interactive installation where two robots create immersive geometry using mirrors, lasers, and smoke machines. The law of reflection states that the angle of incidence is equal to the angle of reflection: when light hits a mirror, it bounces off at the same angle it came in at. The challenges were precise coordination between both robotic arms to align the mirrors and laser beam, and cross-platform communication, i.e. being able to communicate from Python to ABB RobotStudio while controlling both robots. Finally, the toolpath was created in Grasshopper, including the adjustments required for precise movement.
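The law of reflection can be written in vector form as r = d − 2(d·n)n, where d is the incoming ray direction and n is the mirror's unit normal. The snippet below is a minimal sketch of that formula, not the group's actual code; the function names and the example vectors are illustrative assumptions.

```python
import math


def reflect(direction, normal):
    """Reflect an incoming ray direction off a mirror: r = d - 2(d.n)n."""
    length = math.sqrt(sum(c * c for c in normal))
    n = [c / length for c in normal]  # the formula needs a unit normal
    d_dot_n = sum(d * c for d, c in zip(direction, n))
    return [d - 2.0 * d_dot_n * c for d, c in zip(direction, n)]


def angle_to_normal(vector, normal):
    """Unsigned angle in degrees (0 to 90) between a ray and the mirror normal."""
    dot = abs(sum(v * c for v, c in zip(vector, normal)))
    norms = math.sqrt(sum(v * v for v in vector)) * math.sqrt(
        sum(c * c for c in normal)
    )
    return math.degrees(math.acos(min(1.0, dot / norms)))


# Hypothetical setup: a laser travelling diagonally downwards onto a mirror
# lying flat, so the mirror normal points straight up (+Z).
incoming = [1.0, 0.0, -1.0]
mirror_normal = [0.0, 0.0, 1.0]
outgoing = reflect(incoming, mirror_normal)

angle_in = angle_to_normal(incoming, mirror_normal)
angle_out = angle_to_normal(outgoing, mirror_normal)
```

Here the beam leaves along `[1.0, 0.0, 1.0]`, and both `angle_in` and `angle_out` come out at about 45 degrees. Chaining this calculation from one mirror to the next is one way to predict where a beam should land before moving a robot.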

The components included an ABB IRB 6700 robotic arm, mirrors, a laser pointer and a fog machine. The software was Rhino 3D with Grasshopper, RobotStudio, TouchOSC and Python.
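TouchOSC sends Open Sound Control (OSC) messages, typically over UDP. As a rough illustration of what arrives on the Python side, here is a minimal sketch that decodes a single OSC message carrying float or integer arguments using only the standard library. The address `/robot/1/speed` is a hypothetical example, and the write-up does not describe how the group actually wired TouchOSC into their pipeline.

```python
import struct


def _read_padded_string(data, offset):
    """Read a null-terminated OSC string padded to a multiple of 4 bytes."""
    end = data.index(b"\x00", offset)
    text = data[offset:end].decode("ascii")
    next_offset = (end + 4) & ~3  # skip the terminator and padding
    return text, next_offset


def decode_osc(data):
    """Decode one OSC message into (address, [arguments])."""
    address, offset = _read_padded_string(data, 0)
    type_tags, offset = _read_padded_string(data, offset)
    if not type_tags.startswith(","):
        raise ValueError("missing OSC type tag string")
    args = []
    for tag in type_tags[1:]:
        if tag == "f":  # 32-bit big-endian float
            (value,) = struct.unpack_from(">f", data, offset)
        elif tag == "i":  # 32-bit big-endian integer
            (value,) = struct.unpack_from(">i", data, offset)
        else:
            raise ValueError(f"unsupported OSC type tag: {tag}")
        offset += 4
        args.append(value)
    return address, args


# A hand-built packet, as a fader mapped to a hypothetical address might send it.
packet = b"/robot/1/speed\x00\x00" + b",f\x00\x00" + struct.pack(">f", 0.5)
address, args = decode_osc(packet)
```

In practice the bytes would come from a UDP socket (`socket.recvfrom`) rather than a hand-built packet, and a maintained OSC library would handle bundles and the remaining argument types.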

This workshop, guided by Madeline Gannon, enriched our technical and conceptual understanding of human-machine interaction. We learned how robotics, sound, and light can come together to create an emotional and immersive experience using reflection as the main focus of the exhibition.

Group 2: HearMeOut

| Team Members | |
| --- | --- |
| Lauren Deming (linkedin) Santosh Prabhu Shenbagamoorthy (instagram) | Krystyne Kontos (linkedin) |

HearMeOut is an experimental workflow project that uses LLMs to generate robot motion commands from natural-language input. Developing an elaborate system in a couple of days was challenging; the outcome was promising but leaves room for improvement. The group is also eager to see upcoming developments in first-principles reasoning models that could bridge this gap and enable robots to respond in real time to complex human interactions and behavioral properties.

The group used inverse kinematics to orchestrate joint rotations and constructed smooth mathematical functions, composed together with principles from ‘The Illusion of Life’, to simulate embodied awareness and intelligence through robotic movements and path trajectories. The team used the Google Speech Recognition API to transcribe audio into text. Python was then used to identify the target robots (“Luna,” “Spot,” or “both”) and the command, while also supporting synonyms (e.g., “home” and “return home” both map to “Home”). Commands included wave, greet, nod, agree, stop, halt, freeze, retreat, bow, bend and others. There was also a speed map that let the voice control the robots’ speed of movement, e.g., fast, quick, rapid, gently, easy, careful, etc.
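The parsing step described above might look something like the following sketch. It takes already-transcribed text as input (the speech recognition call is left out), and it is not the team's code: the way synonyms are grouped under canonical commands, the numeric speed factors, and the default speed are all assumptions made for illustration.

```python
import re

ROBOTS = ("luna", "spot")

# Phrase -> canonical command. Only the "home" mapping is stated in the
# write-up; the other groupings are assumed.
COMMAND_SYNONYMS = {
    "return home": "Home", "home": "Home",
    "wave": "Wave", "greet": "Wave",
    "nod": "Nod", "agree": "Nod",
    "stop": "Stop", "halt": "Stop", "freeze": "Stop",
    "retreat": "Retreat",
    "bow": "Bow", "bend": "Bow",
}

# Speed word -> fraction of full speed (hypothetical values).
SPEED_WORDS = {
    "fast": 1.0, "quick": 1.0, "rapid": 1.0,
    "gently": 0.25, "easy": 0.25, "careful": 0.25,
}
DEFAULT_SPEED = 0.5


def parse_command(text):
    """Extract target robots, a canonical command, and a speed factor."""
    words = re.findall(r"[a-z]+", text.lower())
    normalized = " ".join(words)

    if "both" in words:
        targets = list(ROBOTS)
    else:
        targets = [name for name in ROBOTS if name in words]

    command = None
    # Check longer phrases first so "return home" is matched as one phrase.
    for phrase in sorted(COMMAND_SYNONYMS, key=len, reverse=True):
        if re.search(r"\b" + re.escape(phrase) + r"\b", normalized):
            command = COMMAND_SYNONYMS[phrase]
            break

    speed = next((SPEED_WORDS[w] for w in words if w in SPEED_WORDS), DEFAULT_SPEED)
    return {"targets": targets, "command": command, "speed": speed}


result = parse_command("Luna, bow gently")
```

With these assumed tables, `result` holds the target `luna`, the command `Bow`, and a speed factor of 0.25. An utterance with no recognised command returns `None` for the command, which the caller can treat as "do nothing".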

Since none of the LLMs understand RAPID code, using an LLM directly would not work because it doesn’t know the syntax; a middleware script could serve as a translation layer. Grasshopper was used as a tool for validation as opposed to a tool for design (the kiss). An animation challenge was that visual animations do not follow physical rules, which meant finding ways to convert animation rules into physical reality. No noise isolation is included here, and rigorous safety checks would be required if the commands also involve parameters like speed that change with tonal inflection. This prompted the team to devise a dedicated rotation limit for each of the six axes to ensure the movement doesn’t cause a collision.
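A translation layer of this kind, combined with per-axis limits, could be sketched as below. The limit values are hypothetical placeholders rather than the team's numbers or the robot's datasheet limits, and the emitted line assumes the standard RAPID `MoveAbsJ` form with the predefined `v100`, `z10` and `tool0` data.

```python
# Hypothetical per-axis rotation limits in degrees (axis 1 to axis 6).
# Real limits depend on the robot model and the cell layout.
JOINT_LIMITS_DEG = [
    (-90.0, 90.0),
    (-45.0, 60.0),
    (-60.0, 60.0),
    (-120.0, 120.0),
    (-90.0, 90.0),
    (-180.0, 180.0),
]


def clamp_joints(joints_deg):
    """Clamp each of the six joint angles into its allowed range."""
    if len(joints_deg) != len(JOINT_LIMITS_DEG):
        raise ValueError("expected exactly six joint angles")
    return [
        max(low, min(high, angle))
        for angle, (low, high) in zip(joints_deg, JOINT_LIMITS_DEG)
    ]


def to_rapid_move_abs_j(joints_deg, speed="v100", zone="z10", tool="tool0"):
    """Translate joint angles into one RAPID MoveAbsJ instruction string."""
    axes = ",".join(f"{angle:.2f}" for angle in clamp_joints(joints_deg))
    # 9E9 marks the external axes as unused.
    return f"MoveAbsJ [[{axes}],[9E9,9E9,9E9,9E9,9E9,9E9]], {speed}, {zone}, {tool};"


# Axis 5 is requested at 200 degrees and gets clamped to the 90 degree limit.
line = to_rapid_move_abs_j([0, 0, 0, 0, 200, 0])
```

Clamping keeps the robot moving but silently changes the requested pose; rejecting out-of-range commands outright may be the safer choice when voice input is noisy.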