Earlier this year, we started thinking about “curated workshops”, an opportunity to bring people together to work for a very short period and share their creations. These would involve setting up a team, inviting a few high-profile individuals and opening up submissions for participation. When Héctor Ayuso approached me to give a talk at OFFF, I thought that, instead of talking about CAN, this would be an excellent opportunity to do something more: run a workshop and use the workshop material as the content to drive the talk. Héctor and I agreed, and ‘Workshop Collaborative’ was born.

What was the aim of “Workshop Collaborative”?

1. Initiate collaborations between those who share common interests.

2. Create a playing field, both physical and virtual.

3. Allow ideas to evolve by asking questions.

When we announced the workshop back in January, we also opened applications for participation. In total, 80 applications were submitted and 11 participants were chosen by the team, which included Aaron Koblin, Ricardo Cabello (mr.doob), myself and Eduard Prats Molner.

The participants included:

Marek Bereza, Alba G. Corral, Andreas Nicolas Fischer, Martin Fuchs, Roger Pujol Gomez, Marcin Ignac, Rainer Kohlberger, Thomas Mann, Joshua Noble, Roger Pala and Philip Whitfield.

Programme – Single Day

09:00 – 10:00 Introductions / Teams

10:00 – 13:30 Stage 1

13:30 – 14:00 Lunch

14:00 – 19:00 Stage 2 (Completion)

Total creation time: 6.5 hours

A few weeks before the workshop, Aaron and I decided on four themes that we would allow to influence the work we would be making. By allowing the other participants to comment and give feedback on these themes, we would discover the areas we all wanted to explore. The themes included:

1. Digital Ecosystem – Build an application, an organism of information, sound and visuals, a digital ecosystem that flows through different mediums and evolves: a “living system” that travels through technology and mutates through tools.

2. Analogue Digital – Explore the notions of physicality in code, using made objects as assets for code: scanned 3D objects, cut paper and cut-outs, traditional 2D scans, 3D objects scanned using flatbed scanners, etc.

3. Projection Mapping – Address projection mapping conceptually. Moving away from technical demos, it is time to question what it all means: surface, source, angle, point projection, scale, form, interaction, and animation.

4. Data re-embodied – Tell stories through juxtaposing data sources and their representation methods. How can we create new meaning, understanding and value from reinterpretation of data?

This by no means meant that we would have to choose one theme over another. The purpose was to get a feel for where the interest lay among the participants, to set up, so to say, a ‘playing field’, and to allow first ideas to develop. We knew that, working together for a single day, we would not be able to produce anything of “finished” quality, so we would rather focus on the subjects themselves and see what came out.

Following the feedback, a number of keywords were derived to summarise our interests:

ecosystem, data, scan, evolution, input, mutation, osc, node, rhythm, pattern, touch, physical, language, viewport and mobility.

Five projects were developed during the 6.5 hours of work: Kinect > WebGL bridge, Kinect Image Evolved, Input Device, Data Flow and Receipt Racer.

Kinect > WebGL

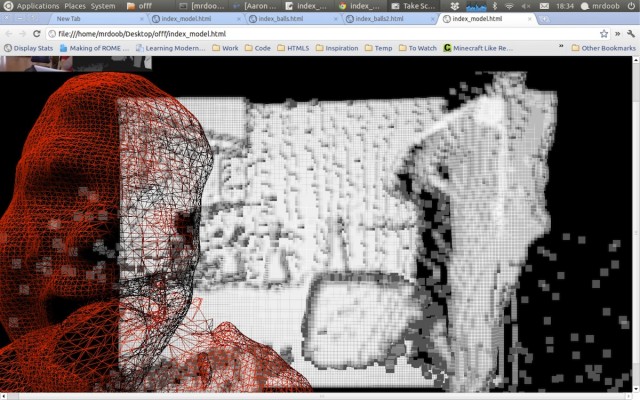

This project was the work of mr.doob, Marcin and Edu, although other people were also involved. The task was to create a bridge between the Kinect and the browser, allowing a real-time feed over the web. Aspirations were much higher than the time allowed. Instead of using a node.js server (which, I understand, was 99% complete anyhow), the team settled for feeding downscaled image data from a Cinder application, over standard HTTP requests, to the three.js script, which read the images at about 10 frames per second. Several rendering styles are presented below. The first one is just a simple point cloud done by Marcin for debugging, while the rest were done by mr.doob using his amazing three.js engine.
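To get a feel for why downscaling mattered at this polling rate, here is a rough sketch in Python. It is not the team's Cinder or three.js code. The 640x480 frame size, the 11-bit depth range, the step of 4 and the one-byte-per-pixel packing are all assumptions made for illustration; only the "about 10 frames per second" figure comes from the workshop.

```python
def downscale(frame, step):
    """Keep every `step`-th pixel in both directions (nearest-neighbour)."""
    return [row[::step] for row in frame[::step]]


def to_payload(frame, max_depth):
    """Pack depth values into one byte per pixel, row by row."""
    return bytes(min(255, value * 255 // max_depth) for row in frame for value in row)


# Assumed numbers, not taken from the workshop code.
WIDTH, HEIGHT = 640, 480   # assumed depth frame size
STEP = 4                   # assumed downscale factor
FPS = 10                   # roughly the polling rate mentioned above
MAX_DEPTH = 2047           # assumed 11-bit raw depth range

# Synthetic depth data standing in for a real Kinect frame.
frame = [[(x + y) % (MAX_DEPTH + 1) for x in range(WIDTH)] for y in range(HEIGHT)]

small = downscale(frame, STEP)
payload = to_payload(small, MAX_DEPTH)
bytes_per_second = len(payload) * FPS

print(len(small[0]), len(small), len(payload), bytes_per_second)
```

Under these assumptions each HTTP response carries a 160x120 image, which keeps a plain request-per-frame approach within reach of a browser script.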

Download .js code here.

Kinect Image Evolved

While Ricardo was working on the .js part, Marcin was exploring different ways of representing the Kinect image. In an attempt to get away from the standard Kinect point cloud, we developed the idea of applying a slit-scan effect to the point cloud. This means that the Kinect point cloud was dispersed along a time-lapse, with different bands representing different moments in time. Marcin also explored what happens if the point location is reversed when a particular depth is reached. The videos below show both effects.
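The two effects can be sketched in a few lines of Python. This is an illustration of the idea only, not Marcin's code: the band layout (horizontal bands, one frame older per band) and the reading of "reversed" as mirroring a depth value around a threshold are both assumptions.

```python
from collections import deque


def slit_scan(history, band_height):
    """Build one frame whose horizontal bands come from different moments.

    `history[0]` is the newest frame, `history[1]` the one before, and so on.
    The top band shows the newest frame; each band below is one frame older.
    """
    rows = len(history[0])
    out = []
    for y in range(rows):
        age = min(y // band_height, len(history) - 1)  # clamp to the oldest frame kept
        out.append(list(history[age][y]))
    return out


def fold_depth(depth, threshold):
    """Mirror a depth value back towards the camera once it passes `threshold`."""
    return depth if depth <= threshold else 2 * threshold - depth


# Toy data: frame number t is a grid of 4 rows by 3 columns filled with the value t.
history = deque(maxlen=4)
for t in range(6):
    history.appendleft([[t] * 3 for _ in range(4)])

scanned = slit_scan(history, band_height=1)
print([row[0] for row in scanned])
print([fold_depth(d, 100) for d in (50, 100, 130)])
```

With real data each frame would be a depth image, and the bands would make moving bodies appear smeared across time.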

Code available soon.

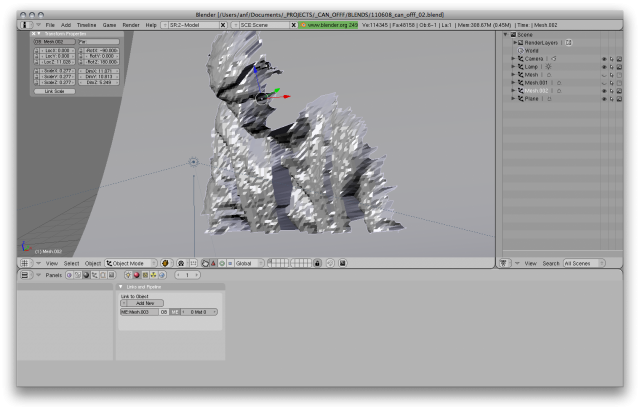

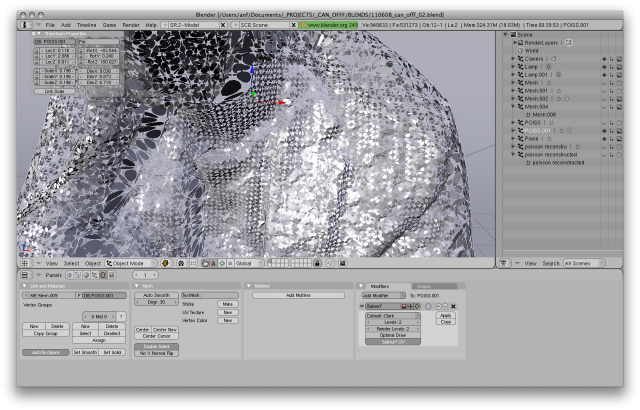

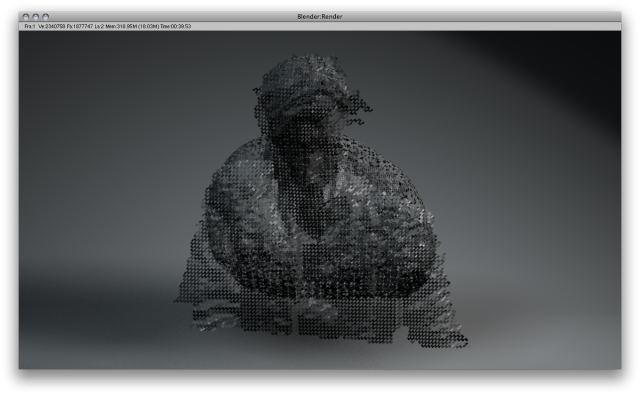

Thomas and Andreas were also testing different tools to manipulate the Kinect image. MeshLab and Blender were used to pull in Kinect point clouds and convert them into meshes, which could then be rendered, distorted, split, etc.

—

Input Device

Marcin was also working on controlling the input, i.e. how one could interact with the Kinect point cloud. We were toying with the idea of assigning different devices, over OSC, to different Kinect body parts. This would allow each individual to be assigned a unique element of the point cloud and to interact with it. The first step was to use simple gyroscope data sent from an iPhone over OSC. The video below shows what is happening. Meanwhile, Rainer and Roger were working on the iPhone application that would send the OSC data. Rather than just using a gyro or accelerometer, Rainer was exploring different forms of interaction with the device, seeing whether a language could be evolved, one that would somehow enhance emotional attachment to the Kinect body parts. The videos below show an instrument-like application that also has audio feedback.
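For readers who have not looked inside OSC, the sketch below shows roughly what one gyroscope reading looks like on the wire, following the OSC 1.0 message layout (padded address string, padded type-tag string, big-endian 32-bit floats). The address `/hand/left/gyro` and the three values are hypothetical; the workshop applications may well have used different addresses and argument types.

```python
import struct


def osc_string(text):
    """Encode an OSC string: ASCII bytes, a null terminator, padded to a multiple of 4."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address, *floats):
    """Encode an OSC message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(floats)
    arguments = struct.pack(f">{len(floats)}f", *floats)  # big-endian float32
    return osc_string(address) + osc_string(type_tags) + arguments


# Hypothetical address: one device steering one tracked body part.
packet = osc_message("/hand/left/gyro", 0.5, -1.0, 0.25)
print(len(packet), packet[:16])
```

A packet like this would typically be sent as a single UDP datagram. Giving each device its own address is one simple way to route it to a particular body part on the receiving side.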

Code available soon.

Data Flow

With all the data moving around, Marek wondered what would happen if the input and output of a process were in the same medium, so that you could compare them, apples for apples. He looked at the process of the loop by examining the image obtained by subtracting the initial input from the output, so we’re just left with the parts that change. For the loop algorithm, JPEG compression was chosen because it was easily available in openFrameworks (oF) and ubiquitous enough to warrant investigation. The boxy images are a result of feeding the JPEG “high” quality compression back into itself and subtracting it from the original. The finer images use the “best” compression setting. Marek then tried the same thing with sound (using Logic), taking first the original sound, then the encoded version, and listening to what was left. You can hear all the sounds below.
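The loop-and-subtract idea can be sketched without an image library. In the Python below, coarse quantisation stands in for JPEG compression, which is an assumption made purely to keep the example self-contained; a larger step plays the role of a lower quality setting. The sample values are made up.

```python
def quantise(signal, step):
    """Stand-in lossy codec: snap every sample to the nearest multiple of `step`."""
    return [step * round(value / step) for value in signal]


def residual_after_loop(signal, codec, passes):
    """Run `signal` through `codec` `passes` times, then subtract the original."""
    current = list(signal)
    for _ in range(passes):
        current = codec(current)  # feed the output back in as the next input
    return [after - before for after, before in zip(current, signal)]


# Made-up samples standing in for pixel or audio values.
signal = [0, 11, 23, 37, 64, 91]

coarse = residual_after_loop(signal, lambda s: quantise(s, 16), passes=3)
fine = residual_after_loop(signal, lambda s: quantise(s, 4), passes=3)
print(coarse)
print(fine)
```

The coarse setting leaves larger differences than the fine one, which loosely mirrors the "high" versus "best" comparison. This stand-in gives the same result after the first pass, so the repeated passes only matter for a codec that keeps changing its own output.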

Receipt Racer

The Receipt Racer combines different input and output devices into a complete game. It was made by Martin, Philip and Joshua using a receipt printer (a common device you can see at every convenience store), a small projector, a Sony PS controller and a Mac running a custom openFrameworks application. Print is a static medium, and that is why, Philip, Martin and Josh explain, it was an intriguing challenge to create an interactive game with it. First the team tried to do it with only the printer as the visual representation, but that seemed rather impossible. Then Joshua Noble came up with a small projector, perfect for projecting a car onto a preprinted road. There is no game without an input device, so they were lucky that at least one of them always carries a gamepad. The cables connect back to the laptop running an openFrameworks application the team wrote parts of. The app was entirely programmed during the workshop. Internally it runs something like a basic JS game: just a car driving on a randomly generated race track. It then broadcasts its components to the external devices, prints the street and guesses where the car’s projection is supposed to be in order to perform the hit test. That’s the trickiest part. Everything has to be in sync and needs some calibration in the beginning. The paper also has a little bit of a mind of its own and tends to slide around or curl. But that’s nothing some duct tape and cardboard can’t fix.

It was a lucky day. Somehow everything was just lying around, waiting to be used, even the stand and the plastic holder you would normally put your name badge in at a conference. Even the timing was perfect. Right at the end of the workshop we finished adding details like a little score and the “YOU CRASHED” texts.
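The game logic described above (a random track, one printed line per step, and a hit test against where the projected car should be) might look something like the following Python sketch. It is not the team's openFrameworks code. The paper width, road width, the `#` characters and the `offset` calibration parameter are all hypothetical.

```python
import random


def generate_track(rows, paper_width, road_width, seed=None):
    """Return the left edge of the road for each printed row (a bounded random walk)."""
    rng = random.Random(seed)
    left = (paper_width - road_width) // 2
    track = []
    for _ in range(rows):
        drift = rng.choice((-1, 0, 1))
        left = max(0, min(paper_width - road_width, left + drift))
        track.append(left)
    return track


def render_row(left, paper_width, road_width):
    """One line of text for the printer: '#' is off-road, ' ' is road."""
    return "#" * left + " " * road_width + "#" * (paper_width - left - road_width)


def crashed(track, row, car_x, road_width, offset=0):
    """Hit test: is the car outside the road on the row under the projection?

    `offset` stands for a calibration shift between printer and projector columns.
    """
    left = track[row] + offset
    return not (left <= car_x < left + road_width)


# Hypothetical dimensions: 32 printable columns, a road 8 columns wide.
PAPER_WIDTH, ROAD_WIDTH = 32, 8
track = generate_track(20, PAPER_WIDTH, ROAD_WIDTH, seed=1)
for left in track[:5]:
    print(render_row(left, PAPER_WIDTH, ROAD_WIDTH))
```

The hard part mentioned in the text lives in `row` and `offset`: the game has to guess which printed line currently sits under the projected car, and paper that slides or curls shifts both.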

Project Page (code available)

On Saturday we presented the creations. Even though Erik Spiekermann was presenting in the other OFFF room, we had a full theatre (an estimated 500 people), plus another room where our talk could be watched on a large screen.

Photo above by Arseny Vesnin

CAN would like to thank all the participants at the workshop, as well as Aaron and Ricardo for taking time out of their busy schedules to take part in the workshop. For more information on the workshop and all future information/code/links, see creativeapplications.net/offf2011

We leave you with OFFF Barcelona 2011 Main Titles made for OFFF by PostPanic (full screen recommended).