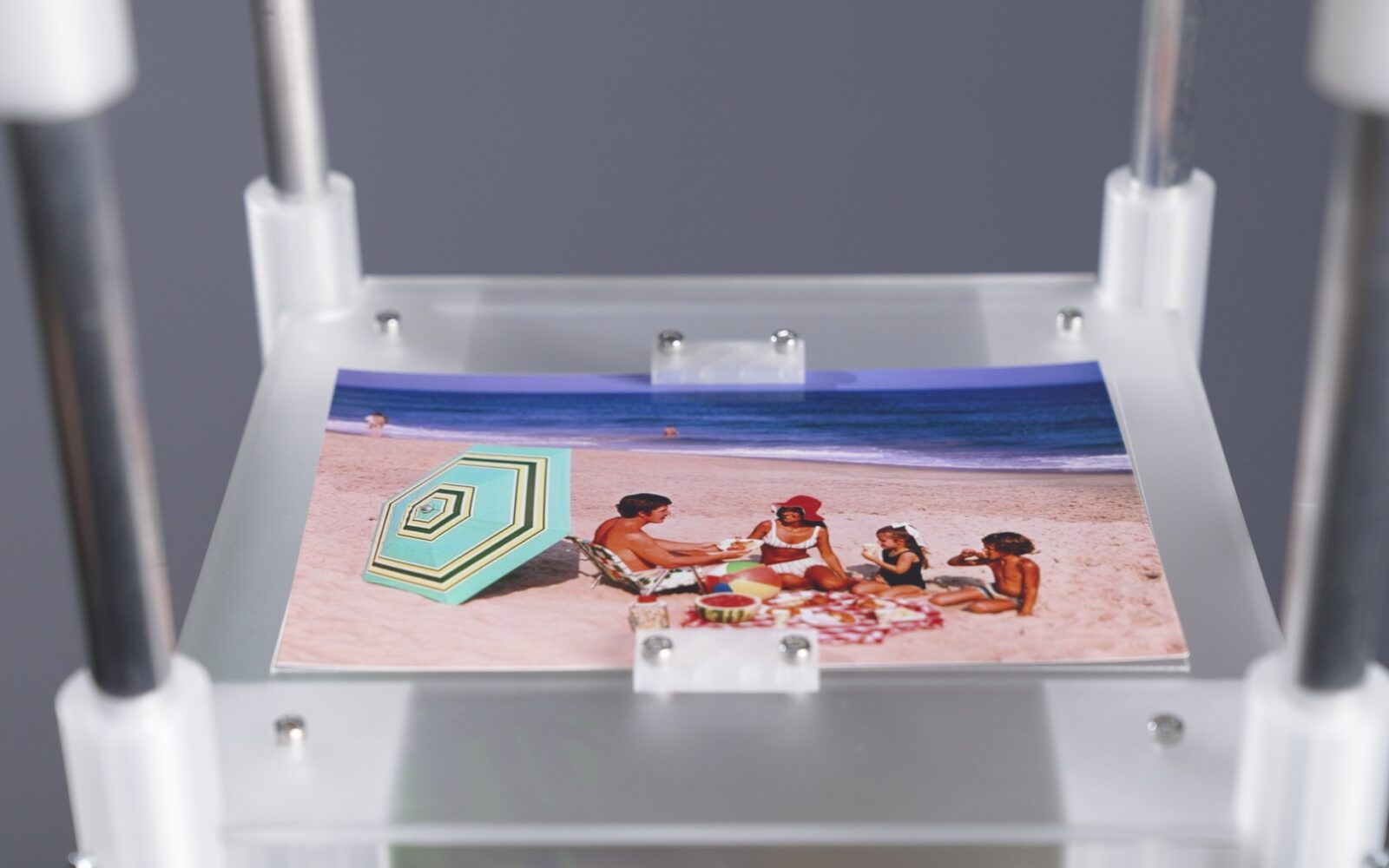

The Anemoia Device is a scent-memory machine that uses generative AI to distill photographs into bespoke fragrances. Guided by language models, a dial-based interface, and a custom olfactory formulation system, each image becomes a singular scent composition — a multisensory memory that evokes anemoia: nostalgia for a moment you never lived.

→ Read our paper in NeurIPS 2025

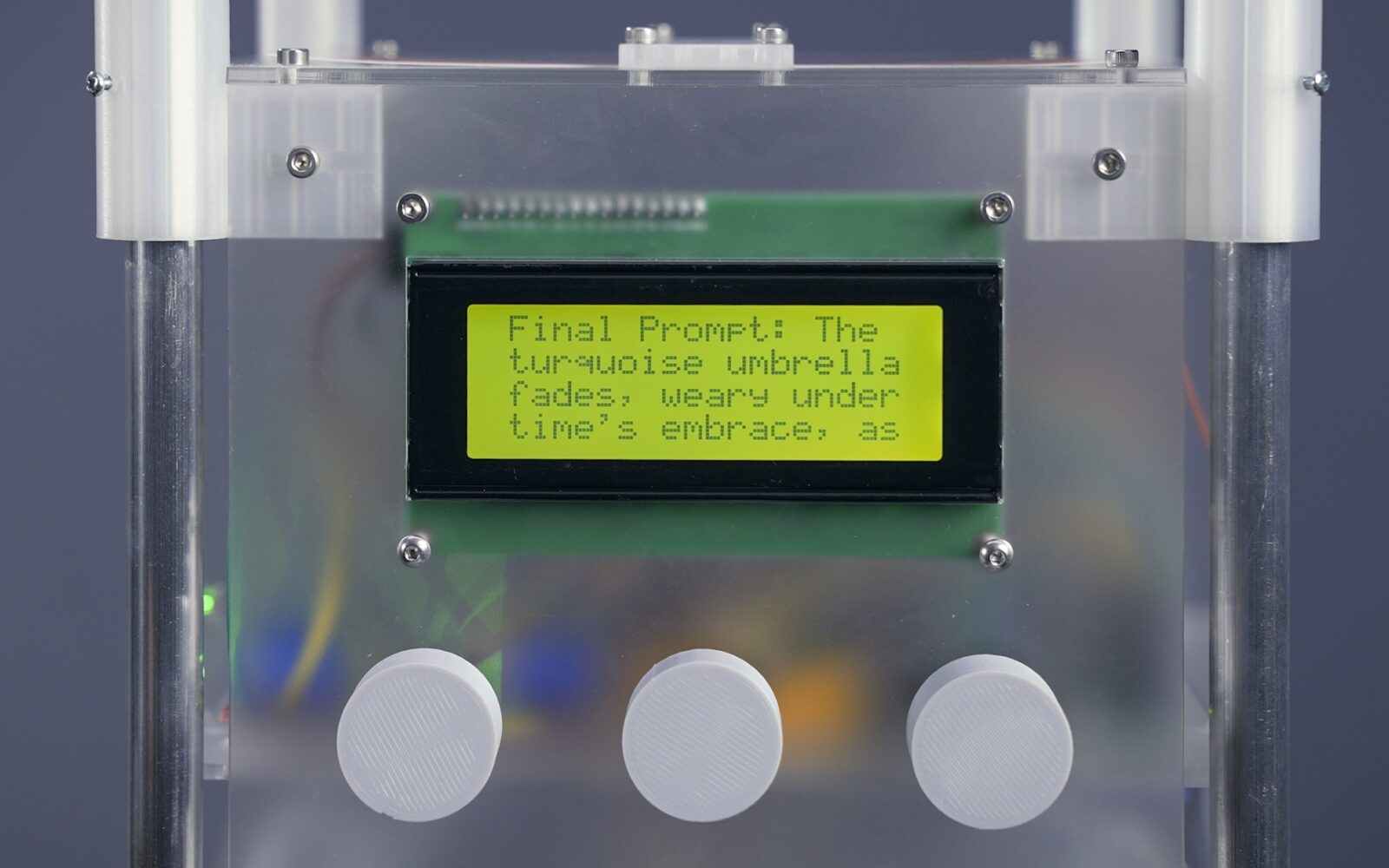

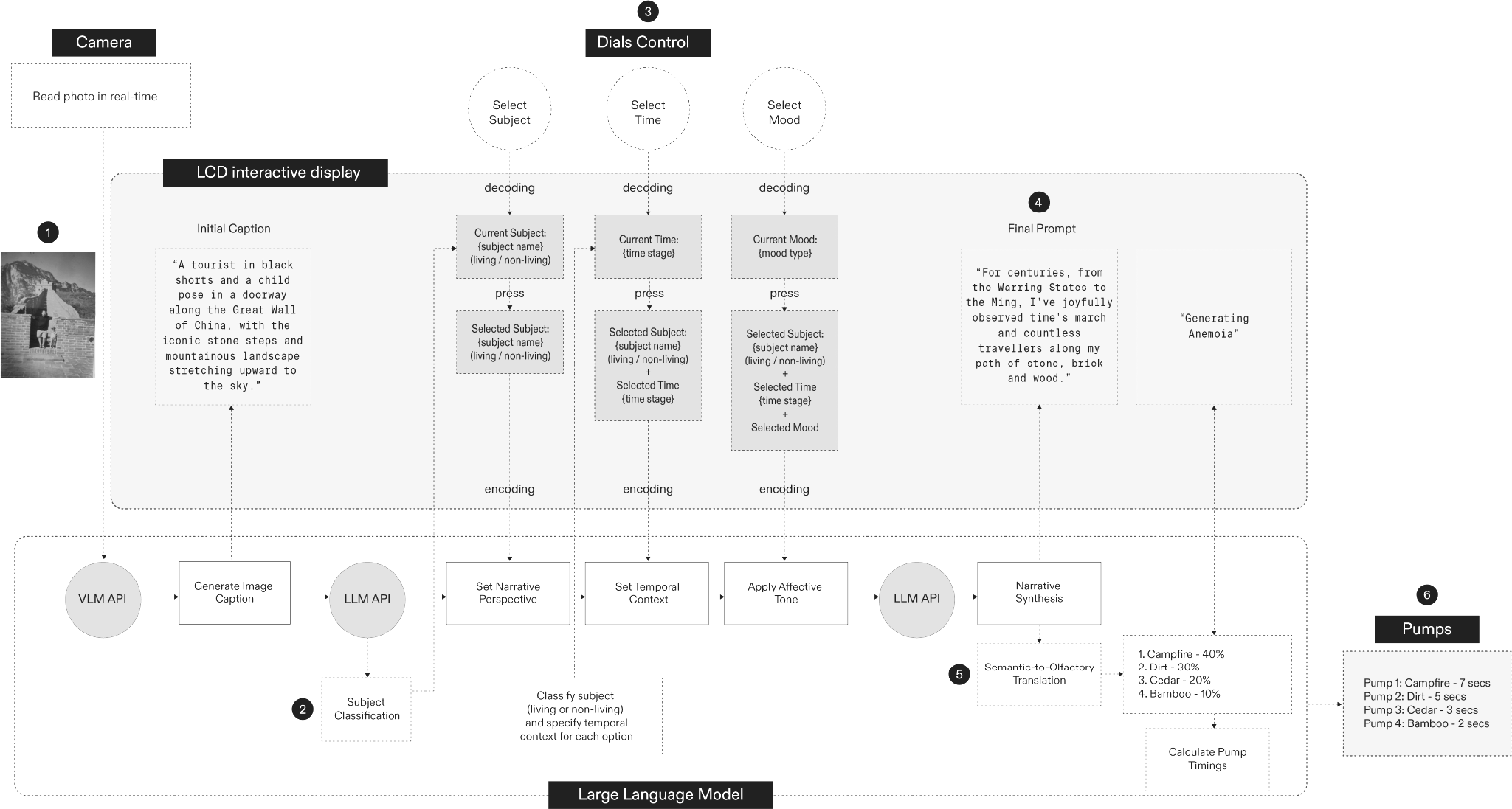

The system begins with a vision-language model (VLM) that interprets an analogue photograph and produces an objective semantic caption displayed on the integrated screen. Three rotary dials then guide the creation of a prompt through constrained inputs:

Dial 1 — Subject (Perspective)

Selects a particular point of view within the scene (e.g. old man, tree, bicycle) and classifies it as living or non-living, which determines the lifecycle stages offered on Dial 2.

Dial 2 — Time (Temporal Context)

Positions the chosen subject within a contextual lifecycle.

Living: childhood, youth, adulthood, elderly

Non-living: raw material, manufacture, in-use, decay

Dial 3 — Mood (Affective Tone)

Assigns an emotional tone: happy, sad, calm, or angry.
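The constrained dial inputs can be pictured as small lookup tables. The sketch below is only an illustration: the option lists come from the descriptions above, while the function names and the idea that a dial reports an integer detent position are assumptions, not details of the device firmware.

```python
# Option sets copied from the dial descriptions above.
LIVING_STAGES = ("childhood", "youth", "adulthood", "elderly")
NON_LIVING_STAGES = ("raw material", "manufacture", "in-use", "decay")
MOODS = ("happy", "sad", "calm", "angry")


def time_options(is_living: bool) -> tuple[str, ...]:
    """Return the Dial 2 options implied by the Dial 1 classification."""
    return LIVING_STAGES if is_living else NON_LIVING_STAGES


def select(options: tuple[str, ...], position: int) -> str:
    """Map a dial position to an option.

    Assumption: the dial reports an integer detent count, and turning
    past the last option wraps around to the first.
    """
    return options[position % len(options)]


if __name__ == "__main__":
    # A non-living subject (e.g. a bicycle) at the fourth lifecycle stage.
    print(select(time_options(False), 3))
    print(select(MOODS, 5))
```

Keeping the options in fixed tuples mirrors the constrained nature of the interface: whatever the dial position, the result is always one of the listed values.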

These inputs are synthesised into a structured prompt for a large language model (LLM), which takes the form of a concise narrative: an interpretive bridge between the photograph and its eventual olfactory form. This narrative is the basis for a cross-modal translation stage in which an LLM produces a scent formula using few-shot in-context learning.
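One reading of this step is that the caption and the three dial values are assembled into a fixed template before being sent to the LLM. The following is a minimal sketch under that assumption; `DialState`, `build_narrative_prompt`, and the template wording are all hypothetical and are not taken from the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DialState:
    """Hypothetical container for the three dial selections."""
    subject: str
    is_living: bool
    time: str
    mood: str


def build_narrative_prompt(caption: str, dials: DialState) -> str:
    """Combine the VLM caption and dial values into one structured prompt.

    The wording of the template is an assumption made for illustration.
    """
    kind = "living" if dials.is_living else "non-living"
    return "\n".join([
        f"Photograph caption: {caption}",
        f"Subject (perspective): {dials.subject} ({kind})",
        f"Time (lifecycle stage): {dials.time}",
        f"Mood (affective tone): {dials.mood}",
        "Write a concise narrative of this moment from the subject's point of view.",
    ])


if __name__ == "__main__":
    state = DialState(subject="bicycle", is_living=False, time="in-use", mood="calm")
    print(build_narrative_prompt("An old man rests beside a bicycle under a tree.", state))
```

A fixed template keeps the free-form part of the interaction inside the model's response, while the inputs themselves stay limited to what the dials allow.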

A curated scent library and an olfactory knowledge base underpin this translation. Each fragrance in the library is defined by semantic descriptors, with examples in the knowledge base pairing narratives with a sparse scent vector and a rationale. The model analyses the generated prompt against these examples and returns a proportional blend of up to four fragrances that align with the prompt’s emotional and thematic structure.
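To make the few-shot translation concrete, the sketch below shows one way the library, the worked examples, and a new narrative could be laid out in a single prompt, and how a returned sparse vector could be reduced to a proportional blend of at most four fragrances. The data layout, the function names, and the fragrance names in the usage example are assumptions for illustration; the actual prompt format and library are not described here.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Example:
    """One knowledge-base entry: narrative, sparse scent vector, rationale."""
    narrative: str
    scent_vector: dict[str, float]  # sparse: only non-zero fragrances listed
    rationale: str


def few_shot_prompt(library: dict[str, str], examples: list[Example], narrative: str) -> str:
    """Lay out library descriptors and worked examples, then the new narrative."""
    lines = ["Fragrance library:"]
    lines += [f"- {name}: {descriptors}" for name, descriptors in library.items()]
    for ex in examples:
        lines += [
            "",
            f"Narrative: {ex.narrative}",
            f"Formula: {json.dumps(ex.scent_vector)}",
            f"Rationale: {ex.rationale}",
        ]
    lines += ["", f"Narrative: {narrative}", "Formula:"]
    return "\n".join(lines)


def to_blend(weights: dict[str, float], max_components: int = 4) -> dict[str, float]:
    """Keep the largest positive weights and rescale them to sum to 1."""
    positive = [(name, w) for name, w in weights.items() if w > 0]
    top = sorted(positive, key=lambda item: item[1], reverse=True)[:max_components]
    total = sum(w for _, w in top)
    if total == 0:
        raise ValueError("formula contains no positive weights")
    return {name: w / total for name, w in top}


if __name__ == "__main__":
    # Hypothetical fragrance names and weights, not the project's library.
    raw = {"moss": 4, "cedar": 2, "rain": 1, "smoke": 1, "leather": 0.5, "citrus": 0}
    print(to_blend(raw))
```

Truncating before normalising means the proportions always sum to one over the fragrances that are actually dispensed.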

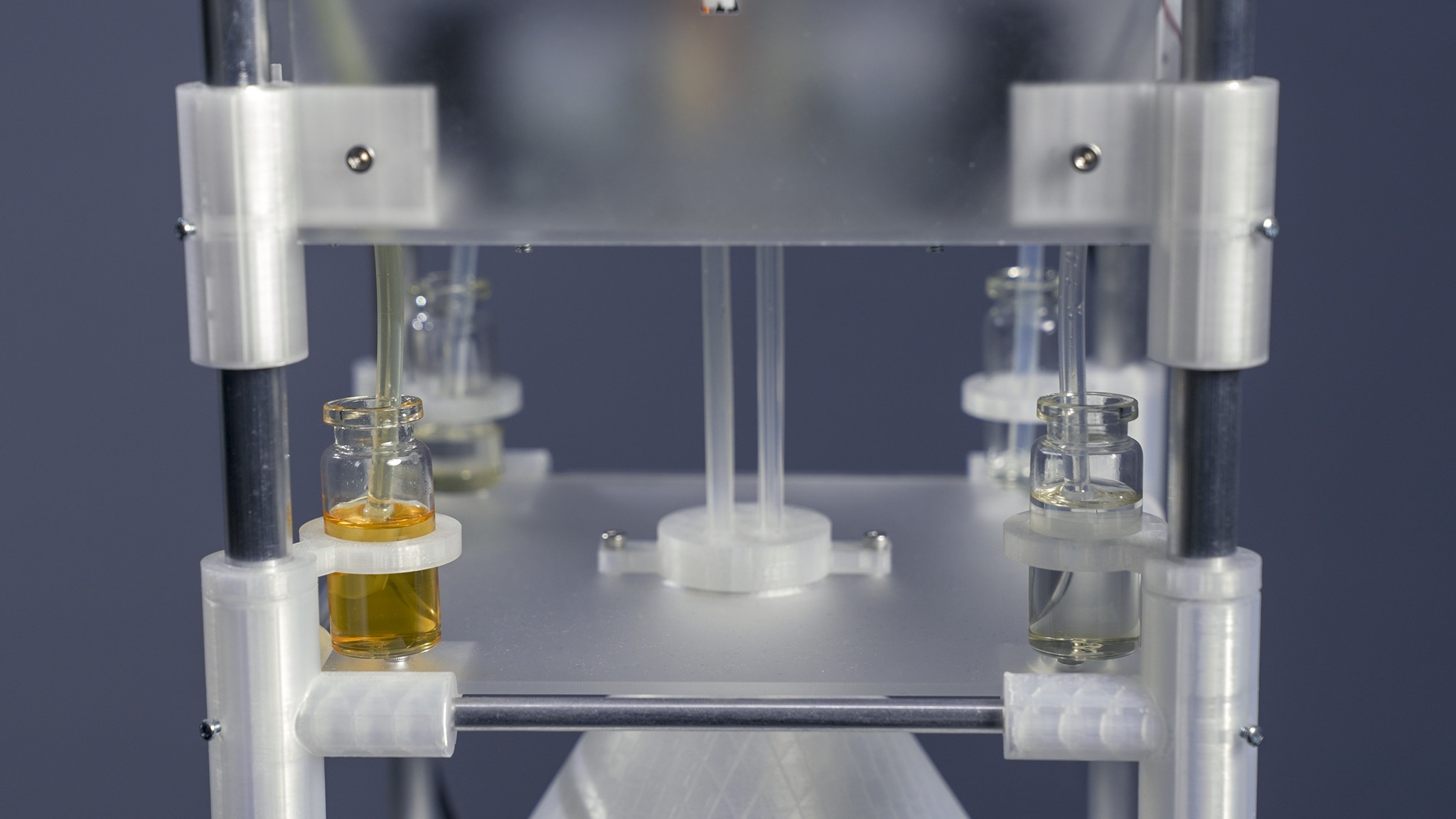

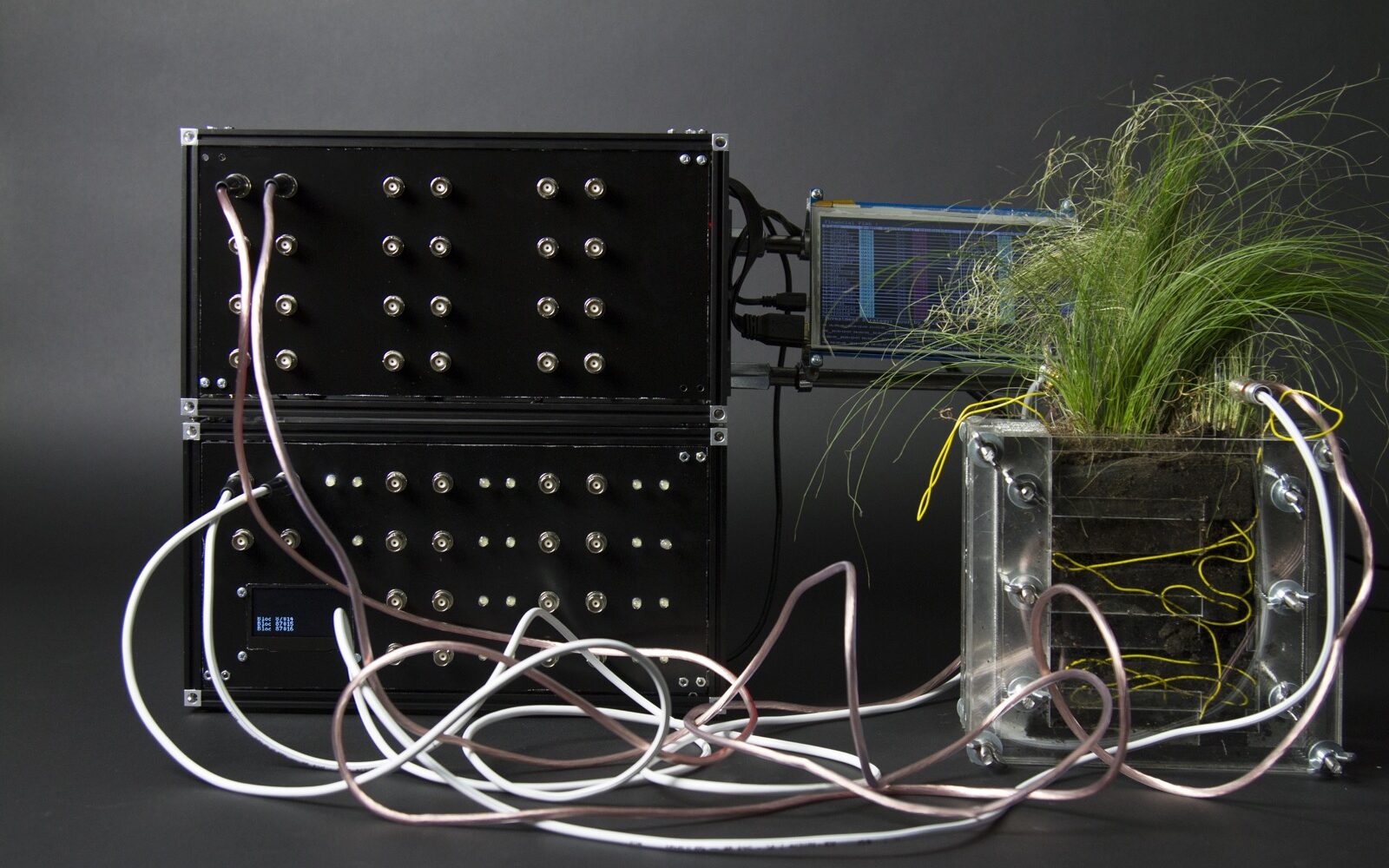

The scent is then materialised through a custom olfactory display. Pump timings are computed from the formula, and peristaltic pumps draw from glass vials and dispense their contents into a shared blending vessel. This process echoes the metaphor of distillation, with memory condensed into olfactory form through a systematic, multi-stage process.
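As a rough illustration of how pump timings might follow from a formula, the sketch below converts each fragrance's share of the blend into a run time for its pump. It assumes every pump delivers a constant, known flow rate and that the blend has a fixed target volume; both values and the function name are hypothetical, and the real firmware may calibrate each pump differently.

```python
def pump_durations(
    blend: dict[str, float],
    total_volume_ml: float,
    flow_rate_ml_per_s: float,
) -> dict[str, float]:
    """Return seconds of run time per pump for a proportional blend.

    Assumptions: proportions sum to 1, and each pump has the same
    constant flow rate (an idealisation of a peristaltic pump).
    """
    if flow_rate_ml_per_s <= 0:
        raise ValueError("flow rate must be positive")
    return {
        name: share * total_volume_ml / flow_rate_ml_per_s
        for name, share in blend.items()
    }


if __name__ == "__main__":
    # Hypothetical values: a 2 mL blend dispensed at 0.5 mL/s.
    blend = {"moss": 0.5, "cedar": 0.25, "rain": 0.25}
    for name, seconds in pump_durations(blend, 2.0, 0.5).items():
        print(f"{name}: run pump for {seconds:.1f} s")
```

Under these assumptions the timing is linear in the proportion, so a fragrance with twice the share simply runs for twice as long.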

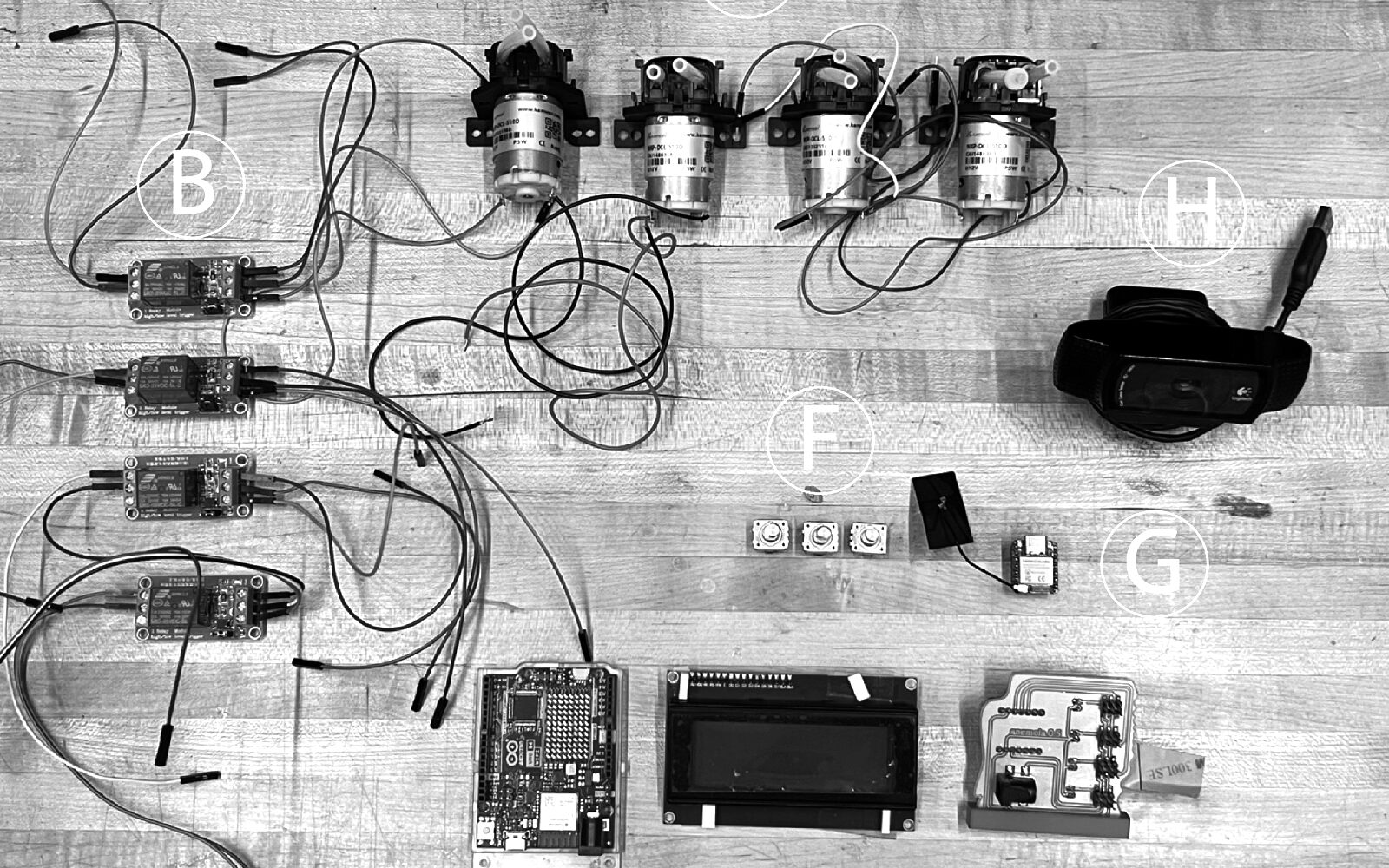

| Pipeline Architecture | Hardware | Software |

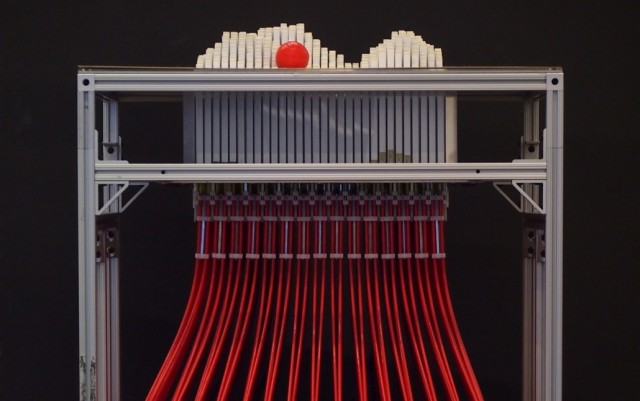

| 1. VLM-based image captioning / segmentation 2. LLM subject classification 3. Dial-based parameter selection (Subject / Time / Mood) 4. Prompt creation via LLM 5. Semantic-to-olfactory mapping using few-shot in-context learning 6. Scent rendering through olfactory display | XIAO ESP32S3 microcontroller Custom CNC-milled PCB 12V DC peristaltic pumps Modular aluminium frame with laser-cut frosted acrylic 3D-printed internal mounts (PLA) Silicone tubing and glass reservoirs | Multi-modal image analysis for semantic extraction LLM pipeline for narrative generation and cross-modal translation Orchestration layer: coordinating vision, language, and olfactory subsystems Embedded control firmware for real-time actuation and pump timing |

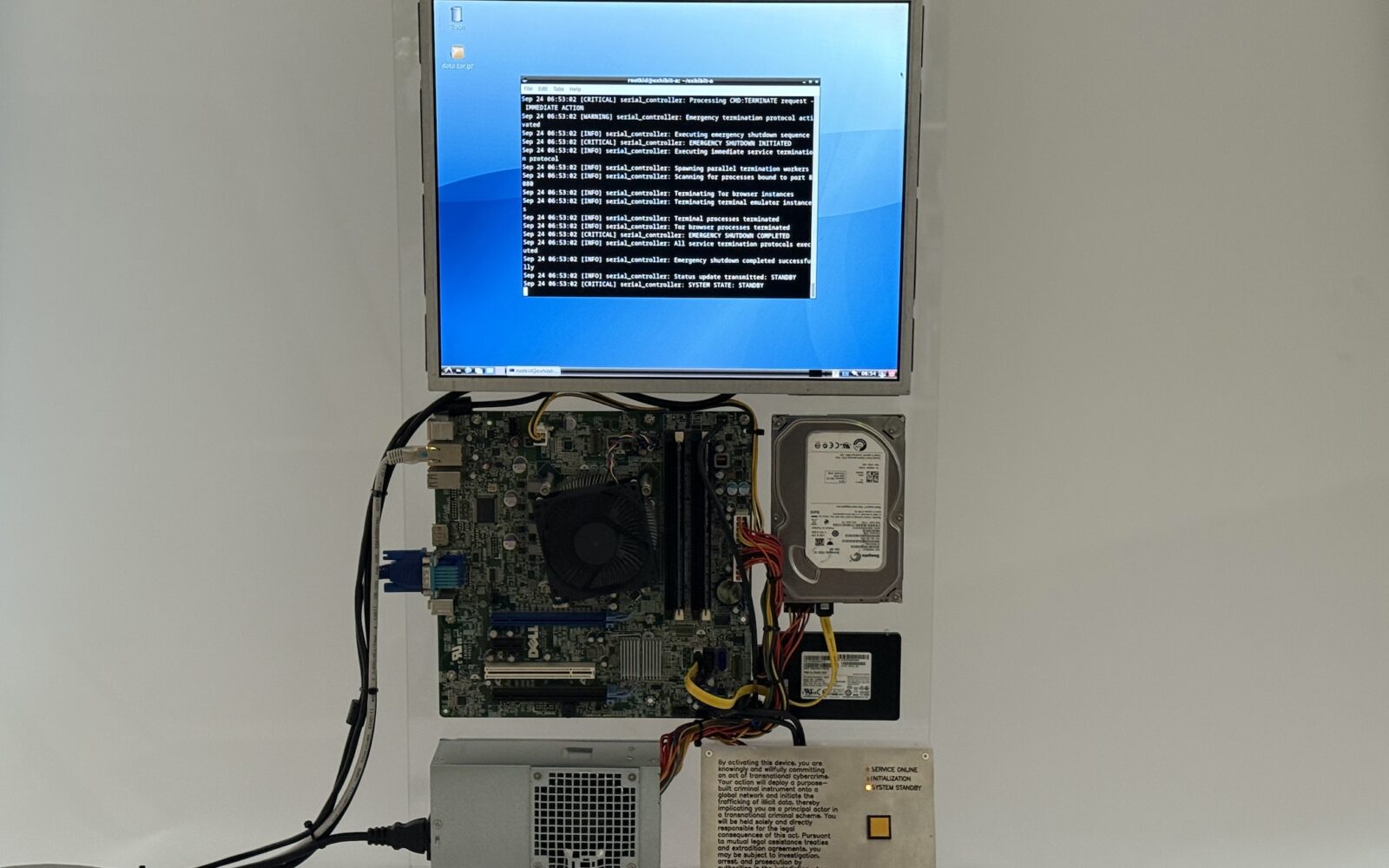

The device itself is vertically structured around the metaphor of distillation: a memory enters at the top and a scent emerges below. Internal subsystems (logic, relays, reservoirs, pumps) are physically separated to minimise interference. Structural elements blend CNC-milled PCBs, 3D-printed joints, and laser-cut acrylic for a layered, translucent aesthetic that echoes the machine’s conceptual focus on memory, transformation, and atmosphere.


Development of the device is led by MIT Media Lab researcher Cyrus Clarke at the Tangible Media Group. The Anemoia Device demonstrates how multimodal AI systems can be orchestrated into a cohesive pipeline that spans perception, narrative generation, semantic mapping, and material output, forming a fully tangible system for computational memory-making.