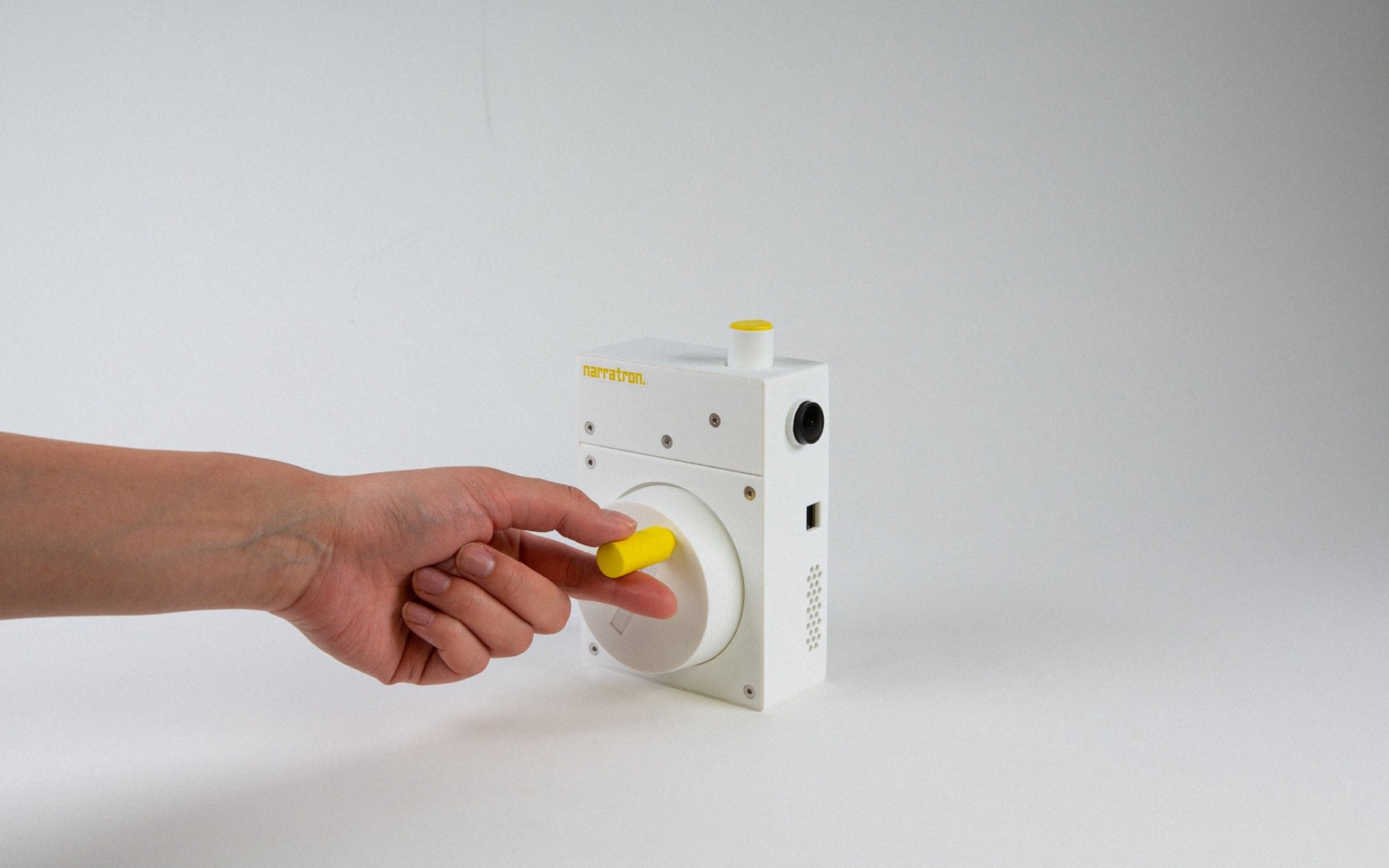

Created by Melo Chen & Nomy Yu for 4.043/4.044 Interaction Intelligence, a course at MIT taught by Marcelo Coelho, Noema is a large language object (LLO) that segments and reconstructs human perception through spatialized audio experiences such as ambient cues, storytelling, and music. By shifting perception from sight to sound, Noema turns AI into a sensory extension: an inner voice that sees, interprets, speaks, and even questions.

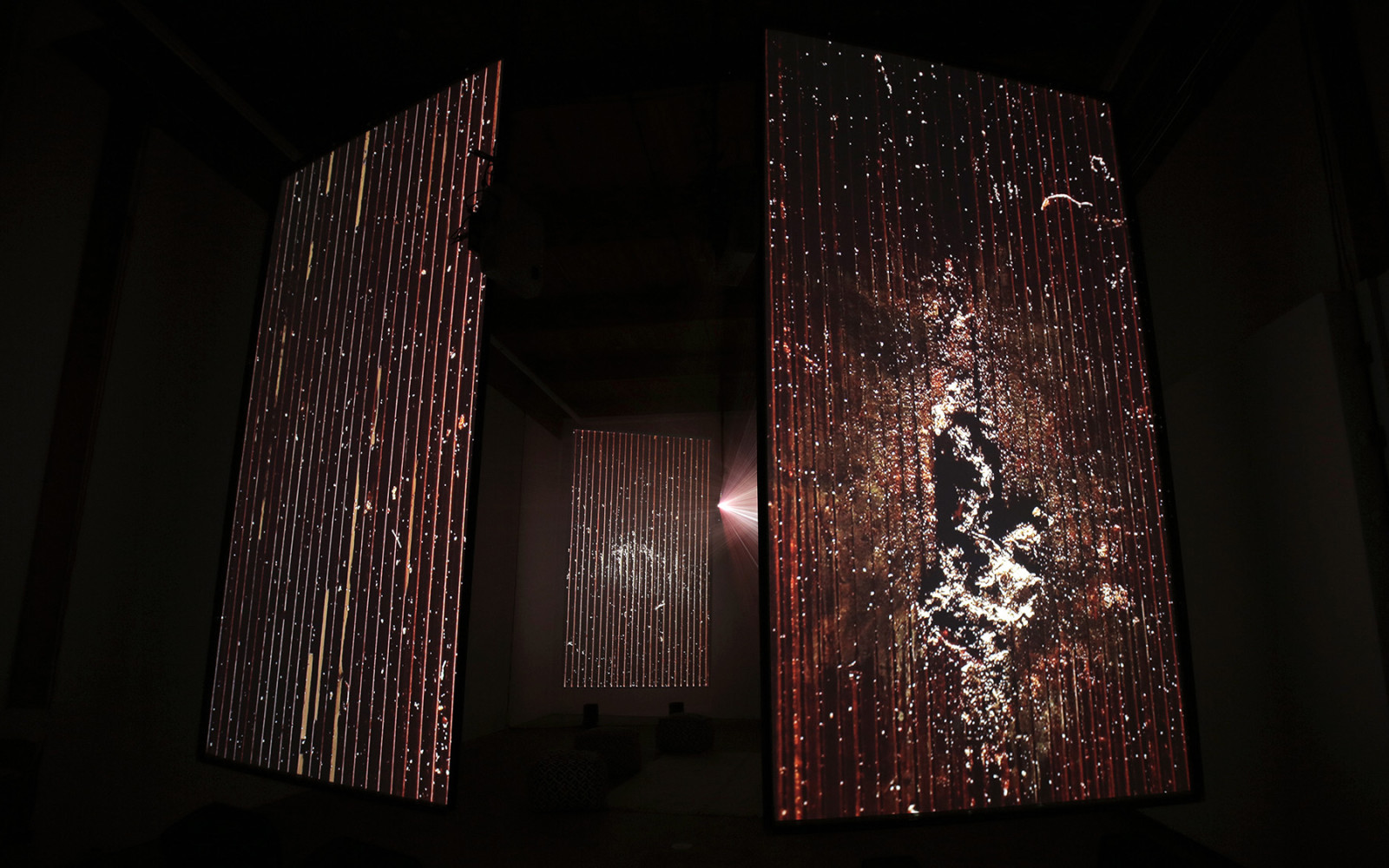

Activated when the user closes their eyes, Noema captures environmental data via cameras and generates real-time, spatially oriented audio narratives. This approach challenges the conventional expectation of AI precision, embracing ambiguity, hallucination, and imagination to foster a co-constructed, multisensory reality. The work explores the potential of embodied AI to augment human perception and cognition, offering insights into the design of future AI-integrated wearable technologies.

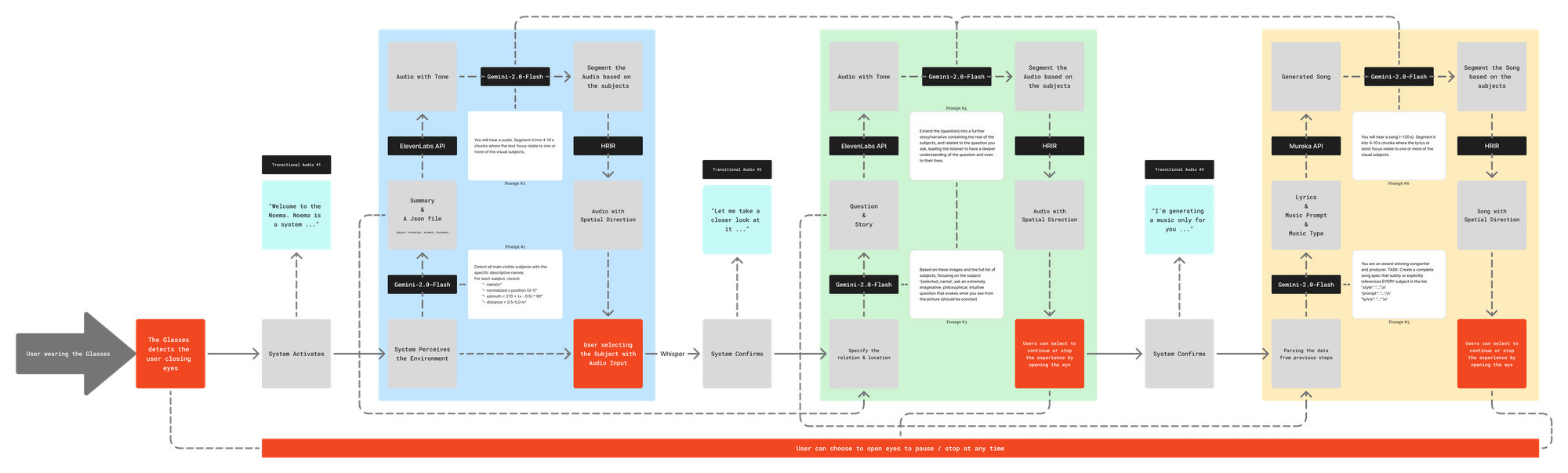

The project follows a system-building approach with iterative testing. The methodology comprises five key stages: eye status detection through a multimodal large language model (MLLM), subject recognition and summary generation, narrative segmentation through an MLLM, dynamic spatialization, and interactive audio playback with music generation.
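The stages above are described only at a high level. As a rough illustration of what the dynamic spatialization stage could involve, the sketch below places each narrative segment in stereo space according to where its subject appears in the camera frame. Everything in it is an assumption rather than Noema's documented implementation: the hypothetical `Segment` structure, the 90° camera field of view, the linear frame-position-to-azimuth mapping, and the sine/cosine constant-power pan law. A real system might instead use binaural (HRTF-based) rendering.

```python
import math
from dataclasses import dataclass


@dataclass
class Segment:
    """One narrative segment tied to a detected subject (hypothetical structure)."""
    text: str
    x_center: float  # subject's horizontal position in the frame: 0.0 = left edge, 1.0 = right edge


def azimuth_from_x(x_center: float, fov_deg: float = 90.0) -> float:
    """Map a normalized horizontal frame position to an azimuth in degrees.

    Assumes the camera faces the user's forward direction, so the frame center
    maps to 0 degrees and the frame edges map to -fov/2 (left) and +fov/2 (right).
    """
    return (x_center - 0.5) * fov_deg


def constant_power_pan(azimuth_deg: float, fov_deg: float = 90.0) -> tuple[float, float]:
    """Return (left_gain, right_gain) using a sine/cosine constant-power pan law.

    left_gain**2 + right_gain**2 == 1, so perceived loudness stays roughly
    constant as a segment moves across the stereo field.
    """
    # Normalize azimuth to a pan position p in [0, 1], clamped to the field of view.
    p = min(max(azimuth_deg / fov_deg + 0.5, 0.0), 1.0)
    theta = p * math.pi / 2
    return math.cos(theta), math.sin(theta)


def spatialize(segments: list[Segment], fov_deg: float = 90.0) -> list[tuple[str, float, float, float]]:
    """Attach an azimuth and stereo gains to each segment, ordered left to right."""
    rows = []
    for seg in sorted(segments, key=lambda s: s.x_center):
        az = azimuth_from_x(seg.x_center, fov_deg)
        left, right = constant_power_pan(az, fov_deg)
        rows.append((seg.text, az, left, right))
    return rows


if __name__ == "__main__":
    # Illustrative segments; in the described pipeline these would come from
    # the subject recognition and narrative segmentation stages.
    demo = [
        Segment("A cyclist passes on the right.", 0.9),
        Segment("A tree sways straight ahead.", 0.5),
        Segment("Someone laughs to the left.", 0.1),
    ]
    for text, az, left, right in spatialize(demo):
        print(f"{az:+6.1f} deg  L={left:.3f}  R={right:.3f}  {text}")
```

Sorting segments left to right is only one possible playback order; in practice the order would more likely come from the narrative segmentation stage, with spatialization applied to each segment independently.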

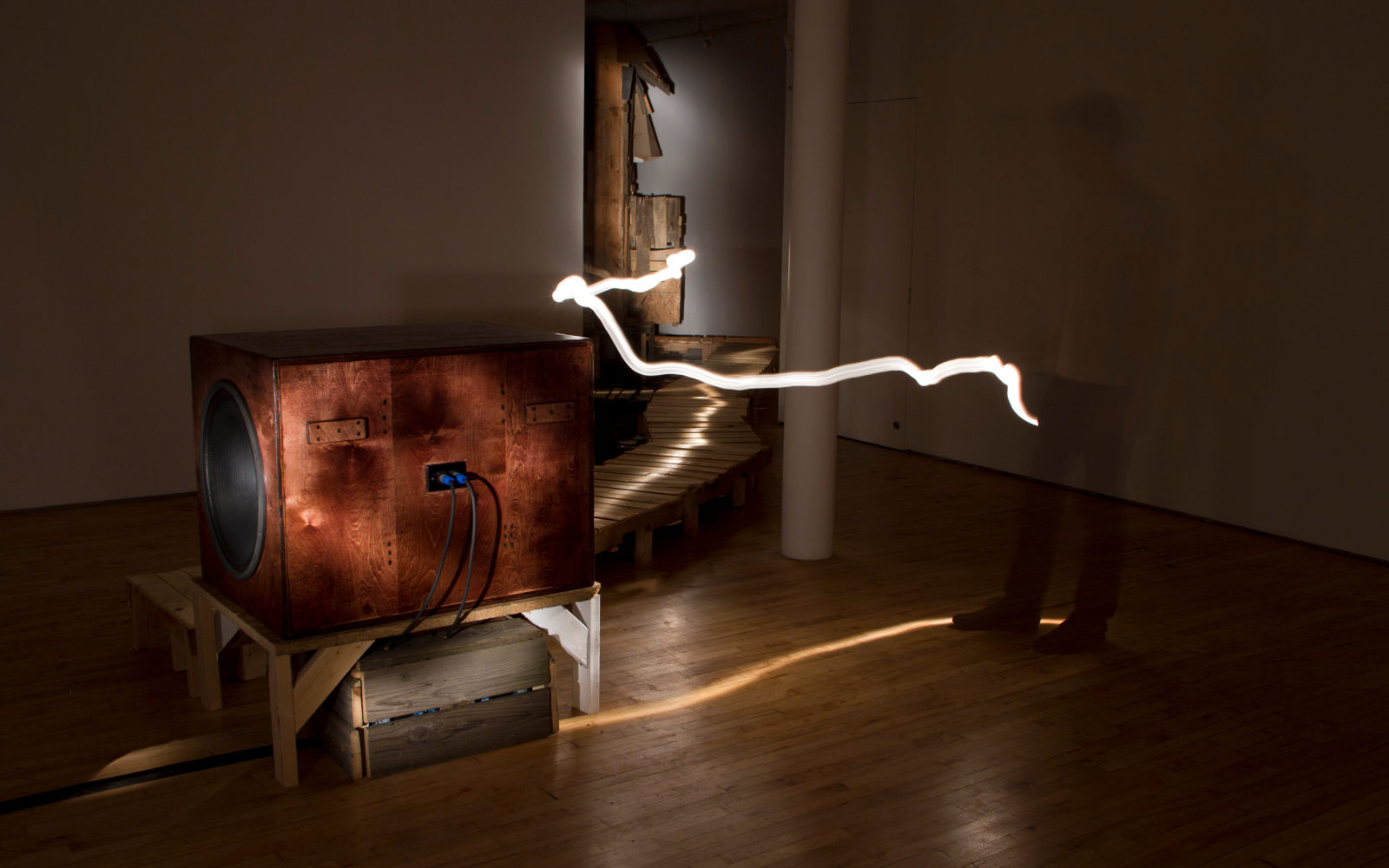

Noema does not seek to reproduce reality with accuracy, but rather to amplify, bend, and recontextualize it through sound, inviting users to sense the world not just as it is, but as it might be perceived.

Melo Chen & Nomy Yu

Noema also prompts broader questions about the future of human–AI relationships. What does it mean when AI not only responds to our input, but perceives and interprets the world with us and for us? By framing hallucination and sensory ambiguity as design opportunities rather than limitations, Melo and Nomy argue for a more poetic, affective, and multisensory approach to embodied AI. This work is only a starting point: they envision future iterations of Noema enabling dynamic and mobile user experiences that further blur the boundary between body, machine, and mind.

Project Page | Melo Chen | Nomy Yu | Design Intelligence at MIT

The project was supported by William McKenna and TAs Sergio Mutis and Xdd.